Math Is Fun Forum

#1751 2023-04-26 16:23:39

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 53,741

Re: Miscellany

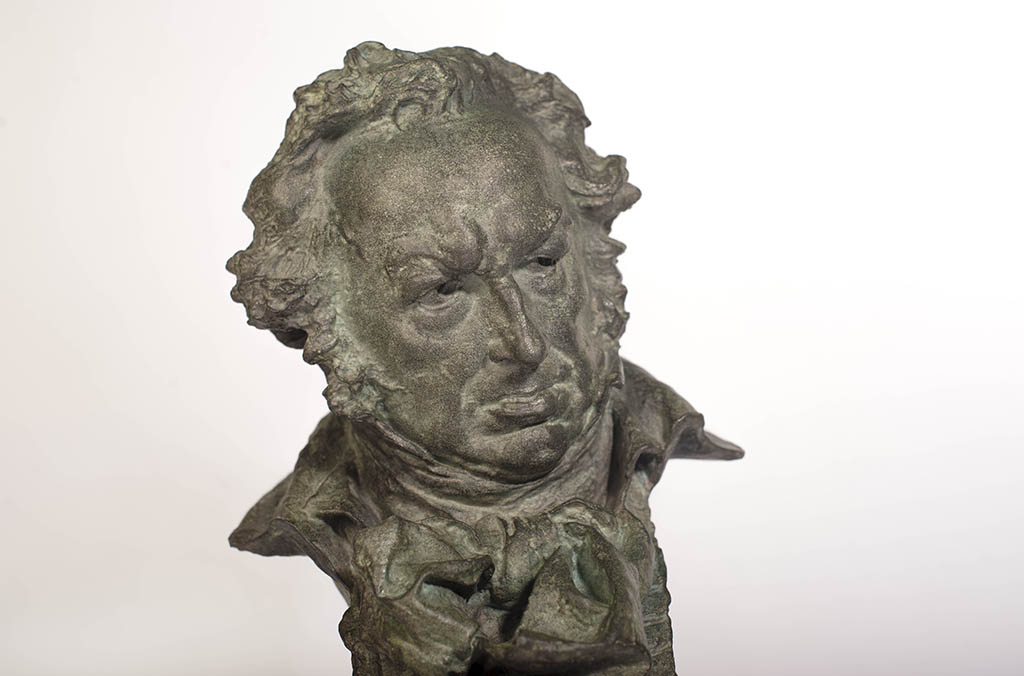

1754) Goya Awards

Gist

The Goya Awards (Spanish: Premios Goya) are Spain's main national annual film awards, commonly referred to as the Academy Awards of Spain. The awards were established in 1987, a year after the founding of the Academy of Cinematographic Arts and Sciences, and the first awards ceremony took place on March 16, 1987 at the Teatro Lope de Vega, Madrid. The ceremony continues to take place annually at Centro de Congresos Príncipe Felipe, around the end of January/beginning of February, and awards are given to films produced during the previous year.

Summary

Goya Awards: The Spanish Academy of Motion Picture Arts and Sciences gives out these prestigious awards every year to the best professionals in the different technical and creative categories in Spanish filmmaking. Its first edition took place in 1987. It has 28 different categories as well as the Goya of Honour, which recognises a whole lifetime dedicated to films. Some of the people who have won this award have been actors like Manuel Alexandre, José Sacristán, Ángela Molina, Pepa Flores (Marisol) and Alfredo Landa, or directors such as Mario Camus. Internationally renowned professionals such as Pedro Almodóvar, Amenábar, Carmen Maura, Antonio Banderas, Javier Bardem and Penélope Cruz have also been award winners. It is, therefore, an award that celebrates the quality of Spanish films.

Details

The Goya Awards (Spanish: Premios Goya) are Spain's main national annual film awards, commonly referred to as the Academy Awards of Spain.

The awards were established in 1987, a year after the founding of the Academy of Cinematographic Arts and Sciences, and the first awards ceremony took place on March 16, 1987, at the Teatro Lope de Vega in Madrid. The ceremony continues to take place annually at Centro de Congresos Príncipe Felipe, around the end of January/beginning of February, and awards are given to films produced during the previous year.

The award itself is a small bronze bust of Francisco Goya created by the sculptor José Luis Fernández, although the original sculpture for the first edition of the Goyas was by Miguel Ortiz Berrocal.

History

To reward the best Spanish films of each year, the Spanish Academy of Motion Pictures and Arts decided to create the Goya Awards. The Goya Awards are Spain's main national film awards, considered by many in Spain, and internationally, to be the Spanish equivalent of the American Academy Awards. The inaugural ceremony took place on March 17, 1987, at the Lope de Vega theatre in Madrid. From the 2nd edition until 1995, the awards were held at the Palacio de Congresos in the Paseo de la Castellana. Then they moved to the similarly named Palacio Municipal de Congresos, also in Madrid. In 2000, the ceremony took place in Barcelona, at the Barcelona Auditorium. In 2003, a large number of film professionals took advantage of the Goya Awards ceremony to express their opposition to the Aznar government's support of the U.S. invasion of Iraq. In 2004, the AVT (an association against terrorism in Spain) demonstrated against terrorism and ETA, a paramilitary organization of Basque separatists, in front of the Lope de Vega theatre. In 2005, José Luis Rodríguez Zapatero became the first prime minister in the history of Spain to attend the event. In 2013, the minister of culture and education, José Ignacio Wert, did not attend, saying he had “other things to do”. Some actors said that this decision reflected the government's lack of respect for their profession and industry. The 2019 edition took place in Seville, and in 2020 the ceremony was held in Málaga.

Awards

The awards are currently presented in 28 categories, excluding the Honorary Goya Award and the International Goya Award. The maximum number of nominees per category was raised to five for the 37th edition. Before that, there were three nominees per category in the first edition, five in the 2nd and 3rd editions, three from the 4th to the 12th edition, and four from the 13th to the 36th edition.

It appears to me that if one wants to make progress in mathematics, one should study the masters and not the pupils. - Niels Henrik Abel.

Nothing is better than reading and gaining more and more knowledge - Stephen William Hawking.

#1752 2023-04-26 22:20:33

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 53,741

Re: Miscellany

1755) Data Entry

Summary

Data processing is manipulation of data by a computer. It includes the conversion of raw data to machine-readable form, flow of data through the CPU and memory to output devices, and formatting or transformation of output. Any use of computers to perform defined operations on data can be included under data processing. In the commercial world, data processing refers to the processing of data required to run organizations and businesses.

Details

Data entry is the process of digitizing data by entering it into a computer system for organization and management purposes. It is a person-based process and is "one of the important basic" tasks needed when no machine-readable version of the information is readily available for planned computer-based analysis or processing.

Sometimes what is needed is "information about information (that) can be greater than the value of the information itself." It can also involve filling in required information which is then "data-entered" from what was written on the research document, such as the growth in available items in a category. This is a higher level of abstraction than metadata, "information about data." Common errors in data entry include transposition errors, misclassified data, duplicate data, and omitted data, which are similar to bookkeeping errors.
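The error types listed above can be illustrated with a few simple checks. The following is only a minimal sketch: the record layout (dictionaries with `id` and `name` fields) and all function names are assumptions made for illustration, not part of any particular data entry system.

```python
from collections import Counter


def is_adjacent_transposition(expected: str, entered: str) -> bool:
    """True if `entered` is `expected` with one pair of adjacent characters swapped."""
    if len(expected) != len(entered) or expected == entered:
        return False
    # Positions where the two strings disagree.
    diffs = [i for i, (a, b) in enumerate(zip(expected, entered)) if a != b]
    return (
        len(diffs) == 2
        and diffs[1] == diffs[0] + 1
        and expected[diffs[0]] == entered[diffs[1]]
        and expected[diffs[1]] == entered[diffs[0]]
    )


def find_duplicates(records, key):
    """Return the values of `key` that appear in more than one record."""
    counts = Counter(r.get(key) for r in records)
    return sorted(k for k, n in counts.items() if n > 1 and k is not None)


def find_omissions(records, required_fields):
    """Return (row index, missing field names) for rows with blank required fields."""
    problems = []
    for i, record in enumerate(records):
        missing = [
            f for f in required_fields
            if record.get(f) is None or not str(record.get(f)).strip()
        ]
        if missing:
            problems.append((i, missing))
    return problems


# Hypothetical sample data.
rows = [
    {"id": "A1", "name": "Ada"},
    {"id": "A2", "name": " "},
    {"id": "A1", "name": "Ada"},
]
print(is_adjacent_transposition("1234", "1324"))
print(find_duplicates(rows, "id"))
print(find_omissions(rows, ["id", "name"]))
```

Misclassified data is not covered here, because detecting it usually requires knowledge of the categories involved.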

Procedures

Data entry is often done with a keyboard and at times also using a mouse, although a manually-fed scanner may be involved.

Historically, devices lacking any pre-processing capabilities were used.

Keypunching

Data entry using keypunches was related to the concept of batch processing – there was no immediate feedback.

Computer keyboards

Computer keyboards and online data-entry provide the ability to give feedback to the data entry clerk doing the work.

Numeric keypads

The addition of numeric keypads to computer keyboards introduced quicker and often also less error-prone entry of numeric data.

Computer mouse

The use of a computer mouse, typically on a personal computer, opened up another option for doing data entry.

Touch screens

Touch screens introduced even more options, including the ability to stand and do data entry, especially given "a proper height of work surface when performing data entry."

Spreadsheets

Although most data entered into a computer are stored in a database, a significant amount is stored in a spreadsheet. The use of spreadsheets instead of databases for data entry can be traced to the 1979 introduction of VisiCalc, and the practice continues even though some consider spreadsheets the wrong place for storing computational data.

Format control and specialized data validation are reasons that have been cited for using database-oriented data entry software.

Data management

The search for assurance about the accuracy of the data entry process predates computer keyboards and online data entry. IBM even went beyond their 056 Card Verifier and developed their quieter IBM 059 model.

Modern techniques go beyond mere range checks, especially when the new data can be evaluated using probability about an event.
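As a rough illustration of the two ideas in this section, the sketch below shows a basic range check and a double-entry comparison, in which the same record is keyed twice and the two versions are compared. The double-entry approach is offered here as one common way to gain assurance about keyed data; the function names, field names, and limits are all hypothetical.

```python
def range_check(value, low, high):
    """True if `value` lies within the inclusive range [low, high]."""
    return low <= value <= high


def double_entry_mismatches(first_pass, second_pass):
    """Return the field names whose values differ between two independent keyings."""
    fields = sorted(set(first_pass) | set(second_pass))
    return [f for f in fields if first_pass.get(f) != second_pass.get(f)]


# Hypothetical example: the second operator transposed the digits of the age.
first = {"age": "42", "zip": "10001"}
second = {"age": "24", "zip": "10001"}

print(range_check(int(first["age"]), 0, 120))
print(double_entry_mismatches(first, second))
```

A range check alone would accept both "42" and "24" as plausible ages, which is why a comparison between two keyings can catch errors that a range check cannot.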

Assessment

In one study, a medical school tested its second year students and found their data entry skills – needed if they are to do small-scale unfunded research as part of their training – were below what the school considered acceptable, creating potential barriers.

Additional Information

Data Entry Operator responsibilities include:

* Entering customer and account data from source documents within time limits

* Compiling, verifying accuracy and sorting information to prepare source data for computer entry

* Reviewing data for deficiencies or errors, correcting any incompatibilities and checking output

* Research and obtain further information for incomplete documents

* Apply data program techniques and procedures

* Generate reports, store completed work in designated locations and perform backup operations

* Scan documents and print files, when needed

* Keep information confidential

* Respond to queries for information and access relevant files

* Comply with data integrity and security policies

* Ensure proper use of office equipment and address any malfunctions

Requirements and skills

* Proven data entry work experience, as a Data Entry Operator or Office Clerk

* Experience with MS Office and data programs

* Familiarity with administrative duties

* Experience using office equipment, like fax machine and scanner

* Typing speed and accuracy

* Excellent knowledge of correct spelling, grammar and punctuation

* Attention to detail

* Confidentiality

* Organization skills, with an ability to stay focused on assigned tasks

* High school diploma; additional computer training or certification will be an asset

Frequently asked questions

What does a Data Entry Operator do?

A Data Entry Operator compiles and verifies data to ensure accuracy while appropriately formatting it. This includes preparing documents for entry and transcribing from paper formats into computer files using manual entry or scanners.

What are the duties and responsibilities of a Data Entry Operator?

The duties of a Data Entry Operator include coding information, troubleshooting processing errors and achieving an organization's goals by completing the necessary tasks. They are also responsible for complying with data integrity and security policies, printing and scanning files and generating reports.

What makes a good Data Entry Operator?

A good Data Entry Operator requires strong attention to detail and excellent written and verbal communication skills. They should also have the ability to perform repetitive tasks with a high degree of accuracy in an ever-changing working environment.

Who does a Data Entry Operator work with?

A Data Entry Operator typically works alongside a team of fellow operators in an office setting. In addition, they work closely with various administrative persons such as an Office Clerk.

#1753 2023-04-27 18:53:34

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 53,741

Re: Miscellany

1756) Hallucination

Summary

A hallucination is a perception in the absence of an external stimulus that has the qualities of a real perception. Hallucinations are vivid, substantial, and are perceived to be located in external objective space. Hallucination is a combination of two conscious states of the brain: wakefulness and REM (Rapid Eye Movement) sleep. Hallucinations are distinguishable from several related phenomena, such as dreaming (REM sleep), which does not involve wakefulness; pseudohallucination, which does not mimic real perception, and is accurately perceived as unreal; illusion, which involves distorted or misinterpreted real perception; and mental imagery, which does not mimic real perception, and is under voluntary control. Hallucinations also differ from "delusional perceptions", in which a correctly sensed and interpreted stimulus (i.e., a real perception) is given some additional significance. Many hallucinations also happen during sleep paralysis.

Hallucinations can occur in any sensory modality—visual, auditory, olfactory, gustatory, tactile, proprioceptive, equilibrioceptive, nociceptive, thermoceptive and chronoceptive. Hallucinations are referred to as multimodal if multiple sensory modalities occur.

A mild form of hallucination is known as a disturbance, and can occur in most of the senses above. These may be things like seeing movement in peripheral vision, or hearing faint noises or voices. Auditory hallucinations are very common in schizophrenia. They may be benevolent (telling the subject good things about themselves) or malicious, cursing the subject. 55% of auditory hallucinations are malicious in content, for example, people talking about the subject, not speaking to them directly. Like auditory hallucinations, the source of the visual counterpart can also be behind the subject. This can produce a feeling of being looked or stared at, usually with malicious intent. Frequently, auditory hallucinations and their visual counterpart are experienced by the subject together.

Hypnagogic hallucinations and hypnopompic hallucinations are considered normal phenomena. Hypnagogic hallucinations can occur as one is falling asleep and hypnopompic hallucinations occur when one is waking up. Hallucinations can be associated with drug use (particularly deliriants), sleep deprivation, psychosis, neurological disorders, and delirium tremens.

The word "hallucination" itself was introduced into the English language by the 17th-century physician Sir Thomas Browne in 1646 from the derivation of the Latin word alucinari meaning to wander in the mind. For Browne, hallucination means a sort of vision that is "depraved and receive its objects erroneously".

Details

What Are Hallucinations?

If you're like most folks, you probably think hallucinations have to do with seeing things that aren't really there. But there's a lot more to it than that. It could mean you touch or even smell something that doesn't exist.

There are many different causes. It could be a mental illness called schizophrenia, a nervous system problem like Parkinson's disease, epilepsy, or any of a number of other things.

If you or a loved one has hallucinations, go see a doctor. You can get treatments that help control them, but a lot depends on what's behind the trouble. There are a few different types.

Common Causes of Hallucinations

Hallucinations most often result from:

* Schizophrenia. More than 70% of people with this illness get visual hallucinations, and 60%-90% hear voices. But some may also smell and taste things that aren't there.

* Parkinson's disease. Up to half of people who have this condition sometimes see things that aren't there.

* Alzheimer's disease and other forms of dementia, especially Lewy body dementia. They cause changes in the brain that can bring on hallucinations. It may be more likely to happen when your disease is advanced.

* Migraines. About a third of people with this kind of headache also have an "aura," a type of visual hallucination. It can look like a multicolored crescent of light.

* Brain tumor. Depending on where it is, it can cause different types of hallucinations. If it's in an area that has to do with vision, you may see things that aren't real. You might also see spots or shapes of light. Tumors in some parts of the brain can cause hallucinations of smell and taste.

* Charles Bonnet syndrome. This condition causes people with vision problems like macular degeneration, glaucoma, or cataracts to see things. At first, you may not realize it's a hallucination, but eventually, you figure out that what you're seeing isn't real.

* Epilepsy. The seizures that go along with this disorder can make you more likely to have hallucinations. The type you get depends on which part of your brain the seizure affects.

Hearing Things (Auditory Hallucinations)

You may sense that the sounds are coming from inside or outside your mind. You might hear the voices talking to each other or feel like they're telling you to do something. Causes could include:

* Schizophrenia

* Bipolar disorder

* Psychosis

* Borderline personality disorder

* Posttraumatic stress disorder

* Hearing loss

* Sleep disorders

* Brain lesions

* Drug use

Seeing Things (Visual Hallucinations)

For example, you might:

* See things others don’t, like insects crawling on your hand or on the face of someone you know

* See objects with the wrong shape or see things moving in ways they usually don’t

* See flashes of light. A rare type of seizure called "occipital" may cause you to see brightly colored spots or shapes.

Other causes include:

* Irritation in the visual cortex, the part of your brain that helps you see

* Damage to brain tissue (the doctor will call these lesions)

* Schizophrenia

* Schizoaffective disorder

* Depression

* Bipolar disorder

* Delirium (from infections, drug use and withdrawal, or body and brain problems)

* Dementia

* Parkinson’s disease

* Seizures

* Migraines

* Brain lesions and tumors

* Sleep problems

* Drugs that make you hallucinate

* Metabolism problems

* Creutzfeldt-Jakob disease

Smelling Things (Olfactory Hallucinations)

You may think the odor is coming from something around you, or that it's coming from your own body. Causes can include:

* Head injury

* Cold

* Temporal lobe seizure

* Inflamed sinuses

* Brain tumors

* Parkinson’s disease

Tasting Things (Gustatory Hallucinations)

You may feel that something you eat or drink has an odd taste. Causes can include:

* Temporal lobe disease

* Brain lesions

* Sinus diseases

* Epilepsy

Feeling Things (Tactile or Somatic Hallucinations)

You might think you're being tickled even when no one else is around, or you may feel like insects are crawling on or under your skin. You could feel a blast of hot air on your face that isn't real. Causes include:

* Schizophrenia

* Schizoaffective disorder

* Drugs that make you hallucinate

* Delirium tremens

* Alcohol

* Alzheimer's disease

* Lewy body dementia

* Parkinson's disease

Diagnosis and Treatment of Hallucinations

First, your doctor needs to find out what's causing your hallucinations. They'll ask about your medical history and do a physical exam. Then they'll ask about your symptoms.

They may need to do tests to help figure out the problem. For instance, an EEG, or electroencephalogram, checks for unusual patterns of electrical activity in your brain. It could show if your hallucinations are due to seizures.

You might get an MRI, or magnetic resonance imaging, which uses powerful magnets and radio waves to make pictures of the inside of your body. It can find out if a brain tumor or something else, like an area that's had a small stroke, could be to blame.

Your doctor will treat the condition that's causing the hallucinations. This can include things like:

* Medication for schizophrenia or dementias like Alzheimer's disease

* Antiseizure drugs to treat epilepsy

* Treatment for macular degeneration, glaucoma, and cataracts

* Surgery or radiation to treat tumors

* Drugs called triptans, beta-blockers, or anticonvulsants for people with migraines

Your doctor may prescribe pimavanserin (Nuplazid). This medicine treats hallucinations and delusions linked to psychosis that affect some people with Parkinson’s disease.

Sessions with a therapist can also help. For example, cognitive behavioral therapy, which focuses on changes in thinking and behavior, helps some people manage their symptoms better.

Additional Information

Hallucination is the experience of perceiving objects or events that do not have an external source, such as hearing one’s name called by a voice that no one else seems to hear. A hallucination is distinguished from an illusion, which is a misinterpretation of an actual stimulus.

A historical survey of the study of hallucinations reflects the development of scientific thought in psychiatry, psychology, and neurobiology. By 1838 the significant relationship between the content of dreams and of hallucinations had been pointed out. In the 1840s the occurrence of hallucinations under a wide variety of conditions (including psychological and physical stress) as well as their genesis through the effects of such drugs as stramonium and hashish had been described.

French physician Alexandre-Jacques-François Brierre de Boismont in 1845 described many instances of hallucinations associated with intense concentration, or with musing, or simply occurring in the course of psychiatric disorder. In the last half of the 19th century, studies of hallucinations continued. Investigators in France were particularly oriented toward abnormal psychological function, and from this came descriptions of hallucinosis during sleepwalking and related reactions. In the 1880s English neurologist John Hughlings Jackson described hallucination as being released or triggered by the nervous system.

Other definitions of the term emerged later. Swiss psychiatrist Eugen Bleuler (1857–1939) defined hallucinations as “perceptions without corresponding stimuli from without,” while the Psychiatric Dictionary in 1940 referred to hallucination as the “apparent perception of an external object when no such object is present.” A spirited interest in hallucinations continued well into the 20th century. Sigmund Freud’s concepts of conscious and unconscious activities added new significance to the content of dreams and hallucinations. It was theorized that infants normally hallucinate the objects and processes that give them gratification. Although the notion has since been disputed, this “regression” hypothesis (i.e., that hallucinating is a regression, or return, to infantile ways) is still employed, especially by those who find it clinically useful. During the same period, others put forth theories that were more broadly biological than Freud’s but that had more points in common with Freud than with each other.

#1754 2023-04-28 02:41:07

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 53,741

Re: Miscellany

1757) Balance of Payments

Summary

In international economics, the balance of payments (also known as balance of international payments and abbreviated BOP or BoP) of a country is the difference between all money flowing into the country in a particular period of time (e.g., a quarter or a year) and the outflow of money to the rest of the world. These financial transactions are made by individuals, firms and government bodies to compare receipts and payments arising out of trade of goods and services.

The balance of payments consists of two components: the current account and the capital account. The current account reflects a country's net income, while the capital account reflects the net change in ownership of national assets.

Details

The balance of payments (BOP) is the method countries use to monitor all international monetary transactions over a specific period. Usually, the BOP is calculated every quarter and every calendar year.

All trades conducted by both the private and public sectors are accounted for in the BOP to determine how much money is going in and out of a country. If a country has received money, this is known as a credit, and if a country has paid or given money, the transaction is counted as a debit.

Theoretically, the BOP should be zero, meaning that assets (credits) and liabilities (debits) should balance, but in practice, this is rarely the case. Thus, the BOP can tell the observer if a country has a deficit or a surplus and from which part of the economy the discrepancies are stemming.

* The balance of payments (BOP) is the record of all international financial transactions made by the residents of a country.

* There are three main categories of the BOP: the current account, the capital account, and the financial account.

* The current account is used to mark the inflow and outflow of goods and services into a country.

* The capital account is where all international capital transfers are recorded.

* In the financial account, international monetary flows related to investment in business, real estate, bonds, and stocks are documented.

* The current account should be balanced versus the combined capital and financial accounts, leaving the BOP at zero, but this rarely occurs.

The Balance of Payments Divided

The BOP is divided into three main categories: the current account, the capital account, and the financial account. Within these three categories are sub-divisions, each of which accounts for a different type of international monetary transaction.

The Current Account

The current account is used to mark the inflow and outflow of goods and services into a country. Earnings on investments, both public and private, are also put into the current account.

Within the current account are credits and debits on the trade of merchandise, which includes goods such as raw materials and manufactured goods that are bought, sold, or given away (possibly in the form of aid). Services refer to receipts from tourism, transportation (like the levy that must be paid in Egypt when a ship passes through the Suez Canal), engineering, business service fees (from lawyers or management consulting, for example), and royalties from patents and copyrights.

When combined, goods and services together make up a country's balance of trade (BOT). The BOT is typically the largest part of a country's balance of payments, as it covers total imports and exports. If a country has a balance of trade deficit, it imports more than it exports, and if it has a balance of trade surplus, it exports more than it imports.
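The deficit and surplus rule in the paragraph above amounts to a single subtraction. The sketch below is illustrative only: the function names and the figures (in billions of an unspecified currency) are hypothetical.

```python
def balance_of_trade(exports: float, imports: float) -> float:
    """Balance of trade: total exports minus total imports of goods and services."""
    return exports - imports


def trade_position(balance: float) -> str:
    """Classify a balance of trade as a surplus, a deficit, or balanced."""
    if balance > 0:
        return "surplus"
    if balance < 0:
        return "deficit"
    return "balanced"


# Hypothetical figures: the country exports 120 and imports 150.
bot = balance_of_trade(exports=120.0, imports=150.0)
print(bot, trade_position(bot))
```

With these numbers the country imports more than it exports, so the result is negative and is classified as a deficit.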

Receipts from income-generating assets such as stocks (in the form of dividends) are also recorded in the current account. The last component of the current account is unilateral transfers. These are credits that are mostly workers' remittances, which are salaries sent back into the home country of a national working abroad, as well as foreign aid that is directly received.

The Capital Account

The capital account is where all international capital transfers are recorded. This refers to the acquisition or disposal of non-financial assets (for example, a physical asset such as land) and non-produced assets, which are needed for production but have not been produced, like a mine used for the extraction of diamonds.

The capital account is broken down into the monetary flows branching from debt forgiveness, the transfer of goods and financial assets by migrants leaving or entering a country, the transfer of ownership on fixed assets (assets such as equipment used in the production process to generate income), the transfer of funds received from the sale or acquisition of fixed assets, gift and inheritance taxes, death levies and, finally, uninsured damage to fixed assets.

The Financial Account

In the financial account, international monetary flows related to investment in business, real estate, bonds, and stocks are documented. Also included are government-owned assets, such as foreign reserves, gold, special drawing rights (SDRs) held with the International Monetary Fund (IMF), private assets held abroad, and direct foreign investment. Assets owned by foreigners, private and official, are also recorded in the financial account.

The Balancing Act

The current account should be balanced against the combined capital and financial accounts; however, as mentioned above, this rarely happens. We should also note that, with fluctuating exchange rates, the change in the value of money can add to BOP discrepancies.

If a country has a fixed asset abroad, this borrowed amount is marked as a capital account outflow. However, the sale of that fixed asset would be considered a current account inflow (earnings from investments). The current account deficit would thus be funded.

When a country has a current account deficit that is financed by the capital account, the country is actually forgoing capital assets for more goods and services. If a country is borrowing money to fund its current account deficit, this would appear as an inflow of foreign capital in the BOP.
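The balancing relationship described in this section can be sketched numerically. The example assumes a simple sign convention in which inflows (credits) are positive and outflows (debits) are negative, so the three accounts should sum to zero. The function name and all figures are hypothetical, and official statistics may use different conventions.

```python
def bop_discrepancy(current: float, capital: float, financial: float) -> float:
    """Residual left when the three accounts are summed; zero means they balance."""
    return current + capital + financial


# Hypothetical figures in billions: a current account deficit of 50 that is
# mostly, but not fully, offset by capital and financial account inflows.
accounts = {"current": -50.0, "capital": 2.0, "financial": 46.5}

residual = bop_discrepancy(**accounts)
print(f"Unexplained discrepancy: {residual:+.1f} billion")
```

Here the capital and financial inflows cover 48.5 of the 50 deficit, which leaves a residual of the kind the text says arises in practice.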

Liberalizing the Accounts

The rise of global financial transactions and trade in the late 20th century spurred BOP and macroeconomic liberalization in many developing nations. With the advent of the emerging market economic boom, developing countries were urged to lift restrictions on capital- and financial-account transactions to take advantage of these capital inflows.

Some economists believe that the liberalization of BOP restrictions eventually led to financial crises in emerging market nations, such as the Asian financial crisis.

Many of these countries had restrictive macroeconomic policies, by which regulations prevented foreign ownership of financial and non-financial assets. The regulations also limited the transfer of funds abroad.

With capital and financial account liberalization, capital markets began to grow, not only allowing a more transparent and sophisticated market for investors but also giving rise to foreign direct investment (FDI).

For example, investments in the form of a new power station would bring a country greater exposure to new technologies and efficiency, eventually increasing the nation's overall gross domestic product (GDP) by allowing for greater volumes of production. Liberalization can also facilitate less risk by allowing greater diversification in various markets.

The Bottom Line

The balance of payments (BOP) is the method by which countries measure all of the international monetary transactions within a certain period. The BOP consists of three main accounts: the current account, the capital account, and the financial account. The current account is meant to balance against the sum of the financial and capital account but rarely does.

Globalization in the late 20th century led to BOP liberalization in many emerging market economies. These countries lifted restrictions on BOP accounts to take advantage of the cash flows arriving from foreign, developed nations, which in turn boosted their economies.

Importance of Balance of Payment (BOP)

Balance of Payment is a systematic record of all economic transactions which take place between the residents of a country and the rest of the world.

It is a comprehensive set of accounts that tracks the flow of currency and other monetary assets coming into and going out of a nation. These payments are used for international trade, foreign investments, and other financial activities. The balance of payments is divided into two accounts: the current account (which includes payments for imports, exports, services, and transfers) and the capital account (which includes payments for physical and financial assets). A deficit in one account is matched by a surplus in the other account. The balance of trade is only one part of the overall balance of payments set of accounts.

Importance of Balance of Payment (BOP):

(a) A country’s balance of payments reveals various aspects of its international economic position and presents its international financial position. If the economy needs support in the form of imports, the government can prepare suitable policies to channel the imported funds and technology to the critical sectors of the economy that could otherwise constrain potential growth.

(b) It helps the government in taking decisions on monetary and fiscal policies on the one hand, and on external trade and payments issues on the other. It breaks down the business transactions of an economy into exports and imports of goods and services for a given financial year. From this, the government can recognize the areas that have the potential for export-oriented growth and can prepare policies supporting those domestic industries.

(c) In the case of a developing country, the balance of payments shows the extent to which the country’s economic development depends on financial assistance from developed countries. The government can also use the indications from the balance of payments to discern the state of the economy and formulate its inflation-control, monetary, and fiscal policies accordingly.

(d) The greatest importance of the balance of payments lies in its serving as an indicator of the changing international economic position of a country. The balance of payments is the economic barometer which can be used to appraise a nation’s short-term international economic prospects, to evaluate the degree of its international solvency, and to determine the appropriateness of the exchange rate of the country’s currency.

(e) However, a country’s favorable balance of payments cannot be taken as an indicator of economic prosperity, nor is an adverse or unfavorable balance of payments a reflection of bankruptcy. The government can adopt some defensive measures, such as higher tariffs and duties on imports, so as to discourage imports of non-essential items and encourage the domestic industries to be self-sufficient.

(f) A balance of payments deficit per se is not proof of the competitive weakness of a nation in foreign markets. However, the longer the balance of payments deficit continues, the more it would imply some fundamental problems in that economy.

(g) A favorable balance of payments should not always make a country complacent. A poor country may have a favorable balance of payments due to a large inflow of foreign loans and equity capital. A developed country may have an adverse balance of payments due to massive assistance given to developing countries.

(h) It does not provide data about assets and liabilities that relate one country to others. However, despite all these shortcomings, the significance of the balance of payments lies in the fact that it provides vital information to understand a country’s economic dealings with other countries.

(i) With the development of national income accounting, the balance of payments account has been used to determine the influence of foreign trade and transactions on the level of national income.

(j) If the country has a flourishing export trade, the government can adopt measures such as the devaluation of its currency to make its goods and services available in the international market at cheaper rates and bolster exports.

It appears to me that if one wants to make progress in mathematics, one should study the masters and not the pupils. - Niels Henrik Abel.

Nothing is better than reading and gaining more and more knowledge - Stephen William Hawking.

#1755 2023-04-28 23:26:43

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 53,741

Re: Miscellany

1758) Neanderthal

Gist

Neanderthal is a species of the human genus (Homo) that inhabited much of Europe, the Mediterranean lands, and Central Asia c. 200,000–24,000 years ago. The name derives from the discovery in 1856 of remains in a cave above Germany’s Neander Valley. Most scholars designate the species as Homo neanderthalensis and do not consider Neanderthals direct ancestors of modern humans (Homo sapiens); however, both species share a common ancestor that lived as recently as 130,000 years ago. Some scholars report evidence of limited interbreeding between Neanderthals and early modern humans of European and Asian stock. Neanderthals were short, stout, and powerful. Their braincases were long, low, and wide, and their cranial capacity equaled or surpassed that of modern humans. Their limbs were heavy, but they seem to have walked fully erect and had hands as capable as those of modern humans. They were cave dwellers who used fire, wielded stone tools and wooden spears to hunt animals, buried their dead, and cared for their sick or injured. They may have used language and may have practiced a primitive form of religion.

Summary

Neanderthals (Homo neanderthalensis or H. sapiens neanderthalensis), also written as Neandertals, are an extinct species or subspecies of archaic humans who lived in Eurasia until about 40,000 years ago. The reasons for Neanderthal extinction are disputed. Theories for their extinction include demographic factors such as small population size and inbreeding, competitive replacement, interbreeding and assimilation with modern humans, climate change, disease or a combination of these factors.

It is unclear when the line of Neanderthals split from that of modern humans; studies have produced various intervals ranging from 315,000 to more than 800,000 years ago. The date of divergence of Neanderthals from their ancestor H. heidelbergensis is also unclear. The oldest potential Neanderthal bones date to 430,000 years ago, but the classification remains uncertain. Neanderthals are known from numerous fossils, especially from after 130,000 years ago. The type specimen, Neanderthal 1, was found in 1856 in the Neander Valley in present-day Germany. For much of the early 20th century, European researchers depicted Neanderthals as primitive, unintelligent, and brutish. Although knowledge and perception of them has markedly changed since then in the scientific community, the image of the unevolved caveman archetype remains prevalent in popular culture.

Neanderthal technology was quite sophisticated. It includes the Mousterian stone-tool industry and ability to create fire and build cave hearths, make adhesive birch bark tar, craft at least simple clothes similar to blankets and ponchos, weave, go seafaring through the Mediterranean, and make use of medicinal plants, as well as treat severe injuries, store food, and use various cooking techniques such as roasting, boiling, and smoking. Neanderthals made use of a wide array of food, mainly hoofed mammals, but also other megafauna, plants, small mammals, birds, and aquatic and marine resources. Although they were probably apex predators, they still competed with cave bears, cave lions, cave hyenas, and other large predators. A number of examples of symbolic thought and Palaeolithic art have been inconclusively attributed to Neanderthals, namely possible ornaments made from bird claws and feathers or shells, collections of unusual objects including crystals and fossils, engravings, music production (possibly indicated by the Divje Babe flute), and Spanish cave paintings contentiously dated to before 65,000 years ago. Some claims of religious beliefs have been made. Neanderthals were likely capable of speech, possibly articulate, although the complexity of their language is not known.

Compared with modern humans, Neanderthals had a more robust build and proportionally shorter limbs. Researchers often explain these features as adaptations to conserve heat in a cold climate, but they may also have been adaptations for sprinting in the warmer, forested landscape that Neanderthals often inhabited. Nonetheless, they had cold-specific adaptations, such as specialised body-fat storage and an enlarged nose to warm air (although the nose could have been caused by genetic drift). Average Neanderthal men stood around 165 cm (5 ft 5 in) and women 153 cm (5 ft 0 in) tall, similar to pre-industrial modern humans. The braincases of Neanderthal men and women averaged about 1,600 cm³ (98 cu in) and 1,300 cm³ (79 cu in) respectively, which is considerably larger than the modern human average. The Neanderthal skull was more elongated and the brain had smaller parietal lobes and cerebellum, but larger temporal, occipital, and orbitofrontal regions.

The total population of Neanderthals remained low, proliferating weakly harmful gene variants and precluding effective long-distance networks. Despite this, there is evidence of regional cultures and thus of regular communication between communities. They may have frequented caves and moved between them seasonally. Neanderthals lived in a high-stress environment with high trauma rates, and about 80% died before the age of 40. The 2010 Neanderthal genome project's draft report presented evidence for interbreeding between Neanderthals and modern humans. It possibly occurred 316,000 to 219,000 years ago, but more likely 100,000 years ago and again 65,000 years ago. Neanderthals also appear to have interbred with Denisovans, a different group of archaic humans, in Siberia. Around 1–4% of genomes of Eurasians, Indigenous Australians, Melanesians, Native Americans, and North Africans is of Neanderthal ancestry, while the inhabitants of sub-Saharan Africa have only 0.3% of Neanderthal genes, save possible traces from early sapiens-to-Neanderthal gene flow and/or more recent back-migration of Eurasians to Africa. In all, about 20% of distinctly Neanderthal gene variants survive today. Although many of the gene variants inherited from Neanderthals may have been detrimental and selected out, Neanderthal introgression appears to have affected the modern human immune system, and is also implicated in several other biological functions and structures, but a large portion appears to be non-coding DNA.

Details

Neanderthal (Homo neanderthalensis, Homo sapiens neanderthalensis), also spelled Neandertal, is a member of a group of archaic humans who emerged at least 200,000 years ago during the Pleistocene Epoch (about 2.6 million to 11,700 years ago) and were replaced or assimilated by early modern human populations (Homo sapiens) between 35,000 and perhaps 24,000 years ago. Neanderthals inhabited Eurasia from the Atlantic regions of Europe eastward to Central Asia, from as far north as present-day Belgium and as far south as the Mediterranean and southwest Asia. Similar archaic human populations lived at the same time in eastern Asia and in Africa. Because Neanderthals lived in a land of abundant limestone caves, which preserved bones well, and where there has been a long history of prehistoric research, they are better known than any other archaic human group. Consequently, they have become the archetypal “cavemen.” The name Neanderthal (or Neandertal) derives from the Neander Valley (German Neander Thal or Neander Tal) in Germany, where the fossils were first found.

Until the late 20th century, Neanderthals were regarded as genetically, morphologically, and behaviorally distinct from living humans. However, more recent discoveries about this well-preserved fossil Eurasian population have revealed an overlap between living and archaic humans. Neanderthals lived before and during the last ice age of the Pleistocene in some of the most unforgiving environments ever inhabited by humans. They developed a successful culture, with a complex stone tool technology, that was based on hunting, with some scavenging and local plant collection. Their survival during tens of thousands of years of the last glaciation is a remarkable testament to human adaptation.

First discoveries

The first human fossil assemblage described as Neanderthal was discovered in 1856 in the Feldhofer Cave of the Neander Valley, near Düsseldorf, Germany. The fossils, discovered by lime workers at a quarry, consisted of a robust cranial vault with a massive arched brow ridge, minus the facial skeleton, and several limb bones. The limb bones were robustly built, with large articular surfaces on the ends (that is, surfaces at joints that are typically covered with cartilage) and bone shafts that were bowed front to back. The remains of large extinct mammals and crude stone tools were discovered in the same context as the human fossils. Upon first examination, the fossils were deemed by anatomists as representing the oldest known human beings to inhabit Europe. Others disagreed and labeled the fossils H. neanderthalensis, a species distinct from H. sapiens. Some anatomists suggested that the bones were those of modern humans and that the unusual form was the result of pathology. This flurry of scientific debate coincided with the publication of On the Origin of Species (1859) by Charles Darwin, which provided a theoretical foundation upon which fossils could be viewed as a direct record of life over geologic time. When two fossil skeletons that resembled the original Feldhofer remains were discovered at Spy, Belgium, in 1886, the pathology explanation for the curious morphology of the bones was abandoned.

During the latter part of the 19th century and the early 20th century, additional fossils that resembled the Neanderthals from the Feldhofer and Spy caves were discovered, including those now in Belgium (Naulette), Croatia (Krapina), France (Le Moustier, La Quina, La Chapelle-aux-Saints and Pech de L’Azé), Italy (Guattari and Archi), Hungary (Subalyuk), Israel (Tabūn), the Czech Republic (Ochoz, Kůlna, and Šipka), the Russian Caucasus (Mezmaiskaya), Uzbekistan (Teshik-Tash), and Iraq (Shanidar). More recently, Neanderthals were discovered in the Netherlands (North Sea coast), Greece (Lakonis and Kalamakia), Syria (Dederiyeh), Spain (El Sidrón), and Russian Siberia (Okladnikov) and at additional sites in France (Saint Césaire, L’Hortus, and Roc de Marsal, near Les Eyzies-de-Tayac), Israel (Amud and Kebara), and Belgium (Scladina and Walou). Well over 200 individuals are represented, including over 70 juveniles. These sites range from nearly 200,000 years ago or earlier to 36,000 years before present, and some groups may have survived in the southern Iberian Peninsula until nearly 30,000–35,000 years ago or even possibly 28,000–24,000 years ago in Gibraltar. Most of the sites, however, are dated to approximately 120,000 to 35,000 years ago. The complete disappearance of the Neanderthals corresponds to, or precedes, the most recent glacial maximum (a time period of intense cold spells and frequent fluctuations in temperature beginning around 29,000 years ago or earlier) and the increasing presence and density in Eurasia of early modern human populations, and possibly their hunting dogs, beginning as early as 40,000 years ago.

Presumed ancestors of the Neanderthals were discovered at Sima de los Huesos (“Pit of the Bones”), at the Atapuerca site in Spain, dated to about 430,000 years ago, which yielded an impressive number of remains of all life stages. Sometimes these remains are attributed to H. heidelbergensis or archaic H. sapiens if one accepts Neanderthals as H. sapiens neanderthalensis—in other words, as a subspecies of modern humans. Presumed descendants of Neanderthals include a “love child” with both Neanderthal and modern human physical features from Portugal (Lagar Velho), dated to about 24,500 years ago.

What happened to the Neanderthals is one of the most-enduring questions in science, and it has been addressed in the early 21st century by applying genetic techniques to compare DNA from hundreds of living humans and ancient DNA recovered from Neanderthal fossils. The earliest genetic studies of Neanderthal mitochondrial DNA supported the idea that the origin of modern humans was a speciation event. More recently, however, it was reported that Eurasians generally carry about 2 percent Neanderthal nuclear DNA, which suggests that modern humans and Neanderthals interbred and thus were not two different biological species, despite most classifications treating them as such. It was previously argued on the basis of morphology that modern humans are distinct from Neanderthals, although the question of “how different is different” has always plagued debates on the apparent uniqueness of this fossil human group. When single specimens of Neanderthals and modern humans are compared, Neanderthals can easily be distinguished. When a broad range of individuals are examined, however, the variation observed fails to isolate Neanderthals as a group that is completely distinct from modern humans for every trait. Like Neanderthal fossils, early modern human fossils are robust in physical form, although they tend to differ from Neanderthal fossils in that they have a more juvenilized but large cranial vault coupled with a smaller face and a distinct mental trigone (chin).

Morphological traits:

Craniofacial features

Although Neanderthals possessed much in common physically with early modern humans, the constellation of Neanderthal features is unique, with much variation among individuals as far as craniofacial (head and facial) characteristics are concerned. Features of the cranium and lower jaw that were present more often in Neanderthals than in early and recent modern humans include a low-vaulted cranium, large orbital and nasal openings, and prominent arched brow ridges. A pronounced occipital region (the rear and base of the skull) served to anchor the large neck musculature. The cranial capacity of Neanderthals was similar to or larger than that of recent humans. The front teeth were larger than those in modern humans, but the molars and premolars were of a similar size. The lower jaw displayed a receding chin and was robustly built. The mental foramen, a small hole in the skull that allows nerves to reach the lower jaw, was placed farther back in Neanderthals than in recent humans, and a space between the last molar and the ascending edge of the lower jaw occurred in many individuals. There was also apparently less lumbar lordosis (back curvature) in Neanderthals and their predecessors from Sima de los Huesos than in modern humans.

Body proportions and cold stress

Neanderthals were a cold-adapted people. As with their facial features, Neanderthals’ body proportions were variable. However, in general, they possessed relatively short lower limb extremities, compared with their upper arms and legs, and a broad chest. Their arms and legs must have been massive and heavily muscled. This body build would have protected the extremities against damage from cold stress. Voluminous pulp cavities, or taurodontism, in the teeth may also have been an adaptation to cold temperatures or perhaps arose from genetic isolation. Cold stress may have delayed maturation in Neanderthal children, although earlier weaning and dental development have also been suggested from studies of teeth.

Other adaptations

Until the early 2000s, it was widely thought that Neanderthals lacked the capacity for complex communication, such as spoken language. Supporting that hypothesis was the fact that the flattened cranial base of Neanderthals—similar to that of modern infants prior to two years old—did not provide sufficient space for the production of vowels, which are used in all spoken languages of modern humans. However, studies beginning in the late 1980s of the hypoglossal canal (one of two small openings in the lower part of the skull) and a hyoid (the bone located between the base of the tongue and the larynx) from the paleoanthropological site at Kebara, Israel, suggested that the Neanderthal vocal tract could have been similar to that found in modern humans. Moreover, genetic studies in the early 2000s involving the Neanderthal FOXP2 gene (a gene thought to allow for the capacity for speech and language) indicated that Neanderthals probably used language in the same way that modern humans have. Such a deduction had also been extrapolated from interpretations of the complex behaviours of Neanderthals—such as the development of an advanced stone tool technology, the burial of the dead, and the care of injured social group members. It is not known, however, whether Neanderthals were capable of the full range of phonemes, or sound tones, that characterize the languages of modern humans. Handedness, which was inferred from dental wear resulting from items held in the mouth for processing, occurred among Neanderthals at a rate similar to that in modern humans and suggests a lateralization (functional separation) of the brain that is fundamental to language.

#1756 2023-04-29 23:28:02

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 53,741

Re: Miscellany

1759) Cro-Magnons

Gist

Cro-Magnons were the first humans (genus Homo) to have a prominent chin. The brain capacity was about 1,600 cc (100 cubic inches), somewhat larger than the average for modern humans. It is thought that Cro-Magnons were probably fairly tall compared with other early human species.

Summary

Early European modern humans (EEMH), or Cro-Magnons, were the first early modern humans (Homo sapiens) to settle in Europe, migrating from Western Asia, continuously occupying the continent possibly from as early as 56,800 years ago. They interacted and interbred with the indigenous Neanderthals (H. neanderthalensis) of Europe and Western Asia, who went extinct 40,000 to 35,000 years ago. The first wave of modern humans in Europe, from 45,000 to 40,000 years ago (Initial Upper Paleolithic), left no genetic legacy to modern Europeans, but from 37,000 years ago a second wave succeeded in forming a single founder population, from which all EEMH descended and which contributes ancestry to present-day Europeans. EEMH produced Upper Palaeolithic cultures, the first major one being the Aurignacian, which was succeeded by the Gravettian by 30,000 years ago. The Gravettian split into the Epi-Gravettian in the east and Solutrean in the west, due to major climate degradation during the Last Glacial Maximum (LGM), peaking 21,000 years ago. As Europe warmed, the Solutrean evolved into the Magdalenian by 20,000 years ago, and these peoples recolonised Europe. The Magdalenian and Epi-Gravettian gave way to Mesolithic cultures as big game animals were dying out and the Last Glacial Period drew to a close.

EEMH were anatomically similar to present-day Europeans, but were more robust, having larger brains, broader faces, more prominent brow ridges, and bigger teeth. The earliest EEMH specimens also exhibit some features that are reminiscent of those found in Neanderthals. The first EEMH would have had darker skin; natural selection for lighter skin would not begin until 30,000 years ago. Before the LGM (Last Glacial Maximum), EEMH had overall low population density, tall stature similar to post-industrial humans, and expansive trade routes stretching as long as 900 km (560 mi), and hunted big game animals. EEMH had much higher populations than the Neanderthals, possibly due to higher fertility rates; life expectancy for both species was typically under 40 years. Following the LGM, population density increased as communities travelled less frequently (though for longer distances), and the need to feed so many more people in tandem with the increasing scarcity of big game caused them to rely more heavily on small or aquatic game, and more frequently participate in game drive systems and slaughter whole herds at a time. The EEMH arsenal included spears, spear-throwers, harpoons, and possibly throwing sticks and Palaeolithic dogs. EEMH likely commonly constructed temporary huts while moving around, and Gravettian peoples notably made large huts on the East European Plain out of mammoth bones.

EEMH are well renowned for creating a diverse array of artistic works, including cave paintings, Venus figurines, perforated batons, animal figurines, and geometric patterns. They may have decorated their bodies with ochre crayons and perhaps tattoos, scarification, and piercings. The exact symbolism of these works remains enigmatic, but EEMH are generally (though not universally) thought to have practiced shamanism, in which cave art — specifically of those depicting human/animal hybrids — played a central part. They also wore decorative beads, and plant-fibre clothes dyed with various plant-based dyes, which were possibly used as status symbols. For music, they produced bone flutes and whistles, and possibly also bullroarers, rasps, drums, idiophones, and other instruments. They buried their dead, though possibly only people who had achieved or were born into high status received burial.

Remains of Palaeolithic cultures have been known for centuries, but they were initially interpreted in a creationist model, wherein they represented antediluvian peoples who were wiped out by the Great Flood. Following the conception and popularisation of evolution in the mid-to-late 19th century, EEMH became the subject of much scientific racism, with early race theories allying with Nordicism and Pan-Germanism. Such historical race concepts were overturned by the mid-20th century. During the first-wave feminist movement, the Venus figurines were notably interpreted as evidence of some matriarchal religion, though such claims had mostly died down in academia by the 1970s.

Details

Cro-Magnon is a population of early Homo sapiens dating from the Upper Paleolithic Period (c. 40,000 to c. 10,000 years ago) in Europe.

In 1868, in a shallow cave at Cro-Magnon near the town of Les Eyzies-de-Tayac in the Dordogne region of southwestern France, a number of obviously ancient human skeletons were found. The cave was investigated by the French geologist Louis Lartet, who uncovered five archaeological layers. The human bones found in the topmost layer proved to be between 10,000 and 35,000 years old. The prehistoric humans revealed by this find were called Cro-Magnon and have since been considered, along with Neanderthals (H. neanderthalensis), to be representative of prehistoric humans. Modern studies suggest that Cro-Magnons emerged even earlier, perhaps as early as 45,000 years ago.

Cro-Magnons were robustly built and powerful and are presumed to have been about 166 to 171 cm (about 5 feet 5 inches to 5 feet 7 inches) tall. The body was generally heavy and solid, apparently with strong musculature. The forehead was straight, with slight browridges, and the face short and wide. Cro-Magnons were the first humans (genus Homo) to have a prominent chin. The brain capacity was about 1,600 cc (100 cubic inches), somewhat larger than the average for modern humans. It is thought that Cro-Magnons were probably fairly tall compared with other early human species.

It is still hard to say precisely where Cro-Magnons belong in recent human evolution, but they had a culture that produced a variety of sophisticated tools such as retouched blades, end scrapers, “nosed” scrapers, the chisel-like tool known as a burin, and fine bone tools (see Aurignacian culture). They also seem to have made tools for smoothing and scraping leather. Some Cro-Magnons have been associated with the Gravettian industry, or Upper Perigordian industry, which is characterized by an abrupt retouching technique that produces tools with flat backs. Cro-Magnon dwellings are most often found in deep caves and in shallow caves formed by rock overhangs, although primitive huts, either lean-tos against rock walls or those built completely from stones, have been found. The rock shelters were used year-round; the Cro-Magnons seem to have been a settled people, moving only when necessary to find new hunting or because of environmental changes.

Like the Neanderthals, the Cro-Magnon people buried their dead. Some of the first examples of art by prehistoric peoples are Cro-Magnon. The Cro-Magnons carved and sculpted small engravings, reliefs, and statuettes not only of humans but also of animals. Their human figures generally depict large-breasted, wide-hipped, and often obviously pregnant women, from which it is assumed that these figures had significance in fertility rites. Numerous depictions of animals are found in Cro-Magnon cave paintings throughout France and Spain at sites such as Lascaux, Eyzies-de-Tayac, and Altamira, and some of them are surpassingly beautiful. It is thought that these paintings had some magic or ritual importance to the people. From the high quality of their art, it is clear that Cro-Magnons were not primitive amateurs but had previously experimented with artistic mediums and forms. Decorated tools and weapons show that they appreciated art for aesthetic purposes as well as for religious reasons.

It is difficult to determine how long the Cro-Magnons lasted and what happened to them. Presumably they were gradually absorbed into the European populations that came later.

Additional Information

The earliest known Cro-Magnon remains are between 35,000 and 45,000 years old, based on radiometric dating. The oldest remains, from 43,000 – 45,000 years ago, were found in Italy and Britain. Other remains also show that Cro-Magnons reached the Russian Arctic about 40,000 years ago.

Cro-Magnons had powerful bodies, which were usually heavy and solid with strong muscles. Unlike Neanderthals, who had slanted foreheads, the Cro-Magnons had straight foreheads, like modern humans. Their faces were short and wide with a large chin. Their brains were slightly larger than the average human's is today.

Naming

The name "Cro-Magnon" was created by Louis Lartet, who discovered the first Cro-Magnon skull in southwestern France in 1868. He called the place where he found the skull Abri de Cro-Magnon. Abri means "rock shelter" in French; cro means "hole" in the Occitan language; and "Magnon" was the name of the person who owned the land where Lartet found the skull. Basically, Cro-Magnon means "rock shelter in a hole on Magnon's land."

Because the name refers only to the place of discovery, scientists now use the term "European early modern humans" instead of "Cro-Magnons." In taxonomy, the term "Cro-Magnon" has no formal meaning.

Cro-Magnon life

* Used bones, shells, and teeth to make jewelry

* Spun, dyed, and tied knots in flax, to make cords for their tools, make baskets, or sew clothing

Like most early humans, the Cro-Magnons mostly hunted large animals. For example, they killed mammoths, cave bears, horses, and reindeer for food. They hunted with spears, javelins, and spear-throwers. They also ate fruits from plants.

The Cro-Magnons were nomadic or semi-nomadic. This means that instead of living in just one place, they followed the migration of the animals they wanted to hunt. They may have built hunting camps from mammoth bones; some of these camps were found in a village in Ukraine. They also made shelters from rocks, clay, tree branches, and animal skin (leather).

#1757 2023-04-30 23:02:17

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 53,741

Re: Miscellany

1760) Pulmonology

Gist

Pulmonology is a branch of medicine that deals with all diseases of the lungs, airways, and respiratory muscles. These include asthma, bronchitis, interstitial lung disease, COPD, and sleep apnea.

Summary

Pulmonology or pneumonology is a medical specialty that deals with diseases involving the respiratory tract. It is also known as respirology, respiratory medicine, or chest medicine in some countries and areas.

Pulmonology is considered a branch of internal medicine, and is related to intensive care medicine. Pulmonology often involves managing patients who need life support and mechanical ventilation. Pulmonologists are specially trained in diseases and conditions of the chest, particularly pneumonia, asthma, tuberculosis, emphysema, and complicated chest infections.

Pulmonology/respirology departments work especially closely with certain other specialties: cardiothoracic surgery departments and cardiology departments.

History of pulmonology

One of the first major discoveries relevant to the field of pulmonology was the discovery of pulmonary circulation. Originally, it was thought that blood reaching the right side of the heart passed through small 'pores' in the septum into the left side to be oxygenated, as theorized by Galen; however, the discovery of pulmonary circulation disproved this theory, which had been accepted since the 2nd century. Thirteenth-century anatomist and physiologist Ibn Al-Nafis accurately theorized that there was no 'direct' passage between the two sides (ventricles) of the heart. He believed that the blood must pass through the pulmonary artery, through the lungs, and back into the heart to be pumped around the body. This is believed by many to be the first scientific description of pulmonary circulation.

Although pulmonary medicine only began to evolve as a medical specialty in the 1950s, William Welch and William Osler had earlier founded the 'parent' organization of the American Thoracic Society, the National Association for the Study and Prevention of Tuberculosis. The care, treatment, and study of tuberculosis of the lung is recognised as a discipline in its own right, phthisiology. When the specialty did begin to evolve, several discoveries were being made linking the respiratory system and the measurement of arterial blood gases, attracting more and more physicians and researchers to the developing field.

Pulmonology and its relevance in other medical fields

Surgery of the respiratory tract is generally performed by specialists in cardiothoracic surgery (or thoracic surgery), though minor procedures may be performed by pulmonologists. Pulmonology is closely related to critical care medicine when dealing with patients who require mechanical ventilation. As a result, many pulmonologists are certified to practice critical care medicine in addition to pulmonary medicine. There are fellowship programs that allow physicians to become board certified in pulmonary and critical care medicine simultaneously. Interventional pulmonology is a relatively new field within pulmonary medicine that deals with the use of procedures such as bronchoscopy and pleuroscopy to treat several pulmonary diseases. Interventional pulmonology is increasingly recognized as a specific medical specialty.

Details

A pulmonologist is a doctor who diagnoses and treats diseases of the respiratory system -- the lungs and other organs that help you breathe.

For some relatively short-lasting illnesses that affect your lungs, like the flu or pneumonia, you might be able to get all the care you need from your regular doctor. But if your cough, shortness of breath, or other symptoms don't get better, you might need to see a pulmonologist.

What is pulmonology?

Internal medicine is the type of medical care that deals with adult health, and pulmonology is one of its many fields. Pulmonologists focus on the respiratory system and diseases that affect it. The respiratory system includes your:

* Mouth and nose

* Sinuses

* Throat (pharynx)

* Voice box (larynx)

* Windpipe (trachea)

* Bronchial tubes

* Lungs and the structures inside them, such as bronchioles and alveoli

* Diaphragm

What Conditions Do Pulmonologists Treat?

A pulmonologist can treat many kinds of lung problems. These include:

* Asthma, a disease that inflames and narrows your airways and makes it hard to breathe

* Chronic obstructive pulmonary disease (COPD), a group of lung diseases that includes emphysema and chronic bronchitis

* Cystic fibrosis, a disease caused by changes in your genes that makes sticky mucus build up in your lungs

* Emphysema, which damages the air sacs in your lungs

* Interstitial lung disease, a group of conditions that scar and stiffen your lungs

* Lung cancer, a type of cancer that starts in the lungs

* Obstructive sleep apnea, which causes repeated pauses in your breathing while you sleep

* Pulmonary hypertension, or high blood pressure in the arteries of your lungs

* Tuberculosis, a bacterial infection of the lungs

* Bronchiectasis, which damages your airways so they widen and become flabby or scarred

* Bronchitis, which is when your airways are inflamed, with a cough and extra mucus. It can lead to an infection.

* Pneumonia, an infection that makes the air sacs (alveoli) in your lungs inflamed and filled with pus

* COVID-19 pneumonia, which can cause severe breathing problems and respiratory failure

What Kind of Training Do Pulmonologists Have?

A pulmonologist's training starts with a medical school degree. Then, they do an internal medicine residency at a hospital for 3 years to get more experience. After their residency, doctors can get certified in internal medicine by the American Board of Internal Medicine.

That's followed by years of specialized training as a fellow in pulmonary medicine. Finally, they must pass specialty exams to become board-certified in pulmonology. Some doctors get even more training in interventional pulmonology, pulmonary hypertension, and lung transplantation. Others might specialize in younger or older patients.

How Do Pulmonologists Diagnose Lung Diseases?

Pulmonologists use tests to figure out what kind of lung problem you have. They might ask you to get:

* Blood tests. They check levels of oxygen and other things in your blood.

* Bronchoscopy. It uses a thin, flexible tube with a camera on the end to see inside your lungs and airways.

* X-rays. They use low doses of radiation to make images of your lungs and other things in your chest.

* CT scan. It's a powerful X-ray that makes detailed pictures of the inside of your chest.

* Spirometry. This tests how well your lungs work by measuring how hard you can breathe air in and out.

What Kinds of Procedures Do Pulmonologists Do?

Pulmonologists can do special procedures such as:

* Pulmonary hygiene. This clears fluid and mucus from your lungs.

* Airway ablation. This opens blocked air passages or eases difficult breathing.

* Biopsy. This takes tissue samples to diagnose disease.

* Bronchoscopy. This looks inside your lungs and airways to diagnose disease.

Why See a Pulmonologist

You might see a pulmonologist if you have symptoms such as:

* A cough that is severe or that lasts more than 3 weeks

* Chest pain

* Wheezing

* Dizziness

* Trouble breathing

* Severe tiredness

* Asthma that’s hard to control

* Bronchitis or a cold that keeps coming back

#1758 2023-05-01 21:14:49

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 53,741

Re: Miscellany

1761) Passport

Details

A passport is an official government-issued travel document that contains a person's identifying information. A person with a passport can travel to and from foreign countries more easily and access consular assistance. A passport certifies the personal identity and nationality of its holder. It is typical for passports to contain the full name, photograph, place and date of birth, signature, and the expiration date of the passport. While passports are typically issued by national governments, certain subnational governments are authorised to issue passports to citizens residing within their borders.

Many nations issue (or plan to issue) biometric passports that contain an embedded microchip, making them machine-readable and difficult to counterfeit. As of January 2019, there were over 150 jurisdictions issuing e-passports. Previously issued non-biometric machine-readable passports usually remain valid until their respective expiration dates.

A passport holder is normally entitled to enter the country that issued the passport, though some people entitled to a passport may not be full citizens with right of abode (e.g. American nationals or British nationals). A passport does not of itself create any rights in the country being visited or obligate the issuing country in any way, such as providing consular assistance. Some passports attest to the bearer having a status as a diplomat or other official, entitled to rights and privileges such as immunity from arrest or prosecution.

History

One of the earliest known references to paperwork that served in a role similar to that of a passport is found in the Hebrew Bible. Nehemiah 2:7–9, dating from approximately 450 BC, states that Nehemiah, an official serving King Artaxerxes I of Persia, asked permission to travel to Judea; the king granted leave and gave him a letter "to the governors beyond the river" requesting safe passage for him as he traveled through their lands.

The Arthashastra (c. 3rd century BC) mentions passes issued at the rate of one masha per pass to enter and exit the country. Chapter 34 of the Second Book of the Arthashastra concerns the duties of the Mudrādhyakṣa (lit. 'Superintendent of Seals'), who must issue sealed passes before a person could enter or leave the countryside.

Passports were an important part of the Chinese bureaucracy as early as the Western Han (202 BC – 9 AD), if not in the Qin Dynasty. They required such details as age, height, and bodily features. These passports (zhuan) determined a person's ability to move throughout imperial counties and through points of control. Even children needed passports, but those of one year or less who were in their mother's care may not have needed them.

In the medieval Islamic Caliphate, a form of passport was the bara'a, a receipt for taxes paid. Only people who paid their zakah (for Muslims) or jizya (for dhimmis) taxes were permitted to travel to different regions of the Caliphate; thus, the bara'a receipt was a "basic passport."

Etymological sources show that the term "passport" comes from a medieval Italian document that was required in order to pass through harbor customs (Italian "passa porto", to pass the harbor) or through the gate (Italian "passa porte", to pass the gates) of a city wall or a city territory. In medieval Europe, such documents were issued by local authorities to foreign travellers (as opposed to local citizens, as is the modern practice) and generally contained a list of towns and cities the document holder was permitted to enter or pass through. On the whole, documents were not required for travel to sea ports, which were considered open trading points, but documents were required to pass harbor controls and travel inland from sea ports. The transition from private to state control over movement was an essential aspect of the transition from feudalism to capitalism. Communal obligations to provide poor relief were an important source of the desire for controls on movement.

In the 12th century, the Republic of Genoa issued a document called the Bulletta to nationals of the Republic who were traveling to the ports of the emporiums and the ports of the Genoese colonies overseas, as well as to foreigners who entered them.

King Henry V of England is credited with having invented what some consider the first British passport in the modern sense, as a means of helping his subjects prove who they were in foreign lands. The earliest reference to these documents is found in a 1414 Act of Parliament. In 1540, granting travel documents in England became a role of the Privy Council of England, and it was around this time that the term "passport" came into use. In 1794, issuing British passports became the job of the Office of the Secretary of State. The 1548 Imperial Diet of Augsburg required the public to hold imperial documents for travel, at the risk of permanent exile.

In 1791, Louis XVI masqueraded as a valet during his Flight to Varennes, as passports for the nobility typically included a number of persons listed by their function but without further description.

A Pass-Card Treaty of October 18, 1850 among German states standardized information including issuing state, name, status, residence, and description of bearer. Tramping journeymen and jobseekers of all kinds were not to receive pass-cards.

A rapid expansion of railway infrastructure and wealth in Europe beginning in the mid-nineteenth century led to large increases in the volume of international travel and a consequent unique dilution of the passport system for approximately thirty years prior to World War I. The speed of trains, as well as the number of passengers that crossed multiple borders, made enforcement of passport laws difficult. The general reaction was the relaxation of passport requirements. In the later part of the nineteenth century and up to World War I, passports were not required, on the whole, for travel within Europe, and crossing a border was a relatively straightforward procedure. Consequently, comparatively few people held passports.