Math Is Fun Forum

#26 Jokes » Oatmeal Jokes - I » 2026-03-18 00:22:57

- Jai Ganesh

- Replies: 0

Q: What do you get when you cross oatmeal & ducks?

A: Quacker oatmeal!

* * *

Q: How did Reese eat her oatmeal?

A: Witherspoon.

* * *

Q: Why did the boy give a girl an oatmeal cookie?

A: To make a sweet first impression.

* * *

Q: What did the kid say when his mother poured oatmeal on him?

A: How can you be so gruel?

* * *

Q: Why doesn't Jay-Z eat oatmeal?

A: 'Cause he's got 99 problems but fiber ain't one.

* * *

#27 Re: Dark Discussions at Cafe Infinity » crème de la crème » 2026-03-18 00:14:54

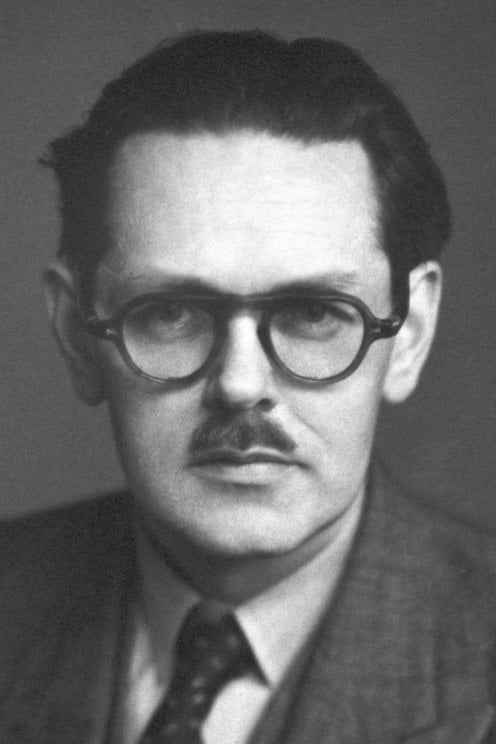

2462) Selman Waksman

Gist:

Work

After Robert Koch discovered that tuberculosis is caused by a bacterium, the hunt for a cure began. In 1939 Selman Waksman and colleagues began systematic studies of how microorganisms in soil affect tubercle bacteria. They found that the growth of tubercle bacteria was impeded by another bacterium, Streptomyces griseus. In 1943 Waksman's colleague, Albert Schatz, isolated streptomycin from this bacterium, and the compound proved an effective medicine against tuberculosis.

Summary

Selman Abraham Waksman (born July 22, 1888, Priluka, Ukraine, Russian Empire [now Pryluky, Ukraine]—died August 16, 1973, Hyannis, Massachusetts, U.S.) was a Ukrainian-born American biochemist who was one of the world’s foremost authorities on soil microbiology. After the discovery of penicillin, he played a major role in initiating a calculated, systematic search for antibiotics among microbes. His screening methods and consequent co-discovery of the antibiotic streptomycin, the first specific agent effective in the treatment of tuberculosis, brought him the 1952 Nobel Prize for Physiology or Medicine.

A naturalized U.S. citizen (1916), Waksman spent most of his career at Rutgers University, New Brunswick, New Jersey, where he served as professor of soil microbiology (1930–40), professor of microbiology and chairman of the department (1940–58), and director of the Rutgers Institute of Microbiology (1949–58). During his extensive study of the actinomycetes (filamentous, bacteria-like microorganisms found in the soil), he extracted from them antibiotics (a term he coined in 1941) valuable for their killing effect not only on gram-positive bacteria, such as the tubercle bacillus (Mycobacterium tuberculosis, which unlike other gram-positive microbes is insensitive to penicillin), but also on gram-negative bacteria, such as the organisms that cause cholera (Vibrio cholerae) and typhoid fever (Salmonella typhi).

In 1940 Waksman, along with his graduate student H. Boyd Woodruff, isolated actinomycin from soil bacteria. Although the substance was effective against strains of gram-negative and gram-positive bacteria, including M. tuberculosis, it was extremely toxic when given to test animals. Four years later Waksman and graduate students Albert Schatz and Elizabeth Bugie published a paper describing their discovery of the relatively nontoxic streptomycin, which they extracted from the actinomycete Streptomyces griseus. They found that the antibiotic exercised repressive influence on tuberculosis. In combination with other chemotherapeutic agents, streptomycin has become a major factor in controlling the disease. Waksman also isolated and developed several other antibiotics, including neomycin, that have been used in treating many infectious diseases of humans, domestic animals, and plants.

Among Waksman’s books are Principles of Soil Microbiology (1927), regarded as one of the most exhaustive works on the subject, and My Life with the Microbes (1954), an autobiography.

Details

Selman Abraham Waksman (July 22, 1888 – August 16, 1973) was a Russian-born American inventor, biochemist and microbiologist, whose research into the decomposition of organisms that live in soil enabled the discovery of streptomycin and several other antibiotics. For his work he won the 1952 Nobel Prize in Physiology or Medicine.

Waksman emigrated to the United States in 1910 and became a naturalized U.S. citizen in 1916. A professor of biochemistry and microbiology at Rutgers University for four decades, he discovered several antibiotics (and introduced the modern sense of that word to name them), and he introduced procedures that have led to the development of many others. The proceeds earned from the licensing of his patents funded a foundation for microbiological research, which established the Waksman Institute of Microbiology located at the Rutgers University Busch Campus in Piscataway, New Jersey (USA). After receiving the Nobel Prize, Waksman and his foundation later were sued by Albert Schatz, one of his Ph.D. students and the discoverer of streptomycin, for minimizing Schatz's role in the discovery.

In 2005, Waksman was granted an ACS National Historic Chemical Landmark in recognition of the significant work of his lab in isolating more than 15 antibiotics, including streptomycin, which was the first effective treatment for tuberculosis.

Early life and education

Selman Waksman was born on July 22 [O.S. July 8] 1888, to Jewish parents, in Nova Pryluka, Kiev Governorate, Russian Empire, now Vinnytsia Oblast, Ukraine. He was the son of Fradia (London) and Jacob Waksman. In 1910, shortly after receiving his diploma from the Fifth Gymnasium in Odessa, he immigrated to the United States and became a naturalized American citizen in 1916.

Waksman attended Rutgers College (now Rutgers University), where he graduated in 1915 with a Bachelor of Science in agriculture. He continued his studies at Rutgers, receiving a Master of Science the following year, in 1916. During his graduate study, he worked under J. G. Lipman at the New Jersey Agricultural Experiment Station at Rutgers performing research in soil bacteriology. Waksman spent some months in 1915–1916 at the United States Department of Agriculture in Washington, DC under Charles Thom, studying soil fungi. He was appointed as a research fellow at the University of California, Berkeley, and in 1918 he was awarded his doctor of philosophy in biochemistry.

Career

He joined the faculty at Rutgers University in the Department of Biochemistry and Microbiology.

At Rutgers, Waksman's team discovered several antibiotics, including actinomycin, clavacin, streptothricin, streptomycin, grisein, neomycin, fradicin, candicidin, and candidin. Waksman co-discovered streptomycin with Albert Schatz. Streptomycin was the first effective drug against gram-negative bacteria and the first antibiotic used to cure tuberculosis. Waksman is credited with coining the term antibiotics to describe antibacterials derived from other living organisms, for example penicillin, though the term was used by the French dermatologist François Henri Hallopeau in 1871 to describe a substance opposed to the development of life.

In 1931, Waksman organized the division of Marine Bacteriology at the Woods Hole Oceanographic Institution (WHOI) in addition to his task at Rutgers. He was appointed a marine bacteriologist there and served until 1942. He was elected a trustee at WHOI and finally a Life Trustee.

In 1951, using half of his patent royalties, Waksman created the Waksman Foundation for Microbiology. At a meeting of the board of trustees of the foundation, held in July 1951, he urged the building of a facility for work in microbiology, named the Waksman Institute of Microbiology, which is located on the Busch Campus of Rutgers University in Piscataway, New Jersey. Waksman, the foundation's first president, was succeeded by his son, Byron H. Waksman, who served from 1970 to 2000.

#28 This is Cool » Nitrous Oxide » 2026-03-18 00:04:50

- Jai Ganesh

- Replies: 0

Nitrous Oxide

Gist

Nitrous oxide (N2O), commonly known as "laughing gas," is a colorless, slightly sweet-smelling non-flammable gas used extensively in medicine for sedation and pain relief, in dentistry, and in food production (whipped cream chargers). It is a powerful greenhouse gas and ozone-depleting substance.

Nitrous oxide has a pain-relieving and numbing effect, which is why it can be used as an anesthetic. It is also used in the chemical industry and in farming. Nitrous oxide is absorbed into the blood through the lungs. It then enters the brain and nerve tissue through the bloodstream.

Summary

Nitrous oxide (dinitrogen oxide or dinitrogen monoxide), commonly known as laughing gas or nitrous, among others, is a chemical compound, an oxide of nitrogen with the formula N2O. At room temperature, it is a colourless non-flammable gas, and has a slightly sweet scent and taste. At elevated temperatures, nitrous oxide is a powerful oxidiser similar to molecular oxygen.

Nitrous oxide has significant medical uses, especially in surgery and dentistry, for its anaesthetic and pain-reducing effects, and it is on the World Health Organization's List of Essential Medicines. Its colloquial name, "laughing gas", coined by Humphry Davy, describes the euphoric effects upon inhaling it, which cause it to be used as a recreational drug inducing a brief "high". When abused chronically, it may cause neurological damage through inactivation of vitamin B12. It is also used as an oxidiser in rocket propellants and motor racing fuels, and as a frothing gas for whipped cream.

Nitrous oxide is also an atmospheric pollutant, with a concentration of 333 parts per billion (ppb) in 2020, increasing at 1 ppb annually. It is a major scavenger of stratospheric ozone, with an impact comparable to that of CFCs. About 40% of human-caused emissions are from agriculture, as nitrogen fertilisers are digested into nitrous oxide by soil micro-organisms. As the third most important greenhouse gas, nitrous oxide substantially contributes to global warming. Reduction of emissions is an important goal in the politics of climate change.

Details

Nitrous oxide (laughing gas) is a sedative healthcare providers use to keep you comfortable during procedures. It’s a colorless, faintly sweet-smelling gas that you breathe in through a nosepiece. Unlike other sedation options, you can drive shortly after receiving nitrous oxide.

Overview:

What is nitrous oxide (laughing gas)?

Nitrous oxide (N2O), commonly known as laughing gas, is a type of short-acting sedative. It’s a colorless, slightly sweet-smelling gas that you breathe in through a mask or nosepiece.

Physicians and dentists have been using nitrous oxide since the mid-19th century — and it’s still one of the most common inhaled sedatives used today. It’s fast-acting and it wears off quickly, making it an ideal sedation option for short or minor procedures.

What does laughing gas do?

Nitrous oxide slows down your nervous system and induces a sense of calm and euphoria. It reduces anxiety and helps you stay comfortable during medical or dental procedures. It doesn’t fully put you to sleep, so you’ll still be able to respond to your provider’s questions or instructions.

Despite its name, laughing gas might not make you laugh. (But then again, it could.) Everyone responds a little differently.

Nitrous oxide takes effect quickly. Within three to five minutes, you might feel:

* Calm.

* Relaxed.

* Happy.

* Giggly.

* Mildly euphoric.

* Light-headed.

* Tingling in your arms and legs.

* Heaviness, like you’re sinking deeper into the exam chair or table.

Who shouldn’t use nitrous oxide sedation?

Laughing gas is a safe medical and dental sedation option for most people, from children to adults. But it might not be right for kids under the age of 2 and those with:

* Certain respiratory conditions, like chronic obstructive pulmonary disease (COPD).

* Stuffy nose (nasal congestion).

* Vitamin B12 deficiency.

* Severe psychiatric conditions.

Ask your healthcare provider whether you’re a candidate for nitrous oxide sedation.

Treatment Details:

What should I expect if I’m getting laughing gas?

Your healthcare provider will talk with you and answer any questions before your procedure. They’ll ask you to sign a consent form so you can receive nitrous oxide.

When it’s time for your procedure, your provider will:

* Place a mask over your nose and mouth. (If you’re getting laughing gas at your dentist’s office, they’ll give you a smaller mask that only covers your nose.)

* Open a tank valve to allow nitrous oxide and oxygen to flow into your mask. (They’ll start with a very low dose to see how you respond.)

* Adjust the dosage until you feel the desired effects.

* Do your procedure. (In many cases, your provider will also give you local anesthesia before beginning. This is because nitrous oxide reduces pain but won’t totally eliminate it. So, it’s common to combine it with other forms of anesthesia.)

* Stop the flow of laughing gas once your procedure is over.

* Ask you to breathe in pure oxygen through your mask until you feel alert again.

* Remove the mask from your face.

* Monitor you for a few minutes before releasing you to go home.

It’s normal to feel a little nervous if you’ve never had laughing gas before. The good news is that you’ll be able to tell your provider if you develop undesirable side effects. If you start to feel dizzy or nauseous, your provider can simply adjust the dosage until it feels comfortable to you.

How long does laughing gas last?

The effects of nitrous oxide last until your provider turns off the gas flow. Once this happens, it takes about 5 to 10 minutes for the sedative to leave your system and for your headspace to return to normal. Due to the short-acting nature of nitrous oxide, you can drive shortly after your procedure.

Risks / Benefits:

What are the benefits of nitrous oxide?

The most notable advantage of laughing gas is that it relieves anxiety. People with medical- or dental-related fears often avoid healthcare visits and put off necessary procedures. Nitrous oxide makes it possible for people to get the care they need and deserve.

Nitrous oxide is also:

* Fast-acting (the effects kick in quickly).

* Short-acting. (Once your provider turns off the gas flow, you’ll start feeling like your usual self in a matter of minutes. This can be helpful if you find the effects of nitrous oxide unpleasant.)

* Easy to administer and doesn’t require needles.

* Safe and effective when given in a healthcare setting.

What are the possible complications of nitrous oxide in a dental or medical setting?

Laughing gas doesn’t cause any long-term complications when given under the care of a healthcare provider. But frequent nitrous oxide exposure (for multiple-phase dental treatment, for instance) can result in vitamin B12 deficiency. If you’re planning several appointments with laughing gas, ask your provider whether you should take a vitamin B12 supplement.

Some people may develop temporary nitrous oxide side effects like:

* Headaches.

* Nausea and vomiting.

* Agitation.

These side effects go away once the nitrous oxide leaves your system.

What are the risks of using nitrous oxide recreationally?

Some people use nitrous oxide recreationally to achieve a momentary euphoric high. But inhaling laughing gas more often than you need it can cause serious and potentially life-threatening health complications like:

* Low blood pressure (hypotension).

* Low oxygen (hypoxia).

* Fainting.

* Heart attack.

* Nerve damage.

People who use laughing gas recreationally have an increased risk of these long-term health conditions:

* Depression.

* Psychosis.

* Memory loss.

* Muscle spasms.

* Tinnitus (ringing in your ears).

* Numbness, especially in your hands and feet.

* Weakened immune system.

* Birth defects (if used during pregnancy).

Additional Information

Nitrous oxide (N2O) is one of several oxides of nitrogen, a colourless gas with a pleasant, sweetish odour and taste, which when inhaled produces insensibility to pain preceded by mild hysteria, sometimes laughter. (Because inhalation of small amounts provides a brief euphoric effect and nitrous oxide is not illegal to possess, the substance has been used as a recreational drug.) Nitrous oxide was discovered by the English chemist Joseph Priestley in 1772; another English chemist, Humphry Davy, later named it and showed its physiological effect. A principal use of nitrous oxide is as an anesthetic in surgical operations of short duration; prolonged inhalation causes death. The gas is also used as a propellant in food aerosols. In automobile racing, nitrous oxide is injected into an engine’s air intake; the extra oxygen allows the engine to burn more fuel per stroke. It is prepared by the action of zinc on dilute nitric acid, by the action of hydroxylamine hydrochloride (NH2OH·HCl) on sodium nitrite (NaNO2), and, most commonly, by the decomposition of ammonium nitrate (NH4NO3).
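The three preparation routes named above can be written as balanced equations. This is a sketch of the standard textbook stoichiometry, not something stated in the post itself; actual product mixtures depend on concentration and temperature, which are not given here.

```latex
% Decomposition of ammonium nitrate on heating (the most common route)
\mathrm{NH_4NO_3 \;\longrightarrow\; N_2O + 2\,H_2O}

% Zinc with dilute nitric acid
\mathrm{4\,Zn + 10\,HNO_3 \;\longrightarrow\; 4\,Zn(NO_3)_2 + N_2O + 5\,H_2O}

% Hydroxylamine hydrochloride with sodium nitrite
\mathrm{NH_2OH{\cdot}HCl + NaNO_2 \;\longrightarrow\; N_2O + NaCl + 2\,H_2O}
```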

#29 Re: This is Cool » Miscellany » 2026-03-18 00:04:18

2525) Sulfur Dioxide

Gist

Sulfur dioxide (SO2) is a colorless, pungent, and toxic gas composed of sulfur and oxygen, primarily produced by burning fossil fuels and volcanic activity. It is a major air pollutant known to cause respiratory issues, such as difficulty breathing and asthma exacerbation. Industrially, it is crucial for manufacturing sulfuric acid and is used as a preservative in food and wine.

Sulfur dioxide (SO2) is an industrial chemical used primarily as a precursor for sulfuric acid production, a preservative (especially for dried fruits and wine), a bleaching agent in paper/pulp manufacturing, and a disinfectant. It acts as a reducing agent in chemical processes and a refrigerant in industrial cooling systems.

Summary

Sulfur dioxide (SO2) is an inorganic compound, a heavy, colorless, poisonous gas. It is produced in huge quantities in intermediate steps of sulfuric acid manufacture.

Sulfur dioxide has a pungent, irritating odor, familiar as the smell of a just-struck match. Occurring in nature in volcanic gases and in solution in the waters of some warm springs, sulfur dioxide usually is prepared industrially by the burning in air or oxygen of sulfur or such compounds of sulfur as iron pyrite or copper pyrite. Large quantities of sulfur dioxide are formed in the combustion of sulfur-containing fuels.

Sulfur dioxide pollution carries serious health and environmental risks and is one of the six criteria air pollutants regulated by the U.S. Environmental Protection Agency and other regulatory agencies around the world. In the atmosphere sulfur dioxide can combine with water vapor to form sulfuric acid, a major component of acid rain; in the second half of the 20th century, measures to control acid rain were widely adopted. Most of the sulfur dioxide released into the environment comes from coal-fired power plants and petroleum refineries. Paper pulp manufacturing, cement manufacturing, and metal smelting and processing facilities are other important sources.

Sulfur dioxide is a precursor of the trioxide (SO3) used to make sulfuric acid. In the laboratory the gas may be prepared by reducing sulfuric acid (H2SO4) to sulfurous acid (H2SO3), which decomposes into water and sulfur dioxide, or by treating sulfites (salts of sulfurous acid) with strong acids, such as hydrochloric acid, again forming sulfurous acid.
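As an illustration of the sulfite route described above, the equations below use sodium sulfite as the example salt. That choice is an assumption made for the sketch; the post only says "sulfites" and names hydrochloric acid as one possible strong acid.

```latex
% A sulfite salt treated with a strong acid gives sulfurous acid
\mathrm{Na_2SO_3 + 2\,HCl \;\longrightarrow\; 2\,NaCl + H_2SO_3}

% Sulfurous acid decomposes into water and sulfur dioxide
\mathrm{H_2SO_3 \;\longrightarrow\; H_2O + SO_2}
```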

Details

Sulfur dioxide (SO2) is a pungent, toxic gas that is the primary product of burning elemental sulfur. It exists widely in nature, mostly from volcanic activity and burning fossil fuels. It is found elsewhere in the solar system, as a gas in the atmospheres of Venus and Jupiter’s moon Io and as an ice on the other Galilean moons.

The major use of SO2 is in the manufacture of sulfuric acid (H2SO4), the most-produced chemical worldwide. Elemental sulfur and oxygen react to form SO2, which is catalytically oxidized with additional oxygen to make sulfur trioxide (SO3). The SO3 is mixed with existing H2SO4 to produce oleum (fuming sulfuric acid), which is added to water in a strongly exothermic process to make concentrated H2SO4. This is known as the contact process; it dates to an 1831 patent by British inventor Peregrine Phillips.
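The steps of the contact process described above can be summarized as the following balanced equations. This is a sketch of the usual textbook form; the catalyst and operating conditions are not specified in the post and are left out here.

```latex
% Burning sulfur
\mathrm{S + O_2 \;\longrightarrow\; SO_2}

% Catalytic oxidation to sulfur trioxide
\mathrm{2\,SO_2 + O_2 \;\rightleftharpoons\; 2\,SO_3}

% Absorption in existing sulfuric acid to give oleum
\mathrm{SO_3 + H_2SO_4 \;\longrightarrow\; H_2S_2O_7}

% Dilution of oleum with water
\mathrm{H_2S_2O_7 + H_2O \;\longrightarrow\; 2\,H_2SO_4}
```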

In chemical laboratories, it has multiple functions, including as a reducing agent, as a reagent in sulfonylation reactions, and as a low-temperature solvent. SO2 is also used to preserve dried fruits such as raisins and prunes and to prevent spoilage in wine.

The hazard information table shows that SO2 is pretty nasty stuff; but, in addition to its value as a chemical, it has another positive side: Volcanoes that emit the gas can have a beneficial effect on climate change. When SO2 spews into the stratosphere, it reacts photochemically with oxygen to form H2SO4 aerosols, which in turn reflect solar radiation and cool the atmosphere. But, as might be expected, even this has a downside because SO2 and H2SO4 contribute to acid rain.

Additional Information:

What Is Sulfur Dioxide?

Sulfur dioxide (SO2) is a gaseous air pollutant composed of sulfur and oxygen. SO2 forms when sulfur-containing fuel such as coal, petroleum oil, or diesel is burned. Sulfur dioxide gas can also change chemically into sulfate particles in the atmosphere, a major part of fine particle pollution, which can blow hundreds of miles away.

What Are the Health Effects of Sulfur Dioxide Pollution?

Sulfur dioxide causes a range of harmful effects on the lungs:

* Wheezing, shortness of breath, chest tightness, and other problems, especially during exercise or physical activity. Rapid breathing during exercise helps SO2 reach the lower respiratory tract, as does breathing through the mouth.

* Long-term exposure at high levels increases respiratory symptoms and reduces the ability of the lungs to function.

* Short exposures to peak levels of SO2 in the air can make it difficult for people with asthma to breathe when they are active outdoors.

* Increased risk of hospital admissions or emergency room visits, especially among children, older adults and people with asthma.

What Are the Sources of Sulfur Dioxide Emissions?

As of 2020, human-made sources in the U.S. emit about 1.8 million short tons of sulfur dioxide per year (down from just over 6 million short tons per year in 2011), mainly from burning fuels. Power plants, commercial and institutional boilers, internal combustion engines, manufacturing, and industrial processes such as petroleum refining and metal processing are the largest sources of emissions, followed by diesel engines in old buses and trucks, locomotives, ships, and off-road equipment such as construction vehicles. Emissions of sulfur dioxide will decline as cleanup of many of these sources continues in future years.
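To put the two emission figures above in proportion, here is a minimal Python sketch of the arithmetic. It assumes 6.0 million short tons for 2011 (the post says "just over 6 million", so the real drop is slightly larger), and the function names are illustrative only.

```python
# Figures quoted in the post (U.S. human-made SO2 emissions, million short tons per year).
EMISSIONS_2011 = 6.0  # "just over 6 million", treated here as a lower bound (assumption)
EMISSIONS_2020 = 1.8


def percent_decrease(start: float, end: float) -> float:
    """Percentage drop from start to end."""
    return (start - end) / start * 100


def average_annual_change(start: float, end: float, years: int) -> float:
    """Mean change per year over the period (negative means a decline)."""
    return (end - start) / years


if __name__ == "__main__":
    drop = percent_decrease(EMISSIONS_2011, EMISSIONS_2020)
    per_year = average_annual_change(EMISSIONS_2011, EMISSIONS_2020, 2020 - 2011)
    print(f"Decrease 2011-2020: about {drop:.0f}%")
    print(f"Average change: about {per_year:.2f} million short tons per year")
```

With these inputs the decrease comes out at roughly 70 percent, which is only as precise as the rounded figures it starts from.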

Where Do High SO2 Concentrations Occur?

Coal-fired power plants remain one of the biggest sources of sulfur dioxide in the U.S. Columns of emissions (plumes), such as those from the chimneys of a coal-fired power plant, are moved by wind over long distances before touching down at ground level at faraway sites. These plumes can also get trapped at ground level by unusual weather conditions, such as a layer of warmer air occurring higher up in the atmosphere (inversion).

Ports, smelters, and other sources of sulfur dioxide also cause high concentrations of emissions nearby.

People who live and work near these large sources get the highest exposure to SO2.

What Can We Do about it?

SO2 levels have improved over time, thanks to policies requiring cleaner fuels and pollution controls on power plants. The nation achieved major reductions in this pollutant through its successful program to reduce acid rain.

However, it remains a health concern. What’s more, even with pollution controls installed, high levels can occur when a polluting source such as a power plant is starting up or shutting down its operation or if its equipment malfunctions.

Individuals can take steps to protect themselves on days with unhealthy levels of air pollutants and also ask policymakers at all levels of government to continue to require cleanup of air pollution.

#30 Re: Jai Ganesh's Puzzles » General Quiz » 2026-03-17 22:24:15

Hi,

#10797. What does the term in Geography Dew pond mean?

#10798. What does the term in Geography Diapir mean?

#31 Re: Jai Ganesh's Puzzles » English language puzzles » 2026-03-17 22:02:55

Hi,

#6003. What does the adjective odious mean?

#6004. What does the noun oenophile mean?

#32 Re: Jai Ganesh's Puzzles » Doc, Doc! » 2026-03-17 21:53:38

Hi,

#2598. What does the medical term Dyschromatosis universalis hereditaria mean?

#33 Re: Jai Ganesh's Puzzles » 10 second questions » 2026-03-17 21:41:26

Hi,

#9884.

#34 Re: Jai Ganesh's Puzzles » Oral puzzles » 2026-03-17 21:29:40

Hi,

#6377.

#35 Re: Exercises » Compute the solution: » 2026-03-17 21:17:10

Hi,

2738.

#36 Science HQ » Malaria » 2026-03-17 16:00:11

- Jai Ganesh

- Replies: 0

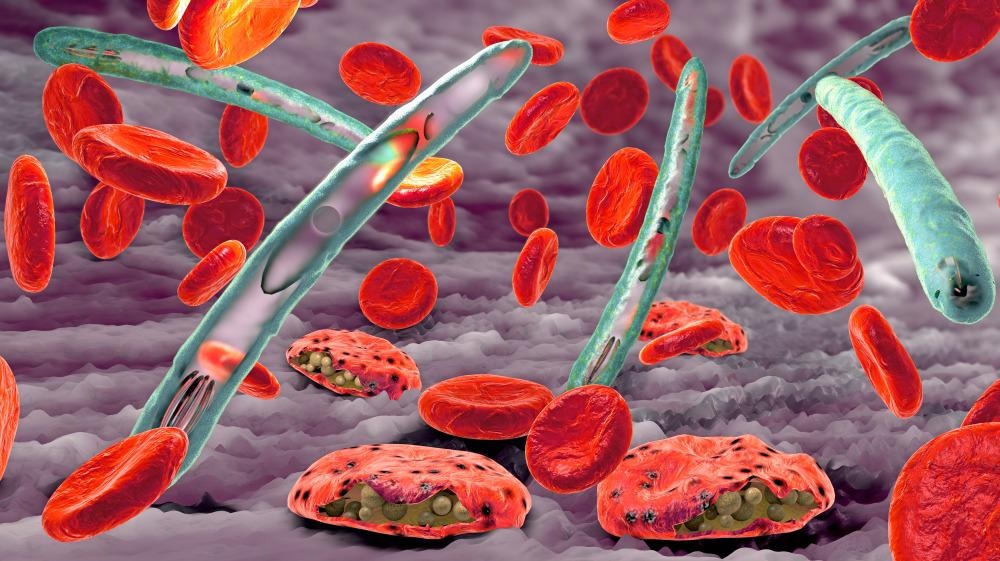

Malaria

Gist

Malaria is a severe, often fatal, disease caused by Plasmodium parasites transmitted through the bites of infected female Anopheles mosquitoes, primarily in tropical regions. Symptoms include high fever, shivering chills, headache, and fatigue. It is preventable and curable, but requires prompt diagnosis and treatment.

Malaria is caused by single-celled Plasmodium parasites, which are transmitted to humans through the bite of an infected female Anopheles mosquito. When an infected mosquito bites a person, the parasites enter the bloodstream, travel to the liver to mature, and subsequently infect red blood cells, causing symptoms.

Summary

Malaria is a mosquito-borne infectious disease which is transmitted by the bite of Anopheles mosquitoes. Human malaria causes symptoms that typically include fever, fatigue, vomiting, and headaches; in severe cases, it can cause jaundice, seizures, coma, or death. Symptoms usually begin 10 to 15 days after being bitten by an infected Anopheles mosquito. If not properly treated, people may have recurrences of the disease months later. Those who survive an infection develop partial immunity, being susceptible to reinfection although with milder symptoms. This partial resistance disappears over months to years if the person has no continuing exposure to malaria.

Malaria is caused by single-celled parasites of the genus Plasmodium, generally spread through the bites of an infected female Anopheles mosquito. The mosquito bite introduces the parasites from the mosquito's saliva into the blood. The parasites initially reproduce and mature in the liver without causing symptoms. After a few days the mature parasites spread into the bloodstream, where they infect and destroy red blood cells, causing the symptoms of infection. Five species of Plasmodium commonly infect humans. The three species associated with more severe cases are P. falciparum (which is responsible for the vast majority of malaria deaths), P. vivax, and P. knowlesi (a simian malaria that spills over into thousands of people a year). P. ovale and P. malariae generally cause a milder form of malaria. Malaria is typically diagnosed by the microscopic examination of blood using blood films, or with antigen-based rapid diagnostic tests. Methods that use the polymerase chain reaction to detect the parasite's DNA have been developed, but they are not widely used in areas where malaria is common, due to their cost and complexity.

The risk of disease can be reduced by preventing mosquito bites through the use of mosquito nets and insect repellents or with mosquito-control measures such as spraying insecticides and draining standing water. Several prophylactic medications are available to prevent malaria in areas where the disease is common. As of 2023, two malaria vaccines have been endorsed by the World Health Organization. Resistance among the parasites has developed to several antimalarial medications; for example, chloroquine-resistant P. falciparum has spread to most malaria-prone areas, and resistance to artemisinin has become a problem in some parts of Southeast Asia. Because of this, drug treatment for malaria infection should be tailored to best fit the Plasmodium species involved and the geographical location where the infection was acquired.

The disease is widespread in the tropical and subtropical regions that exist in a broad band around the equator. This includes much of sub-Saharan Africa, Asia, and Latin America. In 2023, some 263 million cases of malaria worldwide resulted in an estimated 597,000 deaths. Around 95% of the cases and deaths occurred in sub-Saharan Africa. Malaria is commonly associated with poverty and has a significant negative effect on economic development; in Africa, it is estimated to cause economic losses of US$12 billion a year (2005 estimate) due to increased healthcare costs, lost ability to work, and adverse effects on tourism.
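The 2023 figures above can be turned into a rough ratio and regional breakdown. The sketch below is plain arithmetic on the numbers quoted in the post; it applies the "around 95%" share to cases and deaths alike, which is an approximation, and the function names are illustrative only.

```python
# Worldwide estimates for 2023 as quoted in the post.
CASES_2023 = 263_000_000
DEATHS_2023 = 597_000
SUB_SAHARAN_SHARE = 0.95  # "around 95%" of cases and deaths (approximate)


def deaths_per_1000_cases(cases: int, deaths: int) -> float:
    """Crude ratio of deaths to cases, scaled per 1,000 cases."""
    return deaths / cases * 1000


def regional_estimate(total: int, share: float) -> int:
    """Approximate count attributable to a region, given its share of the total."""
    return round(total * share)


if __name__ == "__main__":
    ratio = deaths_per_1000_cases(CASES_2023, DEATHS_2023)
    print(f"About {ratio:.2f} deaths per 1,000 cases")
    print(f"About {regional_estimate(CASES_2023, SUB_SAHARAN_SHARE):,} cases in sub-Saharan Africa")
    print(f"About {regional_estimate(DEATHS_2023, SUB_SAHARAN_SHARE):,} deaths in sub-Saharan Africa")
```

The ratio is a crude one: it divides estimated deaths by estimated cases and says nothing about how risk varies by age, species of Plasmodium, or access to treatment.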

Details

Malaria is a potentially life-threatening illness caused by parasites. You get it through the bite of an infected mosquito. It’s most common in parts of the world that are hot and humid, like Africa and parts of Asia. It can cause flu-like symptoms that can progress to severe illness if not treated.

What Is Malaria?

Malaria is a disease you get from being bitten by a mosquito. Some mosquitoes carry tiny parasites. They can infect you with the parasites when they bite you. Malaria can cause severe illness and death if left untreated. Kids under 5 are more likely to get life-threatening illness. Malaria is most common in tropical areas where it’s hot and humid. Most cases happen in Africa. It’s rare in the U.S. See a healthcare provider right away if you live in or have traveled to an area where malaria spreads and you have symptoms. This is true even if you’ve taken medications to prevent malaria during your trip.

Symptoms and Causes:

Symptoms of malaria

Symptoms of malaria can be mild to severe. They often start as flu-like symptoms and can get worse. They include:

* Fever

* Chills

* Headache

* Muscle aches

* Fatigue

* Difficulty breathing

* Diarrhea, nausea or vomiting

* Seizures

* Yellowing of your skin or whites of your eyes (jaundice)

* Dark or bloody pee

Malaria symptoms usually appear several days to a month after you’re infected. Some people don’t feel sick for a year or longer after the mosquito bite.

Malaria causes

Plasmodium parasites cause malaria. There are five types that can infect humans. Plasmodium falciparum (P. falciparum) and Plasmodium vivax (P. vivax) are the most common. P. falciparum often causes severe illness.

How do you get malaria?

A mosquito gets the parasitic infection when it bites someone who’s infected. The mosquito then bites you and spreads the infection. The parasite multiplies in your liver and spreads to your bloodstream. There, it can spread to other people if a mosquito bites you. Rarely, malaria can also spread through:

* Pregnancy or childbirth (vertical transmission)

* Blood transfusions

* Organ donations

* Sharing needles

Risk factors

You’re at a higher risk of getting malaria if you live in or travel to areas where it spreads, like parts of Africa. You’re at a higher risk of serious illness and death if you:

* Are younger than 5

* Are pregnant

* Have a weakened immune system

* Don’t have access to healthcare

What countries have malaria?

Malaria is most common in areas with warm temperatures and high humidity, including:

* Africa

* Central and South America

* Dominican Republic, Haiti and other areas in the Caribbean

* Islands in the Central and South Pacific Ocean (Oceania)

* South and Southeast Asia

Complications of malaria

If left untreated, malaria can cause severe complications. These include:

* Blocked blood vessels in your brain (cerebral malaria)

* Coma

* Organ failure

These complications can be fatal.

Diagnosis and Tests:

How doctors diagnose malaria

Healthcare providers test a sample of your blood to diagnose malaria. They’ll look for Plasmodium parasites and identify what type of infection you have. Your provider will use this information to determine the right treatment. It’s important to tell your provider if you’ve traveled within the past year so they know to test for malaria.

Management and Treatment:

How is malaria treated?

Healthcare providers treat malaria with medications that kill the parasite (antimalarial medications). The type of antimalarial medication your provider treats you with depends on:

* Where you were infected — you’re more likely to get drug-resistant infections in certain parts of the world

* The type of Plasmodium causing the infection

* How sick you are

* Whether you’re pregnant or breastfeeding

* Your age

Medications can cure malaria, but it’s important to start treating it as soon as possible.

Antimalarial drugs

Antimalarial medications kill the Plasmodium parasites. You might receive them in an IV or take them by mouth. They include:

* Artemether-lumefantrine

* Atovaquone-proguanil

* Chloroquine or hydroxychloroquine

* Doxycycline, tetracycline or clindamycin

* Mefloquine

* Quinine

* Primaquine

* Tafenoquine

You might receive one or a combination of these. Take all medications as prescribed, even if you start to feel better.

When should I see my healthcare provider?

See a healthcare provider right away if you’ve traveled to or live in a country where malaria is common and you have symptoms. Early diagnosis gives you the best chance for a full recovery.

Outlook / Prognosis:

What can I expect if I have malaria?

Antimalarial medications can cure malaria, especially if started early. You might need to stay at the hospital, at least to start your treatment. Treatment can last for about two weeks, but you might start feeling better in a few days. Let your provider know if you aren’t feeling better or if your symptoms get worse. Sometimes, even after treatment, malaria infections can come back (recur). This happens when the parasite isn’t completely killed by treatment. It can stay in your body without causing symptoms. You can start having symptoms again years after your initial infection and treatment. Medications like primaquine and tafenoquine make this less likely to happen. You can get malaria again, even if you’ve had it before.

Prevention:

Can you prevent malaria?

There are a few ways to reduce your risk of getting and spreading malaria:

* Preventive medications: If you’re traveling to an area where malaria is common, your healthcare provider might prescribe antimalarial medications for you to take before, during and after your stay. If you get malaria while on an antimalarial drug, providers will give you a different medication to treat it.

* Mosquito bite prevention: Wear bug spray with DEET, cover as much skin as possible with clothing, sleep under mosquito netting and take other precautions to avoid getting bitten.

* Vaccination: Public health officials recommend vaccinating against malaria for children who live in areas where infections are common. Malaria vaccines are not currently recommended for travelers.

Additional Information:

Overview

Malaria is a disease caused by a parasite. The parasite is spread to humans through the bites of infected mosquitoes. People who have malaria usually feel very sick with a high fever and shaking chills.

While the disease is uncommon in temperate climates, malaria is still common in tropical and subtropical countries. Each year nearly 290 million people are infected with malaria, and more than 400,000 people die of the disease.

To reduce malaria infections, world health programs distribute preventive drugs and insecticide-treated bed nets to protect people from mosquito bites. The World Health Organization has recommended a malaria vaccine for use in children who live in countries with high numbers of malaria cases.

Protective clothing, bed nets and insecticides can protect you while traveling. You also can take preventive medicine before, during and after a trip to a high-risk area. Many malaria parasites have developed resistance to common drugs used to treat the disease.

Symptoms

Signs and symptoms of malaria may include:

* Fever

* Chills

* General feeling of discomfort

* Headache

* Nausea and vomiting

* Diarrhea

* Abdominal pain

* Muscle or joint pain

* Fatigue

* Rapid breathing

* Rapid heart rate

* Cough

Some people who have malaria experience cycles of malaria "attacks." An attack usually starts with shivering and chills, followed by a high fever, followed by sweating and a return to normal temperature.

Malaria signs and symptoms typically begin within a few weeks after being bitten by an infected mosquito. However, some types of malaria parasites can lie dormant in your body for up to a year.

When to see a doctor

Talk to your doctor if you experience a fever while living in or after traveling to a high-risk malaria region. If you have severe symptoms, seek emergency medical attention.

Complications

Malaria can be fatal, particularly when caused by the Plasmodium species common in Africa. The World Health Organization estimates that about 94% of all malaria deaths occur in Africa, most commonly in children under the age of 5.

Malaria deaths are usually related to one or more serious complications, including:

* Cerebral malaria. If parasite-filled blood cells block small blood vessels to your brain (cerebral malaria), swelling of your brain or brain damage may occur. Cerebral malaria may cause seizures and coma.

* Breathing problems. Accumulated fluid in your lungs (pulmonary edema) can make it difficult to breathe.

* Organ failure. Malaria can damage the kidneys or liver or cause the spleen to rupture. Any of these conditions can be life-threatening.

* Anemia. Malaria may result in not having enough red blood cells for an adequate supply of oxygen to your body's tissues (anemia).

* Low blood sugar. Severe forms of malaria can cause low blood sugar (hypoglycemia), as can quinine — a common medication used to combat malaria. Very low blood sugar can result in coma or death.

Malaria may recur

Some varieties of the malaria parasite, which typically cause milder forms of the disease, can persist for years and cause relapses.

Prevention

If you live in or are traveling to an area where malaria is common, take steps to avoid mosquito bites. Mosquitoes are most active between dusk and dawn. To protect yourself from mosquito bites, you should:

* Cover your skin. Wear pants and long-sleeved shirts. Tuck in your shirt, and tuck pant legs into socks.

* Apply insect repellent to skin. Use an insect repellent registered with the Environmental Protection Agency on any exposed skin. These include repellents that contain DEET, picaridin, IR3535, oil of lemon eucalyptus (OLE), para-menthane-3,8-diol (PMD) or 2-undecanone. Do not use a spray directly on your face. Do not use products with oil of lemon eucalyptus (OLE) or p-Menthane-3,8-diol (PMD) on children under age 3.

* Apply repellent to clothing. Sprays containing permethrin are safe to apply to clothing.

* Sleep under a net. Bed nets, particularly those treated with insecticides, such as permethrin, help prevent mosquito bites while you are sleeping.

Preventive medicine

If you'll be traveling to a location where malaria is common, talk to your doctor a few months ahead of time about whether you should take drugs before, during and after your trip to help protect you from malaria parasites.

In general, the drugs taken to prevent malaria are the same drugs used to treat the disease. What drug you take depends on where and how long you are traveling and your own health.

Vaccine

The World Health Organization has recommended a malaria vaccine for use in children who live in countries with high numbers of malaria cases.

Researchers are continuing to develop and study malaria vaccines to prevent infection.

#37 Re: This is Cool » Miscellany » 2026-03-17 00:04:13

2524) Microchip

Gist

Microchips (integrated circuits) are tiny silicon devices containing millions or billions of transistors that process, store, and manage data within electronics. They act as the "brains" or "nervous system" for devices ranging from smartphones and computers to cars, medical devices, and household appliances.

Microchips, also known as integrated circuits or computer chips, are the tiny, powerful devices at the heart of nearly every device we use today. These chips are essential building blocks in all modern technology: from smartphones and computers to cars and medical devices.

Summary

Microchips, those unassuming slivers of silicon, are more than just components; they are the linchpins of modern technology. Fundamentally, a microchip, or an integrated circuit, is a convergence of numerous electronic elements—transistors, resistors, capacitors—meticulously integrated onto a tiny silicon chip. This integration represents more than just a triumph in making things smaller; it stands as a powerful demonstration of human creativity and skill in the field of electronics.

The true value of microchips lies not in their physical form but in their pervasive influence across various technological domains. From the smartphone in your pocket to the satellites orbiting our planet, microchips are the unsung heroes, silently orchestrating the digital symphony that underpins our daily lives.

As we delve into the workings of microchips, this article aims to transcend the fleeting trends of the tech world, focusing instead on the enduring principles that make microchips a cornerstone of innovation. We'll explore their fundamental concepts, steering clear of ephemeral specifics, to appreciate their lasting impact on technology.

Details

A microchip is an electronic device made of a small, flat piece of semiconductor material modified with other dopants, oxides, and metals to create electronic components, including transistors, diodes, resistors, and capacitors connected in a circuit.

Microchips are also called:

* Integrated circuits (ICs)

* Computer chips

* Semiconductors

* Chips

Integrated circuits have replaced assemblies of discrete components attached by wires or printed circuit boards (PCBs) because they are a single monolithic device that is much smaller, uses much less power, and can be mass produced at a significantly lower cost.

Semiconductor materials were discovered in 1821 by Thomas Johann Seebeck, and the first working semiconductor transistor was created in 1947 by John Bardeen, Walter Brattain, and William Shockley. The components and all their interconnects were then combined into a single device in 1959 by Robert Noyce. The key to this invention and all that followed was the planar manufacturing process, which used photolithography to deposit and remove materials one layer at a time in a precise manner.

Integrated circuits are an integral part of modern life, providing electronics for devices ranging from toys to deep space probes. In 2023, the worldwide revenue from microchip sales was $526.9 billion. That year’s sales also saw further growth in chip usage beyond computers: 32% were for communication, 17% for automotive applications, 14% for industrial devices, 11% for consumer electronics, and only 25% for computing.
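As a rough check on the figures above, the segment shares can be turned into approximate dollar amounts. This is only a sketch: the names are illustrative, and it assumes the quoted percentages apply directly to the $526.9 billion total.

```python
# Total 2023 microchip revenue and segment shares, as quoted in the text above.
TOTAL_REVENUE_BILLION_USD = 526.9
SEGMENT_SHARE_PERCENT = {
    "communication": 32,
    "computing": 25,
    "automotive": 17,
    "industrial": 14,
    "consumer electronics": 11,
}


def revenue_by_segment(total, shares):
    """Split a total across segments using percentage shares."""
    return {name: total * pct / 100 for name, pct in shares.items()}


if __name__ == "__main__":
    split = revenue_by_segment(TOTAL_REVENUE_BILLION_USD, SEGMENT_SHARE_PERCENT)
    for name, amount in split.items():
        print(f"{name}: about ${amount:.1f} billion")
    # The quoted shares add up to 99%, presumably because of rounding.
    print("sum of shares:", sum(SEGMENT_SHARE_PERCENT.values()), "%")
```

Note that the quoted shares total 99%, so the segment amounts will not add up exactly to the full revenue figure.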

Driven by Moore’s Law, which states that the number of transistors in an IC will double every two years, the growing complexity of the circuits and the ever-decreasing size of components make designing and manufacturing microchips more challenging with each generation of chips.
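Read as a formula, the doubling rule above says that a starting count N0 grows to roughly N0 x 2^(t/2) after t years. The short sketch below works that out; the function name and the 1-billion-transistor starting point are hypothetical, chosen only for illustration.

```python
def projected_transistors(initial_count, years, doubling_period_years=2.0):
    """Project a transistor count assuming one doubling per period."""
    return initial_count * 2 ** (years / doubling_period_years)


if __name__ == "__main__":
    # Hypothetical starting point: a chip with 1 billion transistors.
    start = 1_000_000_000
    for years in (0, 2, 4, 10):
        count = projected_transistors(start, years)
        print(f"after {years} years: {count:,.0f} transistors")
```

Moore's Law is an empirical trend rather than a physical law, so a projection like this is a rule of thumb, not a prediction.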

The general size of individual elements on a chip, referred to as feature size, is measured in nanometers (nm), or one-billionth of a meter. Current semiconductor manufacturers use 14-nm, 10-nm, 7-nm, 5-nm, and 3-nm processes, with 2-nm technologies coming online. For scale, a grain of rice is 5 million nanometers long.
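To get a feel for these scales, the rice-grain comparison can be worked through as simple unit arithmetic. This sketch treats each process name as a literal length, which is a simplification; the helper names are illustrative.

```python
NM_PER_MM = 1_000_000  # 1 millimeter = 1,000,000 nanometers


def nm_to_mm(length_nm):
    """Convert a length in nanometers to millimeters."""
    return length_nm / NM_PER_MM


def features_across(length_nm, feature_size_nm):
    """Count how many features of a given size fit side by side along a length."""
    return length_nm // feature_size_nm


if __name__ == "__main__":
    rice_grain_nm = 5_000_000  # figure quoted in the text above
    print("rice grain length:", nm_to_mm(rice_grain_nm), "mm")
    for node_nm in (14, 10, 7, 5, 3, 2):
        count = features_across(rice_grain_nm, node_nm)
        print(f"{node_nm}-nm features across one grain: {count:,}")
```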

In 2023, researchers created a record-breaking microprocessor containing 1.2 trillion transistors. Intel’s line of CPUs in 2024 includes more than 100 million transistors on a single chip.

The Elements of a Typical Microchip

Integrated circuits are made from semiconductor material, usually silicon, stacked in overlapping layers. Here are the most common elements in a microchip:

* Silicon substrate: The base pure silicon crystal layer from which the other layers are constructed by removing or depositing other materials or doping the crystal material.

* Layers: Electronic circuits are created on individual layers. Layers are modified with photolithography, etching, and deposition to produce the desired components and interconnections. Some layers also serve as electrical insulators.

* Vias: A conducting zone, usually cylindrical, used to transmit electrical signals between layers.

* Components: The electronic devices that make up the desired circuit. In most ICs, these consist of transistors, capacitors, diodes, resistors, and sometimes inductors.

* Interconnects: Metalized paths on a given layer that conduct electricity between components or to vias.

* Packaging: Once completed, the IC is placed inside an assembly called a semiconductor package that protects and insulates the delicate silicon chip, can connect multiple chips, and provides a way to connect the chip or chips to a larger electronics circuit.

How Microchips Are Manufactured

There are three steps to microchip manufacturing. Each step is highly optimized and automated to minimize cost, ensure quality, and maximize efficiency. Engineers designing ICs need to have a good understanding of the manufacturing process because each step determines the size, shape, and spacing of the components.

Step 1: Wafer Production

Making blank silicon wafers is the first step in semiconductor manufacturing. This process begins by growing a monocrystalline cylindrical ingot, called a boule, of semiconductor material, usually pure silicon. The boule is then sliced into a thin wafer, machined to create a flat surface, chemically etched to remove any damage from the machining, and polished. Electronic wafers are usually 100 to 450 mm in diameter. The most common size is 300 mm across and 775 µm thick.

Step 2: Fabrication

The circuitry, with all its components and interconnects, is created in a semiconductor fabrication facility, usually called a fab. Each layer and the topology of the circuitry is created in a series of highly controlled steps. Robots move wafers from machine to machine in clusters. Most chip fabrication processes follow these steps for each layer:

* Grow a silicon dioxide layer to cover the layer completely (also called passivation).

* Add a photoresist coating.

* Expose the photoresist layer to ultraviolet light in the pattern of the geometry you want to create. The photoresist layer is then developed, and the material exposed to light is removed. This is called photolithography.

* Use chemicals, usually a strong acid, to remove the oxide layer where the photoresist was removed. This is referred to as etching.

* Remove the undeveloped photoresist material.

* If doping is needed for the layer, ion implantation introduces dopant atoms into the crystal structure to create the desired semiconductor behavior for transistors and other components.

* For other materials, various forms of chemical or vapor deposition are used to create interconnects, vias, and other components.
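The oxide, photoresist, exposure, etch, and strip steps listed above can be sketched as a toy model on a one-dimensional strip of wafer. This is only an illustration under simplifying assumptions: the function name and the mask are hypothetical, each position is either fully covered or fully bare, and doping and deposition are left out.

```python
def pattern_layer(exposed_mask):
    """Run one simplified patterning pass over a 1-D strip of wafer.

    exposed_mask[i] is True where ultraviolet light reaches the photoresist.
    Returns a list that is True where the oxide layer survives.
    """
    n = len(exposed_mask)
    oxide = [True] * n   # grow an oxide layer over the whole strip
    resist = [True] * n  # coat the whole strip with photoresist

    # Develop: resist that was exposed to light is removed.
    resist = [r and not lit for r, lit in zip(resist, exposed_mask)]

    # Etch: oxide is removed wherever no resist protects it.
    oxide = [ox and r for ox, r in zip(oxide, resist)]

    # Strip: the remaining resist is removed once etching is done.
    resist = [False] * n

    return oxide


if __name__ == "__main__":
    mask = [False, True, True, False, False, True]
    remaining = pattern_layer(mask)
    # '#' marks oxide that survived, '.' marks an opening etched into it.
    print("".join("#" if ox else "." for ox in remaining))
```

The openings left in the oxide are where a later doping or deposition step would act on the layer underneath.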

Step 3: Packaging

Once each layer has been constructed and the wafer is cleaned and tested, it is cut into individual chips called dies. One or more dies are then attached to a structure through bonding, and the IC is encapsulated in different materials, depending on the application. Some packages contain a single chip, but the current trend is to combine multiple dies in a single package.

Microchip Types and Uses

The types and uses of integrated circuits are growing every year. Early ICs often performed a single function. But as the manufacturing technology and design tools have improved, chips have shifted to being multifunction.

Smartphones are a great example of how multiple types of chips can be combined in a single device for different uses. They contain radio frequency (RF) chips for the 5G radio and GPS, optoelectrical chips for the cameras, LED chips for the display, digital ICs for the processing units, micro-electromechanical systems (MEMS) chips for the accelerometer, and a dozen other integrated circuits to sense, control, and modify a vast number of uses.

The different types of chips can be categorized by the signaling they carry out.

Analog Integrated Circuits

Analog signals vary across a continuous voltage range rather than switching between just a high and a low voltage. Analog ICs are used to amplify, filter by frequency, and mix signals. The frequency and power of an analog IC can vary greatly, and higher frequencies and higher powers present significant design challenges.

Common uses for analog ICs include:

* Optical, thermal, and audio sensors

* Power management circuits

* Operational amplifiers (op-amps)

* Audio and video signal processing

* Telecommunications, including radio communication and optical signal processing

* RF circuits

* Signal conditioning

* Machine controllers

Digital Integrated Circuits

Digital ICs are logic devices that contain millions or billions of logic gates made of transistors. A signal running at a fixed clock frequency is modified or measured as either high or low, zero or one. By combining different logic devices, very complex calculations can be done with very little power used.
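To illustrate how simple gates combine into a calculation, here is a minimal sketch of binary addition built from a single gate type, NAND. The construction is a standard textbook one rather than anything specific to the chips described here, and all function names are illustrative.

```python
def nand(a, b):
    """NAND gate on two bits (0 or 1)."""
    return 0 if (a and b) else 1


def xor(a, b):
    """XOR built from four NAND gates."""
    n1 = nand(a, b)
    return nand(nand(a, n1), nand(b, n1))


def full_adder(a, b, carry_in):
    """Add three bits using only NAND gates; return (sum_bit, carry_out)."""
    partial = xor(a, b)
    sum_bit = xor(partial, carry_in)
    carry_out = nand(nand(a, b), nand(partial, carry_in))
    return sum_bit, carry_out


def add(x, y, width=8):
    """Ripple-carry addition of two non-negative integers, bit by bit."""
    result, carry = 0, 0
    for i in range(width):
        bit, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        result |= bit << i
    return result, carry  # carry is 1 if the sum overflowed the width


if __name__ == "__main__":
    print(add(5, 9))      # fits in 8 bits
    print(add(200, 100))  # overflows 8 bits, so the carry flag is set
```

Each call to `full_adder` stands in for a handful of gates; an 8-bit adder of this kind is a chain of eight such blocks.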

Some of the most common uses for digital ICs include:

* Logic ICs or processors

** Microprocessors

** Microcontrollers

** Application-specific integrated circuits (ASICs)

* Memory chips

* Field programmable gate arrays (FPGAs)

* Digital power management devices

* System-on-a-chip (SoC) devices

* Multi-die chips

Mixed-signal Integrated Circuits

Some integrated circuits combine circuitry to handle analog and digital signals and convert between the two to create mixed-signal integrated circuits. They are used when an analog signal is sensed or created and logical operations are needed to read, create, or modify that signal.
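The conversion between the two kinds of signal can be sketched as a pair of idealized functions: one that quantizes a voltage into an integer code, and one that turns a code back into a voltage. The 1.0 V reference, the 8-bit width, and the function names are assumptions made for illustration; real converters add sampling, noise, and nonlinearity.

```python
def adc(voltage, v_ref=1.0, bits=8):
    """Quantize an analog voltage in [0, v_ref] to an unsigned integer code."""
    levels = 2 ** bits
    clamped = min(max(voltage, 0.0), v_ref)
    return min(int(clamped / v_ref * levels), levels - 1)


def dac(code, v_ref=1.0, bits=8):
    """Convert an integer code back to the voltage at the bottom of its step."""
    return code / 2 ** bits * v_ref


if __name__ == "__main__":
    sensed = 0.3                 # hypothetical analog input, in volts
    code = adc(sensed)           # analog -> digital
    halved = code // 2           # a purely digital operation on the sample
    print("code:", code, "halved:", halved, "output volts:", dac(halved))
```

The round trip loses at most one quantization step, which is v_ref / 2^bits in this idealized model.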

Some of the most common uses for mixed-signal ICs are:

* SoC devices

* Data acquisition chips

* RF CMOS circuits

* Clock/timing ICs

* Switched capacitor circuits

* Electro-optical devices

* MEMS devices

Future Trends in Microchip Technology

The future of microchips looks like the past, with more capabilities in smaller sizes while constantly driving the cost down. Advances in manufacturing will also create new opportunities for better performance and new applications.

Trends that will drive electrical engineering design and simulation in the near future include:

Shift to Fabless Design and Foundries

The industry has shifted over the years to a model where companies can design their own ICs and then outsource the manufacturing to a company that just makes chips. This is called fabless design, and the contract manufacturers are called foundries. This enables companies like Apple and Qualcomm to design innovative new products without the capital investment of building their own fabrication facilities. Engineers must design to the manufacturing processes and standards of the foundry they will use.

Smaller Feature Size

Feature sizes continue to shrink, creating power and signal integrity issues. To stay competitive, electrical engineers need to design using these new capabilities, as well as leveraging simulation and design best practices to avoid issues.

Electronic Device Complexity and Combined Functionality

With time, an increasing number of designers of electronic devices are looking for greater functionality in a single chip. Internet of Things (IoT) devices, new solid-state long-term storage, and GPU chips are examples of integrated circuits that will not only add new features and capabilities in the same chip but also see more sophisticated interaction between those functions. Engineers need design and simulation tools to drive designs in the direction the industry is pulling the technology. Biomedical electronics like implanted microchips will be another area in which several capabilities are needed on a single chip.

Higher Clock Speeds and Frequencies

Increased performance demands and advances in RF technology are driving up clock speeds for digital ICs and frequencies for analog and mixed-signal chips. Both create issues with signal integrity and power management.

Greater Computer Power With Increased Energy Efficiency

The growth of data centers for high-performance computing to support trends like artificial intelligence, cryptocurrency mining, and IoT applications is driving demand for increased performance for microprocessors. These applications are pushing the industry for improvements in FPGAs, solid-state hard drives, memory, and GPUs, along with all the chips needed to connect everything at increasing data transfer speeds.

More Use Beyond Computing

The trend of increased microchip use in automotive, consumer electronics, and industrial applications will continue. Almost all products will be designed as smart devices with connectivity to broadband, sensors, and computing power — all of which need microchips.

Simulation in the Design of Microchips

The complexity and expense of microchip manufacturing make physically prototyping designs impractical. Instead, engineers use virtual prototyping through simulation to drive their design, verify the performance, and identify and solve problems before production begins. Simulation is also used to design packaging and optimize the semiconductor manufacturing machines that make the chips.

Using simulation for digital microchips begins with verifying the logical functionality of the digital design at an abstract level with RTL design. This includes a first look at power management with PowerArtist™ software. This tool can assess the power needs of a design early in the process and help drive a more power-efficient design.

Once the physical design is laid out, engineers can use RedHawk-SC™ software, the trusted industry leader for power noise and reliability for digital ICs, to assess voltage drop and electromigration in their designs.

On the analog and mixed-signal side of things, Totem™ software can be brought into the process for power integrity and reliability signoff. The industry’s trusted gold standard for electromigration multiphysics, it is certified by all major foundries down to 3 nm. It also works with PathFinder-SC™ software to calculate electrostatic discharge.

Once the design is optimized and verified, packaging engineers can use simulation to optimize the power, signal integrity, and robustness of the full microchip package. RedHawk-SC software is designed to handle large, multichip configurations, including system-in-package designs. Advanced semiconductor packaging uses 2.5D- and 3D-IC approaches to combine and connect multiple dies in the same package, and simulation with RedHawk-SC software is the primary way to verify and optimize the designs.

Once the electrical aspects of the design are resolved, packaging engineers can use tools like Mechanical™ software and the Icepak® tool for structural reliability and thermal management.

Additional Information:

What is a microchip?

A microchip -- also called a chip, computer chip or integrated circuit (IC) -- is a unit of integrated circuitry that is manufactured at a microscopic scale using a semiconductor material, such as silicon or, to a lesser degree, germanium. Electronic components, such as transistors and resistors, are etched into the material in layers, along with intricate connections that link the components together and facilitate the flow of electric signals.

Microchip components are so small they're measured in nanometers (nm). Some components are now under 10 nm, making it possible to fit billions of components on a single chip. In 2021, IBM introduced a microchip based on 2 nm technology, smaller than the width of a strand of human DNA. A nanometer is one-billionth of a meter or one-millionth of a millimeter. At that scale, it is possible to fit up to 50 billion transistors on a microchip the size of a fingernail.

How are microchips made?

Microchip manufacturers rely on silicon for their chips because it is abundant, inexpensive and easy to work with. Also, it has proven to be a reliable semiconductor in a variety of devices. However, silicon might be reaching its practical limits as microchip technologies become smaller and more components are squeezed into the microchip in an effort to meet the ever-increasing demands for greater performance and more data. Researchers are actively working on a variety of solutions that they hope will be able to carry electronics into the future.

Microchips typically include the following types of components, which can number into the millions or even billions, depending on the type and function of the microchip:

* Transistors. Transistors are active components that control, generate or amplify electric signals within the circuitry, acting as a switch or gate. Multiple transistors can be combined into a single logic gate that compares input currents and produces a single output according to the specified logic.

* Resistors. Resistors are passive components that limit or regulate the flow of electrical current or that provide a specific voltage for an active device. Resistors control the electric signals that move between transistors.

* Capacitors. Capacitors are passive components that store electricity as an electrostatic field and release electric current. Capacitors are often used along with transistors in dynamic RAM (DRAM) to help maintain stored data.

* Diodes. Diodes are specialized components with two nodes that conduct electric current in one direction only. A diode can permit or block the flow of electric current and can be used for various roles, such as switches, rectifiers, voltage regulators or signal modulators.
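As an illustration of transistors acting as switches that combine into a logic gate, here is a minimal sketch of a two-input NAND gate in the common CMOS arrangement. That arrangement is a standard textbook design, not something stated in the text above, and the model works with idealized logic levels (0 or 1) rather than real currents or voltages.

```python
def nmos(gate):
    """n-type transistor as a switch: conducts when its gate is high."""
    return gate == 1


def pmos(gate):
    """p-type transistor as a switch: conducts when its gate is low."""
    return gate == 0


def cmos_nand(a, b):
    """Two-input NAND gate modeled with four idealized transistor switches."""
    # Pull-up network: two p-type switches in parallel tie the output to supply.
    pulled_high = pmos(a) or pmos(b)
    # Pull-down network: two n-type switches in series tie the output to ground.
    pulled_low = nmos(a) and nmos(b)
    # In a well-formed CMOS gate exactly one network conducts at a time.
    assert pulled_high != pulled_low
    return 1 if pulled_high else 0


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, "->", cmos_nand(a, b))
```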

What are the types of microchips?

Microchips drive all of today's electronics. Not only do these include computers, but also smartphones, network switches, home appliances, car and aircraft components, televisions and amplifiers, internet of things devices and countless other electronic systems. Microchips generally fall into one of the following two categories:

* Logic. This type of microchip does all the heavy lifting, processing the instructions and data that are fed to the device and subsequently to the chip in that device. The most common and widely used type of logic microchip is the central processing unit (CPU). However, this category also includes more specialized chips, such as graphical processing units (GPUs) and neural net processors.

* Memory. This type of microchip stores data. Data storage is either volatile or non-volatile. Volatile memory chips require a constant source of power to retain their data. DRAM is a common example of a volatile memory chip. A non-volatile chip is one that can persist data even if the power supply is disrupted. A good example of non-volatile memory is NAND flash. Volatile memory devices tend to perform much better than non-volatile devices, although a number of efforts are underway to bridge the gap between the two, such as storage class memory.

Although many microchips focus on logic or memory only, other types of chips incorporate both, along with other capabilities. For example, system-on-a-chip (SoC) ICs are now widely used in devices such as smartphones and wearable technology and have begun making headway into the computer market, as evidenced by the Apple silicon series of chips. Another example is the application-specific IC, which can also include logic, memory and other capabilities, much like the SoC chip, except that the ASIC chip is customized for a specific purpose, such as medical equipment or an automotive component.

#38 Re: Dark Discussions at Cafe Infinity » crème de la crème » 2026-03-17 00:03:41

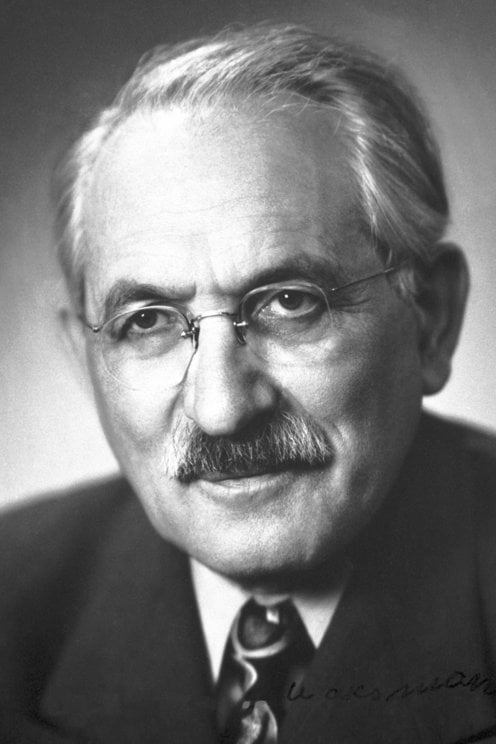

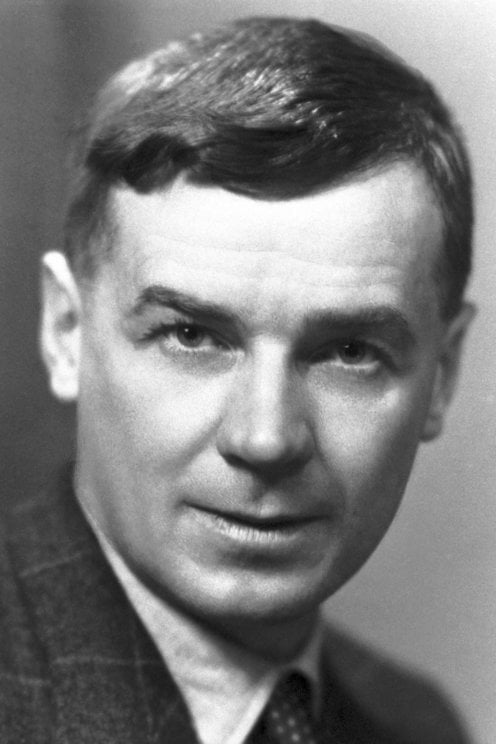

2461) Richard Laurence Millington Synge

Gist:

Work

When a drop of a liquid containing a mixture of various substances is placed on paper, the liquid begins to spread out on the paper. The various substances in the mixture spread at different speeds, however, which gives rise to marks on the paper with different colors. In the 1940s Richard Synge and Archer Martin used this and similar phenomena in gas mixtures, for example, to develop different types of chromatography—methods for separating substances in mixtures and for determining the composition of mixtures.
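One common way to put numbers on "spreading at different speeds" in paper chromatography is the retention factor (Rf): the distance a substance travels divided by the distance the liquid front travels. The measure is not mentioned in the text above, and the spot names and distances below are made up for illustration.

```python
def retention_factor(substance_distance, solvent_distance):
    """Return Rf: how far a spot moved relative to the solvent front."""
    if solvent_distance <= 0:
        raise ValueError("solvent front must have moved a positive distance")
    return substance_distance / solvent_distance


if __name__ == "__main__":
    solvent_front_cm = 8.0  # hypothetical measurement
    spots_cm = {"spot A": 2.0, "spot B": 4.0, "spot C": 6.0}  # hypothetical
    for name, distance in spots_cm.items():
        print(name, "Rf =", retention_factor(distance, solvent_front_cm))
```

Substances with clearly different Rf values end up as separate marks on the paper, which is what makes the separation usable for analysis.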

Summary

R.L.M. Synge (born Oct. 28, 1914, Liverpool, Eng.—died Aug. 18, 1994, Norwich, Norfolk) was a British biochemist who in 1952 shared the Nobel Prize for Chemistry with A.J.P. Martin for their development of partition chromatography, notably paper chromatography.

Synge studied at Winchester College and then at Cambridge, where he received his Ph.D. at Trinity College in 1941. He spent his entire professional career conducting research, initially with Martin under the auspices of the Wool Industries Research Association, Leeds (1941–43). The two men developed partition chromatography, a technique that is used to separate mixtures of closely related chemicals such as amino acids for identification and further study. Synge used paper chromatography to work out the exact structure of the simple protein molecule gramicidin S, which helped to pave the way for the English biochemist Frederick Sanger’s elucidation of the structure of the insulin molecule.

Synge did research at the Lister Institute of Preventive Medicine, London (1943–48), and at the Rowett Research Institute, near Aberdeen, Scot. (1948–67). He became a biochemist at the Food Research Institute, Norwich (1967–76), and was also an honorary professor of biological sciences at the University of East Anglia (1968–84).

Details

Richard Laurence Millington Synge (28 October 1914 – 18 August 1994) was a British biochemist, and shared the 1952 Nobel Prize in Chemistry for the invention of partition chromatography with Archer Martin.

Life

Richard Laurence Millington Synge was born in West Kirby on 28 October 1914, the son of Lawrence Millington Synge, a Liverpool stock-broker, and his wife, Katherine C. Swan.

Synge was educated at the Old Hall in Wellington, Shropshire and at Winchester College. He then studied Chemistry at Trinity College, Cambridge.

He spent his entire career in research, at the Wool Industries Research Association, Leeds (1941–1943), Lister Institute for Preventive Medicine, London (1943–1948), Rowett Research Institute, Aberdeen (1948–1967), and Food Research Institute, Norwich (1967–1976).

It was during his time in Leeds that he worked with Archer Martin, developing partition chromatography, a technique used in the separation of mixtures of similar chemicals that revolutionised analytical chemistry. Between 1942 and 1948 he studied peptides of the protein group gramicidin, work later used by Frederick Sanger in determining the structure of insulin. In March 1950 he was elected a Fellow of the Royal Society for which his candidature citation read:

Distinguished as a biochemist. Was the first to show the possibility of using counter-current liquid-liquid extraction in the separation of N-acetylamino acids. In collaboration with A.J.P. Martin this led to the development of partition chromatography, which they have applied with conspicuous success in problems related to the composition and structure of proteins, particularly wool keratin. Synge's recent work on the composition and structure of gramicidins is outstanding and illustrates vividly the great advances in technique for which he and Martin are responsible.

— "Library and Archive catalogue". Royal Society. Archived from the original on 27 July 2011. Retrieved 24 October 2010.

In 1963 he was elected a Fellow of the Royal Society of Edinburgh. His proposers were Magnus Pyke, Andrew Phillipson, Sir David Cuthbertson and John Andrew Crichton.

He was for several years the treasurer of the Chemical Information Group of the Royal Society of Chemistry, and was an honorary Professor in Biological Sciences at the University of East Anglia from 1968 to 1984. He was awarded an honorary Doctor of Science (ScD) from the University of East Anglia in 1977, and an honorary doctorate from the Faculty of Mathematics and Science at Uppsala University, Sweden in 1980.

Personal life

In 1943 Synge married Ann Davies Stephen (1916–1997). Ann Stephen was the daughter of psychologist Karin Stephen and psychoanalyst Adrian Stephen. Ann's sister Judith (1918–1972) was married to documentary artist and photographer Nigel Henderson.

#39 Jokes » Nut Jokes - VI » 2026-03-17 00:03:24

- Jai Ganesh

- Replies: 0

Q: What did the boy squirrel say to the girl squirrel?

A: Want these nuts?

* * *

Q: What do you call an animal that solves crimes?

A: Squirrel-lock Holmes.

* * *

Q: What do squirrels drink?

A: Nut-Tea.

* * *

Psychologist: What brings you here today?

Squirrel: I realized I am what I eat.....Nuts.

* * *

#40 Dark Discussions at Cafe Infinity » Comfort Quotes - II » 2026-03-17 00:02:57

- Jai Ganesh

- Replies: 0

Comfort Quotes - II

1. There is nothing like staying at home for real comfort. - Jane Austen

2. The lust for comfort, that stealthy thing that enters the house a guest, and then becomes a host, and then a master. - Khalil Gibran

3. Prosperity is not without many fears and distastes; adversity not without many comforts and hopes. - Francis Bacon

4. Life is made up, not of great sacrifices or duties, but of little things, in which smiles and kindness, and small obligations given habitually, are what preserve the heart and secure comfort. - Humphry Davy

5. In poverty and other misfortunes of life, true friends are a sure refuge. The young they keep out of mischief; to the old they are a comfort and aid in their weakness, and those in the prime of life they incite to noble deeds. - Aristotle

6. A scholar who cherishes the love of comfort is not fit to be deemed a scholar. - Lao Tzu

7. Physical comforts cannot subdue mental suffering, and if we look closely, we can see that those who have many possessions are not necessarily happy. In fact, being wealthy often brings even more anxiety. - Dalai Lama

8. If a nation values anything more than freedom, it will lose its freedom, and the irony of it is that if it is comfort or money that it values more, it will lose that too. - W. Somerset Maugham.

#41 Re: Jai Ganesh's Puzzles » General Quiz » 2026-03-16 18:54:54

Hi,

#10795. What does the term in Geography Desertification mean?

#10796. What does the term in Geography Desire path mean?

#42 Re: Jai Ganesh's Puzzles » English language puzzles » 2026-03-16 18:39:37

Hi,

#6001. What does the adjective horrendous mean?

#6002. What does the noun hornet mean?

#43 Re: Jai Ganesh's Puzzles » Doc, Doc! » 2026-03-16 18:20:09

Hi,

#2597. What does the medical term Hyperplasia mean?

#44 Re: Jai Ganesh's Puzzles » 10 second questions » 2026-03-16 18:08:24

Hi,

#9883.

#45 Re: Jai Ganesh's Puzzles » Oral puzzles » 2026-03-16 17:44:23

Hi,

#6376.

#46 This is Cool » Nitrogen Dioxide » 2026-03-16 17:11:47

- Jai Ganesh

- Replies: 0

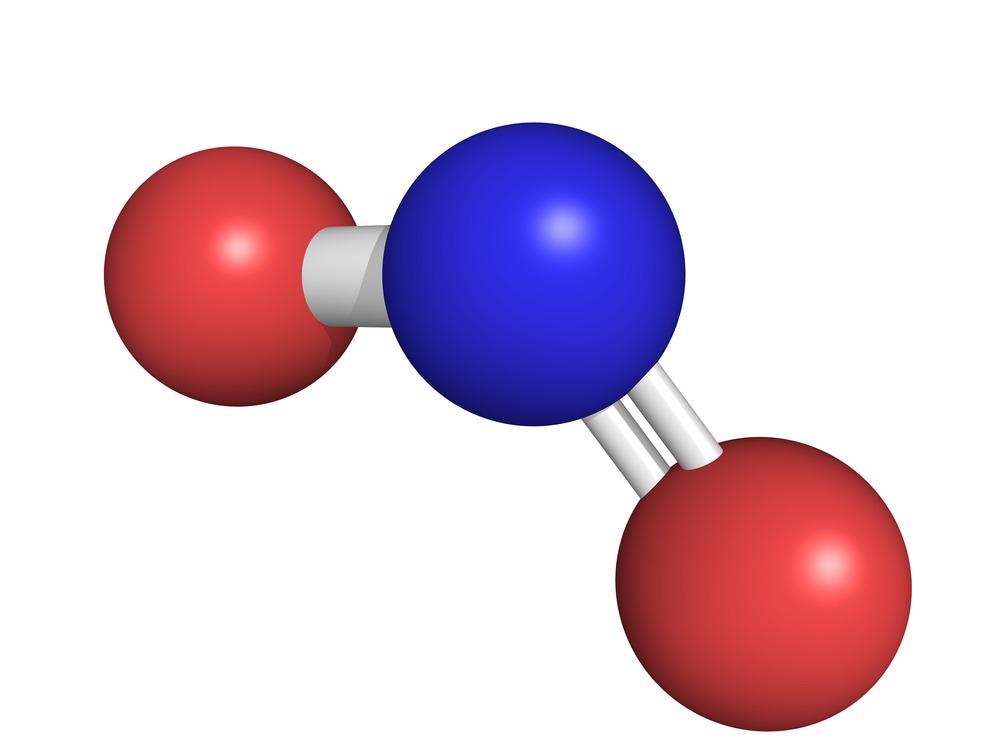

Nitrogen Dioxide

Gist

Nitrogen dioxide is a highly reactive, reddish-brown toxic gas with a pungent odor, acting as a major air pollutant and strong oxidant. Primarily produced by burning fossil fuels in vehicles, power plants, and industrial equipment, it poses severe respiratory health risks, including asthma, and contributes to smog, acid rain, and ozone formation.

NO2 is composed of one atom of nitrogen and two atoms of oxygen, and is a gas at ambient temperatures. It has a pungent smell, and is brownish red in color.

Summary

Nitrogen dioxide is a chemical compound with the formula NO2. One of several nitrogen oxides, nitrogen dioxide is a reddish-brown gas. It is a paramagnetic, bent molecule with C2v point group symmetry. Industrially, NO2 is an intermediate in the synthesis of nitric acid, millions of tons of which are produced each year, primarily for the production of fertilizers.

Nitrogen dioxide is poisonous and can be fatal if inhaled in large quantities. Cooking with a gas stove produces nitrogen dioxide, which worsens indoor air quality. Combustion of gas can increase nitrogen dioxide concentrations throughout the home, which is linked to respiratory issues and diseases. The LC50 (median lethal concentration) for humans has been estimated to be 174 ppm for a 1-hour exposure. Nitrogen dioxide is also included in the NOx family of atmospheric pollutants.
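To put the 174 ppm figure into mass units, here is a minimal Python sketch of the usual ppm-to-mg/m^3 conversion. It is only an illustration: the ideal-gas assumption, the reference conditions (25 °C, 1 atm) and the function names are my own choices, not something stated in the post.

```python
# Sketch: convert a gas concentration from ppm (by volume) to mg/m^3.
# Assumptions (not from the post): ideal-gas behaviour, 25 degC, 1 atm.

R = 8.314462618  # molar gas constant, J/(mol*K)
ATOMIC_MASS = {"N": 14.007, "O": 15.999}  # standard atomic weights, g/mol


def molar_mass_no2() -> float:
    """Molar mass of NO2: one nitrogen atom plus two oxygen atoms."""
    return ATOMIC_MASS["N"] + 2 * ATOMIC_MASS["O"]


def ppm_to_mg_per_m3(ppm: float, molar_mass_g_mol: float,
                     temp_k: float = 298.15,
                     pressure_pa: float = 101325.0) -> float:
    """mg/m^3 = ppm * M / Vm, with Vm the ideal-gas molar volume in L/mol."""
    molar_volume_l = R * temp_k / pressure_pa * 1000.0
    return ppm * molar_mass_g_mol / molar_volume_l


if __name__ == "__main__":
    print(f"{ppm_to_mg_per_m3(174, molar_mass_no2()):.0f} mg/m^3")
```

Under these assumed conditions, 174 ppm works out to roughly 327 mg/m^3. A different reference temperature or pressure shifts the result slightly.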

Properties

Nitrogen dioxide is a reddish-brown gas with a pungent, acrid odor above 21.2 °C (70.2 °F; 294.3 K) and becomes a yellowish-brown liquid below 21.2 °C (70.2 °F; 294.3 K). It forms an equilibrium with its dimer, dinitrogen tetroxide (N2O4), and converts almost entirely to N2O4 below −11.2 °C (11.8 °F; 261.9 K).
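The Fahrenheit and Kelvin values quoted above can be re-derived from the Celsius figures. A small Python sketch (the helper names are hypothetical, chosen just for this illustration):

```python
# Sketch: re-derive the Fahrenheit and Kelvin values from the Celsius figures.

def c_to_f(celsius: float) -> float:
    """Celsius to Fahrenheit."""
    return celsius * 9 / 5 + 32


def c_to_k(celsius: float) -> float:
    """Celsius to Kelvin."""
    return celsius + 273.15


if __name__ == "__main__":
    # 21.2 degC: boiling point; -11.2 degC: near-complete conversion to N2O4
    for c in (21.2, -11.2):
        print(f"{c} degC = {c_to_f(c):.2f} degF = {c_to_k(c):.2f} K")
```

The unrounded results (70.16 °F and 294.35 K; 11.84 °F and 261.95 K) agree with the figures given in the text to within rounding.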

The bond length between the nitrogen atom and the oxygen atom is 119.7 pm. This bond length is consistent with a bond order between one and two. Nitrogen dioxide has a doublet ground state.

Details

Nitrogen dioxide, or NO2, is a gaseous air pollutant composed of nitrogen and oxygen and is one of a group of related gases called nitrogen oxides, or NOx. Nitrogen dioxide forms when fossil fuels such as coal, oil, methane gas (natural gas) or diesel are burned at high temperatures. NO2 and other nitrogen oxides in the outdoor air contribute to particle pollution and to the chemical reactions that make ozone. It is one of six widespread air pollutants for which there are national air quality standards to limit their levels in the outdoor air. NO2 can also form indoors when fuels like wood or gas are burned.

What Are the Health Effects of Nitrogen Dioxide Pollution?

Nitrogen dioxide causes a range of harmful effects on the lungs, including:

* Increased inflammation of the airways;

* Worsened cough and wheezing;

* Reduced lung function;

* Increased asthma attacks; and

* Greater likelihood of emergency department and hospital admissions.

Scientific evidence suggests that exposure to NO2 could likely cause asthma in children.

A 2022 review of multiple studies found that elevated levels of NO2, as well as elevated particulate matter and sulfur dioxide, were strongly associated with heart and lung harm, affected pregnancy and birth outcomes, and were likely associated with increased risk of kidney and neurological harm, autoimmune disorders and cancer.

What Are the Sources of Nitrogen Dioxide Emissions?

As of 2020, human-made sources in the U.S. emit 7.64 million short tons of nitrogen oxides per year (down from 15 million short tons per year in 2011), mainly from burning fuels. Trucks, buses, and cars are the largest sources of NO2 emissions, followed by diesel-powered non-road equipment, industrial processes such as oil and gas production, industrial boilers and other movable engines, and coal-fired power plants. Emissions of nitrogen dioxide will decline as cleanup of many of these sources continues in future years.
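For readers who want the emission figures as a percentage change or in metric tonnes, a short Python sketch follows. The two input numbers come from the paragraph above; the function names are hypothetical, and the only outside fact used is the definition of the short ton (2000 lb, i.e. 907.18474 kg).

```python
# Sketch: percentage change and unit conversion for the U.S. NOx figures.

SHORT_TON_KG = 907.18474  # 1 short ton = 2000 lb, exact by definition


def percent_change(old: float, new: float) -> float:
    """Relative change from old to new, in percent (negative = decline)."""
    return (new - old) / old * 100.0


def short_tons_to_tonnes(short_tons: float) -> float:
    """Convert short tons to metric tonnes (1 tonne = 1000 kg)."""
    return short_tons * SHORT_TON_KG / 1000.0


if __name__ == "__main__":
    old, new = 15.0, 7.64  # million short tons per year (2011 and 2020)
    print(f"change: {percent_change(old, new):.1f} %")
    print(f"2020 emissions: {short_tons_to_tonnes(new):.2f} million tonnes")
```

This gives a decline of about 49 percent, with the 2020 figure equivalent to about 6.93 million metric tonnes.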

Where Do High NO2 Concentrations Occur?

Monitors show the highest concentrations of outdoor NO2 in large urban regions such as the Northeast corridor, Chicago and Los Angeles. Levels are higher on or near heavily traveled roadways.

It is important to note that NO2 and other nitrogen oxides are also produced from burning natural gas (methane), both outdoors and indoors. Outdoors, this can include emissions from gas-fired power plants and from facilities that extract, process or transport oil and gas if they burn it in flares or to power equipment. Indoors, appliances such as stoves, dryers and space heaters that burn natural gas, liquefied petroleum gas (or LPG, which includes propane and butane) and kerosene can produce substantial amounts of nitrogen dioxide. If those appliances are not fully vented to the outside, NO2 can build up to unhealthy levels indoors.

Who Is at Risk?

While everyone is at risk from health impacts of nitrogen dioxide pollution, those who live near the emission sources are at higher risk. Other vulnerable subpopulations at higher risk from nitrogen dioxide exposure include:

* Individuals who are pregnant;

* Infants, children and teens;

* Older adults (>65 years of age);

* People with pre-existing medical conditions such as asthma, chronic obstructive pulmonary disease (COPD), cardiovascular disease, diabetes and lung cancer;

* Current or former smokers;

* People with low socioeconomic status; and

* People of color.

What Can We Do about It?

The good news is that for much of the nation, the outdoor air has much lower levels of nitrogen dioxide now than in previous decades. Under the federal Clean Air Act, more protective standards nationwide have helped drive down nitrogen dioxide emissions. Power plants, industrial sites and on-road vehicles are cleaner than they used to be, which has driven nationwide improvement in air quality. However, far too many people still breathe in unhealthy levels of nitrogen dioxide pollution.

Individuals can take steps to protect themselves on days with unhealthy levels of air pollutants and also ask policymakers at all levels of government to continue to require cleanup of air pollution.

Additional Information:

What is Nitrogen dioxide?

NO2 is a highly poisonous gas with the chemical name Nitrogen dioxide.

It is also called Nitrogen (IV) oxide or Deutoxide of nitrogen. It is one of the major atmospheric pollutants that absorb UV light and stop it from reaching the earth’s surface.

Nitrogen (IV) oxide is a yellowish-brown liquid in its compressed form or a reddish-brown gas. Its vapours are heavier than air.

Nitrogen dioxide Sources – NO2

Over 98 percent of man-made NOx emissions result from combustion, with the majority due to stationary sources. Combustion-generated oxides of nitrogen are emitted predominantly as nitric oxide, NO, a relatively harmless gas, but one which is rapidly converted in the atmosphere to the toxic nitrogen dioxide.

Nitrogen dioxide is also a precursor in the formation of nitrate aerosols and nitrosamines, the health effects of which are under study. Because of the quantity generated and their potential for widespread adverse effects on public health and welfare, nitrogen oxides are among the atmospheric pollutants for which standards and regulatory controls have been established both by the U.S. c

NO2 Uses (Nitrogen dioxide)

* Nitrogen dioxide is used as an intermediate in the production of nitric acid.

* Used in the manufacturing of oxidized cellulose compounds.

* Used as a catalyst.

* Used as an intermediate in the production of sulphuric acid.

* Used as an oxidizer for rocket fuels.

* Used as a nitrating agent.

* Used to bleach flour.

* Used as an oxidizing agent.

Health Hazards

Severe exposure to Deutoxide of nitrogen can be fatal. On contact, it causes a burning sensation in the eyes and skin. In liquid form, it causes frostbite. It is reported to react with the blood to form methemoglobin. When heated to decomposition, it releases toxic fumes of nitrogen oxides.

Nitrogen dioxide is an irritant gas which causes inflammation of the airways at high concentrations. NO2 mainly affects respiratory conditions which cause high levels of airway inflammation. Long-term exposure will decrease lung capacity, increase the probability of respiratory problems and increase allergy response. NO2 also contributes to the production of small particles (PM) and ozone at ground level, both associated with harmful effects on the environment.

Frequently Asked Questions – FAQs

Q1: What is nitrogen dioxide used for?

A1: Nitrogen dioxide (NO2) has been used as a catalyst in some oxidation reactions; as an inhibitor to prevent the polymerization of acrylates during distillation; as a nitrating agent for organic compounds; as an oxidizing agent; as an oxidizer for rocket fuels; and as a flour bleaching agent.

Q2: What does nitrogen dioxide do?

A2: The key consequence of breathing in elevated nitrogen dioxide levels is an increased risk of respiratory disorders. Nitrogen dioxide inflames the lining of the lungs and can decrease immunity to lung infections. This can lead to wheezing, coughing, colds, pneumonia, and bronchitis.

Q3: Does nitrogen dioxide cause global warming?

A3: Nitric oxide and nitrogen dioxide are the two most toxic and dangerous nitrogen oxides. Nitrous oxide, often referred to as laughing gas, is a greenhouse gas which contributes to global warming.

Q4: What are the main sources of nitrogen oxides?

A4: Natural sources include volcanoes, rivers, biological decay and lightning. Every year human activities add 24 million tons of nitrogen oxides to our atmosphere.

Q5: Is nitrogen dioxide heavier than air?

A5: At high concentrations, nitric oxide is quickly oxidized in air to produce nitrogen dioxide. Nitrogen dioxide is heavier than air, so exposure can result in asphyxiation in poorly ventilated, sealed, or low-lying areas. Both nitrogen dioxide and nitric oxide are gases at room temperature.

Q6: What is nitrogen dioxide made from?