Math Is Fun Forum


#1 Re: This is Cool » Miscellany » Today 00:06:38

2522) Automobile

Gist

An automobile is a wheeled, self-propelled motor vehicle designed primarily for transporting passengers on roads, typically featuring four wheels and an engine. Developed in the late 19th century, these vehicles have evolved from early internal combustion models to include modern electric powertrains. There are over 1.6 billion cars in use worldwide, profoundly influencing global transportation and industry.

An automobile is a self-propelled, wheeled motor vehicle primarily designed for transporting people on roads, commonly featuring four wheels and powered by an internal combustion engine or electric motor, though the term also covers vehicles like trucks and motorcycles. Essentially, it's a "self-moving" vehicle, combining the Greek auto (self) and Latin mobilis (movable).

Summary

A car, or an automobile, is a motor vehicle with wheels. Most definitions of cars state that they run primarily on roads, seat 1-8 people, have four wheels, and mainly transport people rather than cargo. There are over 1.6 billion cars in use worldwide as of 2025.

The French inventor Nicolas-Joseph Cugnot built the first steam-powered road vehicle in 1769, while the Swiss inventor François Isaac de Rivaz designed and constructed the first internal combustion-powered automobile in 1808. The modern car—a practical, marketable automobile for everyday use—was invented in 1886, when the German inventor Carl Benz patented his Benz Patent-Motorwagen. Commercial cars became widely available during the 20th century. The 1901 Oldsmobile Curved Dash and the 1908 Ford Model T, both American cars, are widely considered the first mass-produced and mass-affordable cars, respectively. Cars were rapidly adopted in the US, where they replaced horse-drawn carriages. In Europe and other parts of the world, demand for automobiles did not increase until after World War II. In the 21st century, car usage is still increasing rapidly, especially in China, India, and other newly industrialised countries.

Cars have controls for driving, parking, passenger comfort, and a variety of lamps. Over the decades, additional features and controls have been added to vehicles, making them progressively more complex. These include rear-reversing cameras, air conditioning, navigation systems, and in-car entertainment. Most cars in use in the early 2020s are propelled by an internal combustion engine, fueled by the combustion of fossil fuels. Electric cars, which were invented early in the history of the car, became commercially available in the 2000s and widespread in the 2020s. The transition from fossil-fuel-powered cars to electric cars is a central feature of most climate change mitigation scenarios.

There are costs and benefits to car use. The costs to the individual include acquiring the vehicle, interest payments (if the car is financed), repairs and maintenance, fuel, depreciation, driving time, parking fees, taxes, and insurance. The costs to society include resources used to produce cars and fuel, maintaining roads, land-use, road congestion, air pollution, noise pollution, public health, and disposing of the vehicle at the end of its life. Traffic collisions are the largest cause of injury-related deaths worldwide. Personal benefits include on-demand transportation, mobility, independence, and convenience. Societal benefits include economic benefits, such as job and wealth creation from the automotive industry, transportation provision, and societal wellbeing from leisure and travel opportunities. People's ability to move flexibly from place to place has far-reaching implications for society.

Details

An automobile is a usually four-wheeled vehicle designed primarily for passenger transportation and commonly propelled by an internal-combustion engine using a volatile fuel.

Automotive design

The modern automobile is a complex technical system employing subsystems with specific design functions. Some of these consist of thousands of component parts that have evolved from breakthroughs in existing technology or from new technologies such as electronic computers, high-strength plastics, and new alloys of steel and nonferrous metals. Some subsystems have come about as a result of factors such as air pollution, safety legislation, and competition between manufacturers throughout the world.

Passenger cars have emerged as the primary means of family transportation, with an estimated 1.4 billion in operation worldwide. About one-quarter of these are in the United States, where more than three trillion miles (almost five trillion kilometres) are traveled each year. In recent years, Americans have been offered hundreds of different models, about half of them from foreign manufacturers. To capitalize on their proprietary technological advances, manufacturers introduce new designs ever more frequently. With some 70 million new units built each year worldwide, manufacturers have been able to split the market into many very small segments that nonetheless remain profitable.

New technical developments are recognized to be the key to successful competition. Research and development engineers and scientists have been employed by all automobile manufacturers and suppliers to improve the body, chassis, engine, drivetrain, control systems, safety systems, and emission-control systems.

These outstanding technical advancements are not made without economic consequences. According to a study by Ward’s Communications Incorporated, the average cost for a new American car increased $4,700 (in terms of the value of the dollar in 2000) between 1980 and 2001 because of mandated safety and emission-control performance requirements (such as the addition of air bags and catalytic converters). New requirements continued to be implemented in subsequent years. The addition of computer technology was another factor driving up car prices, which increased by 29 percent between 2009 and 2019. This is in addition to the consumer costs associated with engineering improvements in fuel economy, which may be offset by reduced fuel purchases.

Vehicle design depends to a large extent on its intended use. Automobiles for off-road use must be durable, simple systems with high resistance to severe overloads and extremes in operating conditions. Conversely, products that are intended for high-speed, limited-access road systems require more passenger comfort options, increased engine performance, and optimized high-speed handling and vehicle stability. Stability depends principally on the distribution of weight between the front and rear wheels, the height of the centre of gravity and its position relative to the aerodynamic centre of pressure of the vehicle, suspension characteristics, and the selection of which wheels are used for propulsion. Weight distribution depends principally on the location and size of the engine. The common practice of front-mounted engines exploits the stability that is more readily achieved with this layout. The development of aluminum engines and new manufacturing processes has, however, made it possible to locate the engine at the rear without necessarily compromising stability.

Body

Automotive body designs are frequently categorized according to the number of doors, the arrangement of seats, and the roof structure. Automobile roofs are conventionally supported by pillars on each side of the body. Convertible models with retractable fabric tops rely on the pillar at the side of the windshield for upper body strength, as convertible mechanisms and glass areas are essentially nonstructural. Glass areas have been increased for improved visibility and for aesthetic reasons.

The high cost of new factory tools makes it impractical for manufacturers to produce totally new designs every year. Completely new designs usually have been programmed on three- to six-year cycles with generally minor refinements appearing during the cycle. In the past, as many as four years of planning and new tool purchasing were needed for a completely new design. Computer-aided design (CAD), testing by use of computer simulations, and computer-aided manufacturing (CAM) techniques may now be used to reduce this time requirement by 50 percent or more.

Automotive bodies are generally formed out of sheet steel. The steel is alloyed with various elements to improve its ability to be formed into deeper depressions without wrinkling or tearing in manufacturing presses. Steel is used because of its general availability, low cost, and good workability. For certain applications, however, other materials, such as aluminum, fibreglass, and carbon-fibre reinforced plastic, are used because of their special properties. Polyamide, polyester, polystyrene, polypropylene, and ethylene plastics have been formulated for greater toughness, dent resistance, and resistance to brittle deformation. These materials are used for body panels. Tooling for plastic components generally costs less and requires less time to develop than that for steel components and therefore may be changed by designers at a lower cost.

To protect bodies from corrosive elements and to maintain their strength and appearance, special priming and painting processes are used. Bodies are first dipped in cleaning baths to remove oil and other foreign matter. They then go through a succession of dip and spray cycles. Enamel and acrylic lacquer are both in common use. Electrodeposition of the sprayed paint, a process in which the paint spray is given an electrostatic charge and then attracted to the surface by a high voltage, helps assure that an even coat is applied and that hard-to-reach areas are covered. Ovens with conveyor lines are used to speed the drying process in the factory. Galvanized steel with a protective zinc coating and corrosion-resistant stainless steel are used in body areas that are more likely to corrode.

Chassis

In most passenger cars through the middle of the 20th century, a pressed-steel frame (the vehicle’s chassis) formed a skeleton on which the engine, wheels, axle assemblies, transmission, steering mechanism, brakes, and suspension members were mounted. The body was flexibly bolted to the chassis during a manufacturing process typically referred to as body-on-frame construction. This process is used today for heavy-duty vehicles, such as trucks, which benefit from a strong central frame that withstands the forces involved in such activities as carrying freight. The flexible combination of body and frame also absorbs the movements of the engine and axle.

In modern passenger-car designs, the chassis frame and the body are combined into a single structural element. In this arrangement, called unit-body (or unibody) construction, the steel body shell is reinforced with braces that make it rigid enough to resist the forces that are applied to it. Separate frames or partial “stub” frames have been used for some cars to achieve better noise-isolation characteristics. The heavier-gauge steel present in modern component designs also tends to absorb energy during impacts and limit intrusion in accidents.

Engine

A wide range of engines has been used experimentally and in automotive production. The most successful for automobiles has been the gasoline-fueled reciprocating-piston internal-combustion engine, operating on a four-stroke cycle, while diesel engines are widely used for trucks and buses. The gasoline engine was originally selected for automobiles because it could operate more flexibly over a wide range of speeds, and the power developed for a given weight engine was reasonable; it could be produced by economical mass-production methods; and it used a readily available, moderately priced fuel. Reliability, compact size, exhaust emissions, and range of operation later became important factors.

There has been an ongoing reassessment of these priorities with new emphasis on the reduction of greenhouse gases or pollution-producing characteristics of automotive power systems. This has created new interest in alternate power sources and internal-combustion engine refinements that previously were not close to being economically feasible. Several limited-production battery-powered electric vehicles are marketed today. In the past they had not proved to be competitive, because of costs and operating characteristics. The gasoline engine, with new emission-control devices to improve emission performance, has been challenged in recent years by hybrid power systems that combine gasoline or diesel engines with battery systems and electric motors. Such designs are, however, more complex and therefore more costly.

The evolution of higher-performance engines in the United States led the industry away from long, straight engine cylinder layouts to compact six- and eight-cylinder V-type layouts for larger cars (with horsepower ratings up to about 350). Smaller cars depend on smaller four-cylinder engines. European automobile engines were of a much wider variety, ranging from 1 to 12 cylinders, with corresponding differences in overall size, weight, piston displacement, and cylinder bores. A majority of the models had four cylinders and horsepower ratings up to 120. Most engines had straight or in-line cylinders. There were, however, several V-type models and horizontally opposed two- and four-cylinder makes. Overhead camshafts were frequently employed. The smaller engines were commonly air-cooled and located at the rear of the vehicle; compression ratios were relatively low. Increased interest in improved fuel economy brought a return to smaller V-6 and four-cylinder layouts, with as many as five valves per cylinder to improve efficiency. Variable valve timing to improve performance and lower emissions has been achieved by manufacturers in all parts of the world. Electronic controls automatically select the better of two profiles on the same cam for higher efficiency when engine speeds and loads change.

Fuel

Specially formulated gasoline is essentially the only fuel used for automobile operation, although diesel fuels are used for many trucks and buses and a few automobiles, and compressed liquefied hydrogen has been used experimentally. The most important requirements of a fuel for automobile use are proper volatility, sufficient antiknock quality, and freedom from polluting by-products of combustion. The volatility is reformulated seasonally by refiners so that sufficient gasoline vaporizes, even in extremely cold weather, to permit easy engine starting. Antiknock quality is rated by the octane number of the gasoline. The octane number requirement of an automobile engine depends primarily on the compression ratio of the engine but is also affected by combustion-chamber design, the maintenance condition of engine systems, and chamber-wall deposits. In the 21st century regular gasoline carried an octane rating of 87 and high-test in the neighbourhood of 93.

Automobile manufacturers have lobbied for regulations that require the refinement of cleaner-burning gasolines, which permit emission-control devices to work at higher efficiencies. Such gasoline was first available at some service stations in California, and from 2017 the primary importers and refiners of gasoline throughout the United States were required to remove sulfur particles from fuel to an average level of 10 parts per million (ppm).

Vehicle fleets fueled by natural gas have been in operation for several years. Carbon monoxide and particulate emissions are reduced by 65 to 90 percent. Natural-gas fuel tanks must be four times larger than gasoline tanks for equivalent vehicles to have the same driving range. This compromises cargo capacity.

Ethanol (ethyl alcohol) is often blended with gasoline (15 parts ethanol to 85 parts gasoline) to raise its octane rating, which results in a smoother-running engine. Ethanol, however, has a lower energy density than gasoline, which results in decreased range per tankful.

Lubrication

All moving parts of an automobile require lubrication. Without it, friction would increase power consumption and damage the parts. The lubricant also serves as a coolant, a noise-reducing cushion, and a sealant between engine piston rings and cylinder walls. The engine lubrication system incorporates a gear-type pump that delivers filtered oil under pressure to a system of drilled passages leading to various bearings. Oil spray also lubricates the cams and valve lifters.

Wheel bearings and universal joints require a fairly stiff grease; other chassis joints require a soft grease that can be injected by pressure guns. Hydraulic transmissions require a special grade of light hydraulic fluid, and manually shifted transmissions use a heavier gear oil similar to that for rear axles to resist heavy loads on the gear teeth. Gears and bearings in lightly loaded components, such as generators and window regulators, are fabricated from self-lubricating plastic materials. Hydraulic fluid is also used in other vehicle systems in conjunction with small electric pumps and motors.

Cooling system

Almost all automobiles employ liquid cooling systems for their engines. A typical automotive cooling system comprises (1) a series of channels cast into the engine block and cylinder head, surrounding the combustion chambers with circulating water or other coolant to carry away excessive heat, (2) a radiator, consisting of many small tubes equipped with a honeycomb of fins to radiate heat rapidly, which receives and cools hot liquid from the engine, (3) a centrifugal-type water pump with which to circulate coolant, (4) a thermostat, which maintains constant temperature by automatically varying the amount of coolant passing into the radiator, and (5) a fan, which draws fresh air through the radiator.

For operation at temperatures below 0 °C (32 °F), it is necessary to prevent the coolant from freezing. This is usually done by adding some compound, such as ethylene glycol, to depress the freezing point of the coolant. By varying the amount of additive, it is possible to protect against freezing of the coolant down to any minimum temperature normally encountered. Coolants contain corrosion inhibitors designed to make it necessary to drain and refill the cooling system only every few years.

Air-cooled cylinders operate at higher, more efficient temperatures, and air cooling offers the important advantage of eliminating not only freezing and boiling of the coolant at temperature extremes but also corrosion damage to the cooling system. Control of engine temperature is more difficult, however, and high-temperature-resistant ceramic parts are required when design operating temperatures are significantly increased.

Pressurized cooling systems have been used to increase effective operating temperatures. Partially sealed systems using coolant reservoirs for coolant expansion if the engine overheats were introduced in the early 1970s. Specially formulated coolants that do not deteriorate over time eliminate the need for annual replacement.

Electrical system

The electrical system comprises a storage battery, generator, starting (cranking) motor, lighting system, ignition system, and various accessories and controls. Originally, the electrical system of the automobile was limited to the ignition equipment. With the advent of the electric starter on a 1912 Cadillac model, electric lights and horns began to replace the kerosene and acetylene lights and the bulb horns. Electrification was rapid and complete, and, by 1930, 6-volt systems were standard everywhere.

Increased engine speeds and higher cylinder pressures made it increasingly difficult to meet high ignition voltage requirements. The larger engines required higher cranking torque. Additional electrically operated features—such as radios, window regulators, and multispeed windshield wipers—also added to system requirements. To meet these needs, 12-volt systems replaced the 6-volt systems in the late 1950s around the world.

The ignition system provides the spark to ignite the air-fuel mixture in the cylinders of the engine. The system consists of the spark plugs, coil, distributor, and battery. In order to jump the gap between the electrodes of the spark plugs, the 12-volt potential of the electrical system must be stepped up to about 20,000 volts. This is done by a circuit that starts with the battery, one side of which is grounded on the chassis and leads through the ignition switch to the primary winding of the ignition coil and back to the ground through an interrupter switch. Interrupting the primary circuit induces a high voltage across the secondary terminal of the coil. The high-voltage secondary terminal of the coil leads to a distributor that acts as a rotary switch, alternately connecting the coil to each of the wires leading to the spark plugs.

Solid-state or transistorized ignition systems were introduced in the 1970s. These distributor systems provided increased durability by eliminating the frictional contacts between breaker points and distributor cams. The breaker point was replaced by a revolving magnetic-pulse generator in which alternating-current pulses trigger the high voltage needed for ignition by means of an amplifier electronic circuit. Changes in engine ignition timing are made by vacuum or electronic control unit (microprocessor) connections to the distributor.

The source of energy for the various electrical devices of the automobile is a generator, or alternator, that is belt-driven from the engine crankshaft. The design is usually an alternating-current type with built-in rectifiers and a voltage regulator to match the generator output to the electric load and also to the charging requirements of the battery, regardless of engine speed.

A lead-acid battery serves as a reservoir to store excess output of the generator. This provides energy for the starting motor and power for operating other electric devices when the engine is not running or when the generator speed is not sufficiently high for the load.

The starting motor drives a small spur gear so arranged that it automatically moves in to mesh with gear teeth on the rim of the flywheel as the starting-motor armature begins to turn. When the engine starts, the gear is disengaged, thus preventing damage to the starting motor from overspeeding. The starting motor is designed for high current consumption and delivers considerable power for its size for a limited time.

Headlights must satisfactorily illuminate the highway ahead of the automobile for driving at night or in inclement weather without temporarily blinding approaching drivers. This was achieved in modern cars with double-filament bulbs with a high and a low beam, called sealed-beam units. Introduced in 1940, these bulbs found widespread use following World War II. Such units could have only one filament at the focal point of the reflector. Because of the greater illumination required for high-speed driving with the high beam, the lower beam filament was placed off centre, with a resulting decrease in lighting effectiveness. Separate lamps for these functions can also be used to improve illumination effectiveness.

Dimming is automatically achieved on some cars by means of a photocell-controlled switch in the lamp circuit that is triggered by the lights of an oncoming car. Lamp clusters behind aerodynamic plastic covers permitted significant front-end drag reduction and improved fuel economy. In this arrangement, steerable headlights became possible with an electric motor to swivel the lamp assembly in response to steering wheel position. The regulations of various governments dictate brightness and field of view requirements for vehicle lights.

Signal lamps and other special-purpose lights have increased in usage since the 1960s. Amber-coloured front and red rear signal lights are flashed as a turn indication; all these lights are flashed simultaneously in the “flasher” (hazard) system for use when a car is parked along a roadway or is traveling at a low speed on a high-speed highway. Marker lights that are visible from the front, side, and rear also are widely required by law. Red-coloured rear signals are used to denote braking, and cornering lamps, in connection with turning, provide extra illumination in the direction of an intended turn. Backup lights provide illumination to the rear and warn anyone behind the vehicle when the driver is backing up. High-voltage light-emitting diodes (LEDs) have been developed for various signal and lighting applications.

Transmission

The gasoline engine must be disconnected from the driving wheels when it is started and when idling. This characteristic necessitates some type of unloading and engaging device to permit gradual application of load to the engine after it has been started. The torque, or turning effort, that the engine is capable of producing is low at low crankshaft speeds, increasing to a maximum at some fairly high speed representing the maximum, or rated, horsepower.

The efficiency of an automobile engine is highest when the load on the engine is high and the throttle is nearly wide open. At moderate speeds on level pavement, the power required to propel an automobile is only a fraction of this. Under normal driving conditions at constant moderate speed, the engine may operate at an uneconomically light load unless some means is provided to change its speed and power output.

The transmission is such a speed-changing device. Installed in the power train between the engine and the driving wheels, it permits the engine to operate at a higher speed when its full power is needed and to slow down to a more economical speed when less power is needed. Under some conditions, as in starting a stationary vehicle or in ascending steep grades, the torque of the engine is insufficient, and amplification is needed. Most devices employed to change the ratio of the speed of the engine to the speed of the driving wheels multiply the engine torque by the same factor by which the engine speed is increased.
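The trade-off between speed and torque described above can be sketched numerically. This is only a minimal illustration, assuming an ideal gear set with no friction losses; the function name and the sample figures are hypothetical and do not come from the text.

```python
def through_gearing(engine_rpm, engine_torque, ratio):
    """Return (output_rpm, output_torque) for an ideal, lossless gear set.

    ratio is engine speed divided by output speed, so ratio > 1 is a
    reduction: the output turns slower but with proportionally more torque.
    """
    if ratio <= 0:
        raise ValueError("ratio must be positive")
    output_rpm = engine_rpm / ratio
    output_torque = engine_torque * ratio
    return output_rpm, output_torque


# Hypothetical figures: 3000 rpm and 200 N*m at the crankshaft, 4:1 reduction.
rpm, torque = through_gearing(3000, 200, 4.0)
print(rpm, torque)
```

In this idealized model the product of speed and torque (and hence power) is the same on both sides of the gear set; a real transmission loses a little to friction.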

The simplest automobile transmission is the sliding-spur gear type with three or more forward speeds and reverse. The desired gear ratio is selected by manipulating a shift lever that slides a spur gear into the proper position to engage the various gears. A clutch is required to engage and disengage gears during the selection process. The necessity of learning to operate a clutch is eliminated by an automatic transmission. Most automatic transmissions employ a hydraulic torque converter, a device for transmitting and amplifying the torque produced by the engine. Each type provides for manual selection of reverse and low ranges that either prevent automatic upshifts or employ lower gear ratios than are used in normal driving. Grade-retard provisions are also sometimes included to supply dynamic engine braking on hills. Automatic transmissions not only require little skill to operate but also make possible better performance than is obtainable with designs that require clutch actuation.

In hydraulic transmissions, shifting is done by a speed-sensitive governing device that changes the position of valves that control the flow of hydraulic fluid. The vehicle speeds at which shifts occur depend on the position of the accelerator pedal, and the driver can delay upshifts until higher speed is attained by depressing the accelerator pedal further. Control is by hydraulically engaged bands and multiple-disk clutches running in oil, either by the driver’s operation of the selector lever or by speed- and load-sensitive electronic control in the most recent designs. Compound planetary gear trains with multiple sun gears and planet pinions have been designed to provide a low forward speed, intermediate speeds, a reverse, and a means of locking into direct drive. This unit is used with various modifications in almost all hydraulic torque-converter transmissions. All transmission control units are interconnected with vehicle emission control systems that adjust engine timing and air-to-fuel ratios to reduce exhaust emissions.

In the hydraulic torque converter, oil in the housing is accelerated outward by rotating vanes in the pump impeller and, reacting against vanes in the turbine impeller, forces them to rotate. The oil then passes into the stator vanes, which redirect it to the pump. The stator serves as a reaction member providing more torque to turn the turbine than was originally applied to the pump impeller by the engine. Thus, it acts to multiply engine torque by a factor of up to 2 1/2 to 1.

Blades in all three elements are specially contoured for their specific function and to achieve particular multiplication characteristics. Through a clutch linkage, the stator is allowed gradually to accelerate until it reaches the speed of the pump impeller. During this period torque multiplication gradually drops to approach 1 to 1.

The hydraulic elements are combined with two or more planetary gear sets, which provide further torque multiplication between the turbine and the output shaft.
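As a rough sketch of how the two stages combine, the converter's multiplication and the gear-set ratio can simply be multiplied. The 2.5 upper limit is the "2 1/2 to 1" figure quoted above; the planetary ratio of 3.0 is a hypothetical value chosen for illustration, and losses are ignored.

```python
MAX_CONVERTER_MULTIPLICATION = 2.5  # "up to 2 1/2 to 1", as quoted in the text


def overall_multiplication(converter_ratio, gear_ratio):
    """Combined torque multiplication from engine to output shaft (losses ignored)."""
    if not 1.0 <= converter_ratio <= MAX_CONVERTER_MULTIPLICATION:
        raise ValueError("converter ratio expected between 1.0 and 2.5")
    if gear_ratio <= 0:
        raise ValueError("gear ratio must be positive")
    return converter_ratio * gear_ratio


# Hypothetical planetary low-gear ratio of 3.0 (an assumption, not from the text).
print(overall_multiplication(2.5, 3.0))  # converter at maximum multiplication
print(overall_multiplication(1.0, 3.0))  # converter close to 1 to 1
```

The second call corresponds to the situation in which the converter's multiplication has dropped to about 1 to 1, so only the gear set contributes.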

Continuously (or infinitely) variable transmissions provide a very efficient means of transferring engine power and, at the same time, automatically changing the effective input-to-output ratio to optimize economy by keeping the engine running within its best power range. Most designs employ two variable-diameter pulleys connected by either a steel or high-strength rubber V-belt. The pulleys are split so that effective diameters may be changed by an electrohydraulic actuator to change the transmission ratio. This permits the electronic control unit to select the optimum ratio possible for maximum fuel economy and minimum emissions at all engine speeds and loads. Originally these units were limited to small cars, but belt improvements have made them suitable for larger cars.
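The effect of changing the pulley diameters can be sketched with the usual belt-drive relation, in which the speed ratio equals the ratio of effective diameters. This assumes an ideal belt that does not slip; the function names and the diameters used are hypothetical.

```python
def cvt_ratio(drive_diameter, driven_diameter):
    """Input-to-output speed ratio of an ideal belt drive with no slip."""
    if drive_diameter <= 0 or driven_diameter <= 0:
        raise ValueError("diameters must be positive")
    return driven_diameter / drive_diameter


def output_rpm(engine_rpm, drive_diameter, driven_diameter):
    """Output speed for a given engine speed and pair of effective diameters."""
    if drive_diameter <= 0 or driven_diameter <= 0:
        raise ValueError("diameters must be positive")
    return engine_rpm * drive_diameter / driven_diameter


# Hypothetical effective diameters in millimetres.
print(cvt_ratio(60, 150), output_rpm(3000, 60, 150))   # "low gear": output slower
print(cvt_ratio(150, 60), output_rpm(3000, 150, 60))   # "overdrive": output faster
```

Because the diameters can take any value between their limits, the ratio varies continuously rather than in fixed steps.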

Additional Information

The four-wheeled transportation vehicle symbolizes the promise and the pitfalls of the modern age.

An automobile is a self-propelled motor vehicle intended for passenger transportation on land. It usually has four wheels and an internal combustion engine fueled most often by gasoline, a liquid petroleum product. Known more commonly as a car, formerly as a motorcar, it is one of the most universal of modern technologies, manufactured by one of the world’s largest industries. More than 73 million new automobiles were produced worldwide in the year 2017.

The scientific and technical building blocks of the automobile go back several hundred years. For example, in the late 1600s, Dutch scientist Christiaan Huygens invented a type of internal combustion engine sparked by gunpowder. The “horseless carriage” in its modern form had been developed by the end of the 19th century. At that time, it was not clear which of three fuel sources would become most commercially successful: steam, electric power, or gasoline. Cars run by steam engines could go at high speeds but had a short range and were inconvenient to start. Battery-powered electric cars had a 38 percent share of the United States automobile market in 1900, but they also had a limited range and recharging stations were hard to find.

The gasoline-powered automobile won the competition. By 1920, it had overtaken the streets and byways of Europe and the United States. The manufacturing methods introduced by U.S. carmaker Henry Ford revolutionized industrial manufacturing. Ford was the first to install assembly lines in his factory to speed up production. Such techniques reduced the price of Ford’s Model T until it became affordable for most middle-class families. As the 20th century progressed, modern life came to seem increasingly inconceivable, or at least highly inconvenient, without access to a car. Nowadays, the U.S. population drives more than 4.8 trillion kilometers (three trillion miles) every year on average.

But this fundamental component of industrial and consumer society has played a major role in destabilizing Earth’s atmosphere, on which all living things depend. The average automobile emits between four and nine tons (3,629 to 8,165 kilograms; 8,000 to 18,000 pounds) of carbon dioxide and other greenhouse gases per year. Every gallon of gasoline burned to operate a car emits just under 9.1 kilograms (20 pounds) of carbon dioxide. The transportation sector as a whole, including cars, trucks, trains, and aircraft, became the largest source of U.S. greenhouse gas emissions in 2017. Air pollution from automobile exhaust is also a major problem, as are car accidents, which killed more than 100 people per day in the United States in 2016, according to the National Highway Traffic Safety Administration.
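The paired figures in the paragraph above are unit conversions, and they can be checked with a few lines of Python. This is only a sketch: it assumes that "tons" means US short tons of 2,000 pounds, and the function names are made up for illustration.

```python
# Unit conversions behind the emission figures quoted above.
KG_PER_POUND = 0.45359237        # exact, by definition of the avoirdupois pound
POUNDS_PER_SHORT_TON = 2000      # assumption: "tons" means US short tons


def short_tons_to_kg(tons: float) -> float:
    """Convert US short tons to kilograms."""
    return tons * POUNDS_PER_SHORT_TON * KG_PER_POUND


def pounds_to_kg(pounds: float) -> float:
    """Convert pounds to kilograms."""
    return pounds * KG_PER_POUND


low_kg = short_tons_to_kg(4)      # lower bound of yearly emissions per car
high_kg = short_tons_to_kg(9)     # upper bound of yearly emissions per car
per_gallon_kg = pounds_to_kg(20)  # CO2 per gallon of gasoline burned

print(round(low_kg), round(high_kg), round(per_gallon_kg, 1))
```

Under that assumption the results round to 3,629 kg, 8,165 kg and 9.1 kg, matching the numbers in the text.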

#2 Jokes » Nut Jokes - IV » Today 00:06:25

- Jai Ganesh

- Replies: 0

Q: How do you catch a squirrel interested in ornithology?

A: Climb a tree and act like a nuthatch (Sitta carolinensis).

* * *

Q: How do you catch a Polynesian squirrel?

A: Climb a tree and act like a coconut.

* * *

Q: How can you catch a little squirrel?

A: Climb a tree and pretend to be an almond (botanically speaking, almonds are fruits).

* * *

Q: How do you catch a squirrel with a Katy Perry fixation?

A: Climb a tree and act like a chestnut.

* * *

Q: How do you catch a mechanically inclined squirrel?

A: Climb a tree and act like a 9/16 12N nut.

* * *

#3 Re: Dark Discussions at Cafe Infinity » crème de la crème » Today 00:05:54

2459) Edward Mills Purcell

Gist:

Life

Edward Mills Purcell was born in Taylorville, Illinois. His father worked for a telephone company. Purcell studied electrical engineering at Purdue University in Indiana and physics at Harvard University. During World War II Purcell worked on the development of radar at MIT, but he returned afterwards to Harvard, where he did his Nobel Prize-awarded work and continued to work for the rest of his career. Purcell was married and had two sons.

Work

Protons and neutrons in nuclei act like small, rotating magnets. Atoms and molecules therefore align in a magnetic field. Radio waves can disturb their direction of rotation, but only in certain stages, in accordance with quantum mechanics. When the atoms return to their original positions, they emit electromagnetic radio waves with frequencies characteristic of different elements and isotopes. In 1946, Edward Purcell and Felix Bloch developed methods for precise measurement, making it possible to study different materials’ compositions.

Summary

E.M. Purcell (born Aug. 30, 1912, Taylorville, Ill., U.S.—died March 7, 1997, Cambridge, Mass.) was an American physicist who shared, with Felix Bloch of the United States, the Nobel Prize for Physics in 1952 for his independent discovery (1946) of nuclear magnetic resonance in liquids and in solids. Nuclear magnetic resonance (NMR) has become widely used to study the molecular structure of pure materials and the composition of mixtures.

During World War II Purcell headed a group studying radar problems at the Radiation Laboratory of the Massachusetts Institute of Technology, Cambridge. In 1946 he developed his NMR detection method, which was extremely accurate and a major improvement over the atomic-beam method devised by the American physicist Isidor I. Rabi.

Purcell became professor of physics at Harvard University in 1949 and in 1952 detected the 21-centimetre-wavelength radiation emitted by neutral atomic hydrogen in interstellar space. Such radio waves had been predicted by the Dutch astronomer H.C. van de Hulst in 1944, and their study enabled astronomers to determine the distribution and location of hydrogen clouds in galaxies and to measure the rotation of the Milky Way. In 1960 Purcell became Gerhard Gade professor at Harvard, and in 1979 he received the National Medal of Science. In 1980 he became professor emeritus.

Details

Edward Mills Purcell (August 30, 1912 – March 7, 1997) was an American physicist who shared the 1952 Nobel Prize for Physics for his independent discovery (published 1946) of nuclear magnetic resonance in liquids and in solids. Nuclear magnetic resonance (NMR) has become widely used to study the molecular structure of pure materials and the composition of mixtures. Friends and colleagues knew him as Ed Purcell.

Biography

Born and raised in Taylorville, Illinois, Purcell received his BSEE in electrical engineering from Purdue University, followed by his M.A. and Ph.D. in physics from Harvard University. He was a member of the Alpha Xi chapter of the Phi Kappa Sigma fraternity while at Purdue. After spending the years of World War II working at the MIT Radiation Laboratory on the development of microwave radar, Purcell returned to Harvard to do research. In December 1945, he discovered nuclear magnetic resonance (NMR) with his colleagues Robert Pound and Henry Torrey. NMR provides scientists with an elegant and precise way of determining chemical structure and properties of materials, and is widely used in physics and chemistry. It also is the basis of magnetic resonance imaging (MRI), one of the most important medical advances of the 20th century. For his discovery of NMR, Purcell shared the 1952 Nobel Prize in physics with Felix Bloch of Stanford University.

Purcell also made contributions to astronomy as the first to detect radio emissions from neutral galactic hydrogen (the famous 21 cm line due to hyperfine splitting), affording the first views of the spiral arms of the Milky Way. This observation helped launch the field of radio astronomy, and measurements of the 21 cm line are still an important technique in modern astronomy. He also made seminal contributions to solid state physics, with studies of spin-echo relaxation, nuclear magnetic relaxation, and negative spin temperature (important in the development of the laser). With Norman F. Ramsey, he was the first to question the CP symmetry of particle physics.

Purcell was the recipient of many awards for his scientific, educational, and civic work. He served as science advisor to Presidents Dwight D. Eisenhower, John F. Kennedy, and Lyndon B. Johnson. He was president of the American Physical Society, and a member of the American Philosophical Society, the National Academy of Sciences, and the American Academy of Arts and Sciences. He was awarded the National Medal of Science in 1979, and the Jansky Lectureship before the National Radio Astronomy Observatory. Purcell was also inducted into his Fraternity's (Phi Kappa Sigma) Hall of Fame as the first Phi Kap ever to receive a Nobel Prize.

Purcell was the author of the innovative introductory text Electricity and Magnetism. The book, a Sputnik-era project funded by an NSF grant, was influential for its use of relativity in the presentation of the subject at this level. The 1965 edition, now freely available due to a condition of the federal grant, was originally published as a volume of the Berkeley Physics Course. The book is also in print as a commercial third edition, as Purcell and Morin. Purcell is also remembered by biologists for his famous lecture "Life at Low Reynolds Number", in which he explained forces and effects dominating in limiting flow regimes (often at the micro scale). He also emphasized the time-reversibility of low Reynolds number flows with a principle referred to as the Scallop theorem.

Purcell died on March 7, 1997, in Cambridge, Massachusetts, aged 84.

#4 Dark Discussions at Cafe Infinity » Comedy Quotes - III » Today 00:05:36

- Jai Ganesh

- Replies: 0

Comedy Quotes - III

1. The male is always the pawn in a romantic comedy. Come together, break up, go chase her, get her, roll credits. That's what happens in all of them. - Matthew McConaughey

2. When I tried to branch out into comedy, I didn't do very well at it, so I went back to doing what I do naturally well, or what the audience expects from me - action pictures. - Sylvester Stallone

3. As for doing more dramatic work over comedy, I do whatever turns me on at the moment. - Sandra Bullock

4. You know, if you look all my stuff... If you go back to 'Saturday Night Live,' my stuff always has music, even a bunch of my comedy stuff - like in 'Shrek,' the donkey is always singing. Music is always there. - Eddie Murphy

5. All I need to make a comedy is a park, a policeman and a pretty girl. - Charlie Chaplin.

#5 This is Cool » Silver Nitrate » Yesterday 17:40:56

- Jai Ganesh

- Replies: 0

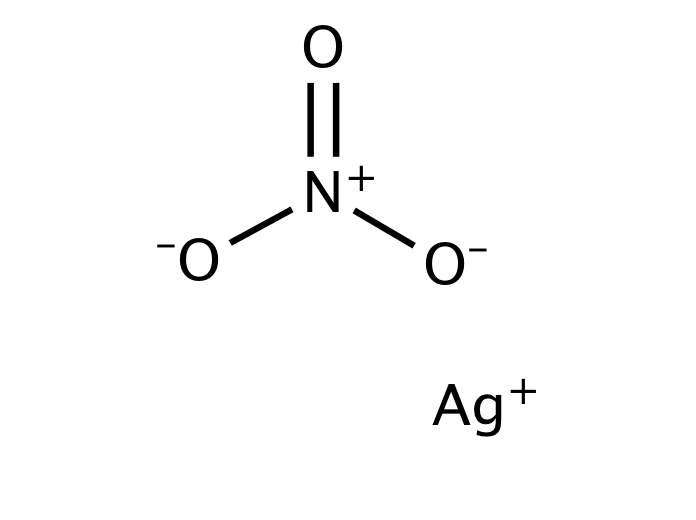

Silver Nitrate

Gist

Silver nitrate is a colorless, odorless inorganic compound commonly used as a versatile chemical precursor in photography, electroplating, and for manufacturing mirrors. It is widely used in medicine as a topical antiseptic and cauterizing agent for wound care and warts. It is prepared by dissolving silver in nitric acid and must be handled with care due to its corrosive nature.

Silver nitrate is used medically as an antiseptic, antibacterial, and cauterizing agent for wound care, wart removal, and controlling bleeding by releasing silver ions that kill microbes and form protective scabs, while industrially it serves as a precursor for silver compounds, in photographic emulsions, and for analytical chemistry (like testing for halides). It is applied topically to treat wounds, burns, and skin tags, though its use in newborns for eye infections is largely replaced by other treatments in the U.S.

Summary

Details

Silver nitrate is an inorganic compound with chemical formula AgNO3. It is a versatile precursor to many other silver compounds, such as those used in photography. It is far less sensitive to light than the halides. It was once called lunar caustic because silver was called luna by ancient alchemists who associated silver with the moon. In solid silver nitrate, the silver ions are three-coordinated in a trigonal planar arrangement.

Uses:

Precursor to other silver compounds

Silver nitrate is the least expensive salt of silver; it offers several other advantages as well. It is non-hygroscopic, in contrast to silver fluoroborate and silver perchlorate. In addition, it is relatively stable to light, and it dissolves in numerous solvents, including water. The nitrate can be easily replaced by other ligands, rendering AgNO3 versatile. Treatment with solutions of halide ions gives a precipitate of AgX (X = Cl, Br, I). When making photographic film, silver nitrate is treated with halide salts of sodium or potassium to form insoluble silver halide in situ in photographic gelatin, which is then applied to strips of tri-acetate or polyester. Similarly, silver nitrate is used to prepare some silver-based explosives, such as the fulminate, azide, or acetylide, through a precipitation reaction.

Halide abstraction

The silver cation, Ag+, reacts quickly with halide sources to produce the insoluble silver halide, which is a cream precipitate if Br− is used, a white precipitate if Cl− is used and a yellow precipitate if I− is used. This reaction is commonly used in inorganic chemistry to abstract halides.

Other silver salts with non-coordinating anions, namely silver tetrafluoroborate and silver hexafluorophosphate are used for more demanding applications.

Similarly, this reaction is used in analytical chemistry to confirm the presence of chloride, bromide, or iodide ions. Samples are typically acidified with dilute nitric acid to remove interfering ions, e.g. carbonate ions and sulfide ions. This step avoids confusion of silver sulfide or silver carbonate precipitates with that of silver halides. The color of precipitate varies with the halide: white (silver chloride), pale yellow/cream (silver bromide), yellow (silver iodide). AgBr and especially AgI photo-decompose to the metal, as evidenced by a grayish color on exposed samples.

The same reaction was used on steamships in order to determine whether or not boiler feedwater had been contaminated with seawater. It is still used to determine if moisture on formerly dry cargo is a result of condensation from humid air, or from seawater leaking through the hull.
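The halide test described above amounts to a small lookup from ion to expected precipitate. The sketch below only restates the colours given in the text; the dictionary and the helper name are hypothetical and not part of any chemistry library.

```python
# Precipitate colours for the silver nitrate halide test, as listed in the text.
PRECIPITATE = {
    "Cl-": ("AgCl", "white"),
    "Br-": ("AgBr", "pale yellow/cream"),
    "I-": ("AgI", "yellow"),
}


def describe_halide_test(halide: str) -> str:
    """Return the expected observation for one halide ion (hypothetical helper)."""
    formula, colour = PRECIPITATE[halide]
    return f"{halide} + Ag+ -> {formula} ({colour} precipitate)"


for ion in PRECIPITATE:
    print(describe_halide_test(ion))
```

A real analysis would also include the acidification step with dilute nitric acid mentioned above, which this lookup does not model.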

Organic synthesis

Silver nitrate is used in many ways in organic synthesis, e.g. for deprotection and oxidations. Ag+ binds alkenes reversibly, and silver nitrate has been used to separate mixtures of alkenes by selective absorption. The resulting adduct can be decomposed with ammonia to release the free alkene. Silver nitrate is highly soluble in water but is poorly soluble in most organic solvents, except acetonitrile (111.8 g/100 g, 25 °C).

Biology

In histology, silver nitrate is used for silver staining, for demonstrating reticular fibers, proteins and nucleic acids. For this reason it is also used to demonstrate proteins in polyacrylamide gel electrophoresis (PAGE) gels. It can be used as a stain in scanning electron microscopy.

Cut flower stems can be placed in a silver nitrate solution, which prevents the production of ethylene. This delays ageing of the flower.

Indelible ink

Silver nitrate produces a long-lasting stain when applied to skin and is one of the ingredients of indelible ink. An electoral stain makes use of this to mark a finger of people who have voted in an election, allowing easy identification to prevent double-voting.

In addition to staining skin, silver nitrate has a history of use in stained glass. In the 14th century, artists began using a "silver stain" (also known as a yellow stain) made from silver nitrate to create a yellow effect on clear glass. The stain would produce a stable color that could range from pale lemon to deep orange or gold. Silver stain was often used with glass paint, and was applied to the opposite side of the glass as the paint. It was also used to create a mosaic effect by reducing the number of pieces of glass in a window. Despite the age of the technique, this process of creating stained glass remains almost entirely unchanged.

Medicine:

Silver salts have antiseptic properties. In 1881 Credé introduced a method known as Credé's prophylaxis, which used dilute (2%) solutions of silver nitrate in newborn babies' eyes at birth to prevent contraction of gonorrhea from the mother, which could cause blindness via ophthalmia neonatorum. (Modern antibiotics are now used instead.)

Fused silver nitrate, shaped into sticks, was traditionally called "lunar caustic". It is used as a cauterizing agent, for example to remove granulation tissue around a stoma. General Sir James Abbott noted in his journals that in India in 1827 it was infused by a British surgeon into wounds in his arm resulting from the bite of a mad dog to cauterize the wounds and prevent the onset of rabies.

Silver nitrate is used to cauterize superficial blood vessels in the nose to help prevent nosebleeds.

Dentists sometimes use silver nitrate-infused swabs to heal oral ulcers. Silver nitrate is used by some podiatrists to kill cells located in the nail bed.

The Canadian physician C. A. Douglas Ringrose researched the use of silver nitrate for sterilization procedures, believing that silver nitrate could be used to block and corrode the fallopian tubes. The technique was ineffective.

Disinfection

Much research has been done to evaluate the ability of the silver ion to inactivate Escherichia coli, a microorganism commonly used as an indicator of fecal contamination and as a surrogate for pathogens in drinking water treatment. Concentrations of silver nitrate evaluated in inactivation experiments range from 10 to 200 micrograms per liter as Ag+. Silver's antimicrobial activity saw many applications prior to the discovery of modern antibiotics, when it fell into near disuse. Its association with argyria made consumers wary and led them to turn away from it when given an alternative.

Against warts

Repeated daily application of silver nitrate can induce adequate destruction of cutaneous warts, but occasionally pigmented scars may develop. In a placebo-controlled study of 70 patients, silver nitrate given over nine days resulted in clearance of all warts in 43% and improvement in warts in 26% one month after treatment compared to 11% and 14%, respectively, in the placebo group.

Safety

As an oxidant, silver nitrate should be properly stored away from organic compounds. It reacts explosively with ethanol. Despite its common usage in extremely low concentrations to prevent gonorrhea and control nosebleeds, silver nitrate is still very toxic and corrosive. Brief exposure will not produce any immediate side effects other than the purple, brown or black stains on the skin, but upon constant exposure to high concentrations, side effects will be noticeable, which include burns. Long-term exposure may cause eye damage. Silver nitrate is known to be a skin and eye irritant. Silver nitrate has not been thoroughly investigated for potential carcinogenic effect.

Silver nitrate is currently unregulated in water sources by the United States Environmental Protection Agency. However, if more than 1 gram of silver is accumulated in the body, a condition called argyria may develop. Argyria is a permanent cosmetic condition in which the skin and internal organs turn a blue-gray color. The United States Environmental Protection Agency had a maximum contaminant limit for silver in water until 1990, when it was determined that argyria did not impact the function of any affected organs despite the discolouration. Argyria is more often associated with the consumption of colloidal silver solutions than with silver nitrate, since silver nitrate is only used at extremely low concentrations to disinfect water. However, it is still important to be wary before ingesting any sort of silver-ion solution.

Additional Information

Silver nitrate is a chemical compound with the formula AgNO3. It consists of an ionic bond between the silver cation (Ag+) and the nitrate anion (NO3–). Due to the ionic nature of this compound, it readily dissolves in water and dissociates into its constituent ions.

Silver nitrate is a precursor to many compounds of silver, including the silver compounds used in photography. When compared to silver halides, which are used in photography due to their sensitivity to light, AgNO3 is quite stable when exposed to light.

Structure of AgNO3

The nitrate ion described above consists of one nitrogen atom surrounded by three oxygen atoms. The three nitrogen-oxygen bonds in this ion are equivalent. The formal charge assigned to the nitrogen atom is +1, whereas each oxygen atom carries an average charge of -⅔, so the net charge on the nitrate ion is +1 + 3 × (-⅔) = -1. This charge is balanced by the +1 charge of the Ag+ ion through the ionic bond in AgNO3. The structure of the nitrate ion is stabilized by resonance.

Properties of Silver Nitrate

Some important physical and chemical properties of silver nitrate are listed in this subsection.

Physical Properties

* The molar mass of silver nitrate is 169.872 grams per mole.

* AgNO3 has a colourless appearance in its solid-state and is odourless.

* In its solid state, it has a density of 4.35 grams per cubic centimetre. Its density in the liquid state at 210 °C is 3.97 g/cm³.

* The melting and boiling points of silver nitrate are 482.8 K and 713 K respectively. However, this compound tends to decompose at temperatures approaching its boiling point.

* Silver nitrate, like most ionic compounds, dissolves readily in water. Its solubility in water is 122 g/100 mL at 0 °C and 256 g/100 mL at 25 °C.

* The crystal structure of AgNO3 is orthorhombic.
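The molar mass listed above can be reproduced by summing atomic weights over the formula AgNO3. The following is a minimal sketch; the atomic weights are standard values rounded to the precision shown, and the helper name is made up for illustration.

```python
# Standard atomic weights in g/mol, rounded; treat the last digit as approximate.
ATOMIC_WEIGHT = {"Ag": 107.8682, "N": 14.007, "O": 15.999}

# Composition of silver nitrate, AgNO3: one Ag, one N, three O.
AGNO3 = {"Ag": 1, "N": 1, "O": 3}


def molar_mass(composition: dict) -> float:
    """Sum atomic weight times atom count for each element (hypothetical helper)."""
    return sum(ATOMIC_WEIGHT[element] * count for element, count in composition.items())


agno3_molar_mass = molar_mass(AGNO3)
print(f"{agno3_molar_mass:.3f} g/mol")
```

With these weights the sum comes to about 169.872 g/mol, in line with the value in the list.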

Chemical Properties

* The hazards of AgNO3 include its toxic and corrosive nature.

* The reaction between silver nitrate and ethanol is explosive.

* The silver present in the silver nitrate compound is displaced by copper, which forms copper nitrate. The chemical equation for this reaction is given by

2AgNO3 + Cu → Cu(NO3)2 + 2Ag

* When heated to 440 °C, this compound completely decomposes to give oxygen, nitrogen dioxide, and silver.

* The balanced equation for this decomposition is 2AgNO3 → 2Ag + 2NO2 + O2.

* It can be noted that even though metal nitrates generally decompose to yield metal oxides, the decomposition reaction of silver nitrate gives rise to elemental silver because silver oxide decomposes at an even lower temperature than AgNO3.
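The copper displacement equation in the list above (2AgNO3 + Cu → Cu(NO3)2 + 2Ag) fixes how much silver a given mass of copper can release: two moles of silver per mole of copper. A rough sketch of that arithmetic follows; it assumes excess silver nitrate and complete reaction, and the function name is hypothetical.

```python
# Atomic weights in g/mol (rounded standard values).
AG = 107.8682
CU = 63.546

# Stoichiometry of 2AgNO3 + Cu -> Cu(NO3)2 + 2Ag
MOL_AG_PER_MOL_CU = 2


def silver_from_copper(grams_cu: float) -> float:
    """Mass of silver deposited by a given mass of copper.

    Assumes silver nitrate is in excess and the reaction goes to completion.
    """
    mol_cu = grams_cu / CU
    return mol_cu * MOL_AG_PER_MOL_CU * AG


grams_ag = silver_from_copper(1.0)
print(f"{grams_ag:.2f} g of silver per gram of copper")
```

So each gram of copper that dissolves deposits a little under 3.4 grams of silver.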

Uses of Silver Nitrate

Silver nitrate has a wide range of applications in many fields such as biology, chemical synthesis, and medicine. Some of these uses of AgNO3 are listed below.

* Silver nitrate is a very versatile compound because the nitrate ion can be replaced by other ligands that can bind to the silver ion.

* Due to the ability of this compound to form a precipitate of silver halides when treated with halide ions, it is used while making photographic films.

* Many silver-based explosives can be prepared with a precipitation reaction of silver nitrate.

* In the field of inorganic chemistry, halides are extracted with the help of this compound.

* The branch of chemistry known as analytical chemistry uses this reaction to check for the presence of halide anions such as the iodide, bromide, or chloride ions.

* Mixtures of alkenes can be separated with the help of this compound since the silver cation binds with alkenes in a reversible fashion.

* When diluted with water to a concentration of 0.5%, silver nitrate can serve as an antiseptic in many medical setups.

* A diluted solution of AgNO3 can be administered to the eyes of a baby born to a mother suffering from gonorrhea; it combats the gonorrhea bacteria and protects the baby from the onset of blindness.

* This compound is also known to be used for the treatment and the removal of unwanted warts in human beings.

Frequently Asked Questions:

What are the uses of silver nitrate?

Silver nitrate is used in several ways in organic synthesis, for example in deprotection and oxidation reactions. The Ag+ ion binds alkenes reversibly, so silver nitrate can be used to separate mixtures of alkenes by selective absorption. The resulting adduct can be decomposed with ammonia to release the free alkene. Silver nitrate has also been used for silver staining (a process that employs silver or silver compounds to selectively change the appearance of a specific object). This compound is also used in medicine owing to its antiseptic qualities.

Is silver nitrate dangerous?

Silver nitrate is an oxidant and must, therefore, be kept away from organic compounds. Despite its widespread usage (especially in extremely low amounts) for the prevention of gonorrhoea and to stop bleeding from the nose, silver nitrate is often highly toxic and corrosive. Short-term exposure to this compound does not cause any immediate side effects apart from the development of a violet, brown or black stain on the part of the skin that was in contact with the silver nitrate. However, exposure to this compound over long periods of time is often accompanied by damage to the eyes. This compound is widely classified as an irritant to the skin and the eyes.

How is silver nitrate prepared?

Silver nitrate is usually prepared by dissolving silver in nitric acid. Common sources of silver for this reaction include silver bullion and silver foil. The products formed include silver nitrate, water, and nitrogen oxides; which by-products form depends on the concentration of the nitric acid used. This reaction must be carried out under a fume hood because poisonous oxides of nitrogen are evolved.

#6 Re: Jai Ganesh's Puzzles » General Quiz » Yesterday 16:46:19

Hi,

#10791. What does the term in Biology Immunity mean?

#10792. What does the term in Biology Immunoglobulin mean?

#7 Re: Jai Ganesh's Puzzles » English language puzzles » Yesterday 16:30:56

Hi,

#5997. What does the noun lagoon mean?

#5998. What does the noun laissez faire mean?

#8 Re: Jai Ganesh's Puzzles » Doc, Doc! » Yesterday 16:21:20

Hi,

#2595. In which part of human body is Cuneus situated?

#9 Science HQ » Ferric Sulfate » Yesterday 16:13:32

- Jai Ganesh

- Replies: 0

Ferric Sulfate

Gist

Ferric sulfate is a yellowish-brown or grayish-white inorganic salt used primarily as a coagulant in water treatment, a pigment, and a hemostatic agent in dentistry. It is highly acidic, produced by oxidizing iron(II) sulfate with sulfuric acid. It acts as an astringent.

The main function of ferric sulfate is as a hemostatic agent in different medical practices. This hemostatic function is achieved when ferric sulfate is applied directly in the damaged tissue. Once applied, ferric sulfate forms ferric ion-protein complex which helps the sealing of the damaged vessels mechanically.

Summary

Iron(III) sulfate or ferric sulfate (British English: sulphate instead of sulfate) is a family of inorganic compounds with the formula Fe2(SO4)3(H2O)n. A variety of hydrates are known, including the most commonly encountered form of "ferric sulfate". Solutions are used in dyeing as a mordant and as a coagulant for industrial wastes. Solutions of ferric sulfate are also used in the processing of aluminum and steel.

Production

Ferric sulfate solutions are usually generated from iron wastes. The actual identity of the iron species is often vague, but many applications do not demand high-purity materials. It is produced on a large scale by treating a hot solution of ferrous sulfate with sulfuric acid and an oxidizing agent. Typical oxidizing agents include chlorine, nitric acid, and hydrogen peroxide.

Details

Ferric sulfate has the molecular formula Fe2(SO4)3, and it is a dark brown or yellow chemical agent with acidic properties. It is produced by the reaction of ferrous sulfate with sulfuric acid and an oxidizing agent. It is used in fields such as dermatology and dentistry, and it is thought to owe its hemostatic properties to chemical interaction with blood proteins. According to the FDA, ferric sulfate is a direct food substance affirmed as GRAS (Generally Recognized As Safe).

Indication

Ferric sulfate was first used in dermatology as part of the Monsel's solution. This solution is an antihemorrhagic agent used in skin and mucosal biopsies. The use of ferric sulfate in dermatology is under review as ferric sulfate is corrosive and injurious and it can cause degenerative changes that are not observed with other alternatives like collagen.

Ferric sulfate is also used as a coagulative and hemostatic agent. It is a mechanical hemostatic agent applied directly to the damaged tissue.

In dentistry, ferric sulfate is used as a pulpotomy medicament to control pulpal bleeding, as an antibacterial agent and as a hemostatic reagent for restorative dentistry, for postextraction hemorrhage and for periradicular and endodontic surgery.

Pharmacodynamics

The administration of ferric sulfate as a dermatologic agent has been shown to cause delayed reepithelialization and dyspigmentation. Some studies have reported inflammation at the sites where ferric sulfate was applied.

Mechanism of action

The main function of ferric sulfate is as a hemostatic agent in different medical practices. This hemostatic function is achieved when ferric sulfate is applied directly in the damaged tissue. Once applied, ferric sulfate forms ferric ion-protein complex which helps the sealing of the damaged vessels mechanically. The formation of agglutinated protein complexes produces the generation of occlusion in the capillary orifices. The formation of the ferric protein complex is thought to be due to a chemical reaction between the acidic form of ferric sulfate and the blood proteins.

Absorption

Pharmacokinetic studies related to the absorption of ferric sulfate have not been performed.

Volume of distribution

Pharmacokinetic studies related to the volume of distribution of ferric sulfate have not been performed.

Protein binding

Ferric sulfate shows very high protein binding, a property thought to be due to its acidic profile.

Metabolism

Pharmacokinetic studies related to the metabolism of ferric sulfate have not been performed.

Additional Information

Ferric sulfate appears as a yellow crystalline solid or a grayish-white powder. The primary hazard is the threat to the environment. Immediate steps should be taken to limit its spread to the environment. It is used for water purification, and as a soil conditioner.

Iron(3+) sulfate is a compound of iron and sulfate in which the ratio of iron(3+) to sulfate ions is 2:3. It has a role as a catalyst, a mordant and an astringent. It is an iron molecular entity and a metal sulfate. It contains an iron(3+).

Iron(III) Sulfate is an inorganic compound that is also termed ferric sulfate. Its chemical formula is Fe2(SO4)3. In iron III sulfate, each iron atom has ionic bonds with the sulfate. A variety of hydrates of iron III sulfate are known, such as the nonahydrate and the monohydrate. In fact, the hydrates are the most commonly encountered form of "Iron III Sulfate". It is slightly soluble in water and very hygroscopic. It is sparingly soluble in alcohol and negligibly soluble in acetone and ethyl acetate. It is not soluble in sulfuric acid or ammonia. It emits toxic fumes of iron and sulfur oxide when heated to decomposition. It is a threat to the environment and immediate steps should be taken to control its spread in the environment. It is used as a coagulant in water purification, as an astringent, and as a soil conditioner. It is corrosive to copper, copper alloys, mild steel, and galvanized steel.

Uses of Iron III Sulfate

* Iron III Sulfate is mainly used as a coagulant in water purification and sewage treatment.

* The solution of ferric sulfate is used as a mordant in dyeing and calico printing.

* It is also used in the preparation of iron salts and pigments, in the ferric salt leaching process, in soil conditioners, and in the coal conversion process.

* It acts as a disinfectant, polymerization catalyst, and hemostatic agent for endodontic surgery.

* It is also used in etching aluminium and in pickling stainless steel and copper.

* It is also used as a solids removal agent and oxidizing agent.

Hazards

* Iron III sulfate is a threat to the environment and immediate steps should be taken to control its spread in the environment.

* Though Iron III Sulfate is a stable, nonflammable compound, it emits toxic fumes of iron and sulfur oxide when heated to decomposition.

* Prolonged exposure to Iron III Sulfate is toxic to the lungs and mucous membranes and may cause damage to them.

* Contact with it causes skin irritation and may also cause an allergic skin reaction.

* Inhalation of its dust irritates the nose and throat, and its ingestion irritates the mouth and stomach.

* It is harmful to aquatic life with long-lasting effects.

#10 Re: Jai Ganesh's Puzzles » 10 second questions » Yesterday 15:26:16

Hi,

#9881.

#11 Re: Jai Ganesh's Puzzles » Oral puzzles » Yesterday 15:01:00

Hi,

#6374.

#12 Re: Exercises » Compute the solution: » Yesterday 14:36:04

Hi,

2735.

#13 Re: This is Cool » Miscellany » Yesterday 00:04:26

2521) Odometer

Gist

An odometer is an instrument on a vehicle's dashboard that measures and displays the total distance traveled, typically in miles or kilometers. It works via mechanical gears or, on modern cars, electronic sensors that track wheel rotations to determine distance. The reading is crucial for maintenance tracking and resale value.

Odometer includes the root from the Greek word hodos, meaning "road" or "trip". An odometer shares space on your dashboard with a speedometer, a tachometer, and maybe a "tripmeter". The odometer is what crooked car salesmen tamper with when they want to reduce the mileage a car registers as having traveled.

Summary

An odometer is a device that registers the distance traveled by a vehicle. Modern digital odometers use a computer chip to track mileage. They make use of a magnetic or optical sensor that tracks pulses of a wheel that connects to a vehicle’s tires. This data is stored in the engine control module (ECM). Odometers use these stored values to determine the total distance traveled by a vehicle.

Analog or mechanical odometers consist of a train of gears (with a gear ratio of 1,000:1) that causes a drum, graduated in tenths of a mile or kilometre, to make one turn per mile or kilometre. A series of usually six such drums is arranged in such a way that one of the numerals on each drum is visible in a rectangular window. The drums are coupled so that 10 revolutions of the first cause one revolution of the second, and so forth, with the numbers appearing in the window representing the vehicle’s accumulated mileage.
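The drum coupling described above behaves like ordinary base-10 place value. The following is only a rough sketch of that idea, not a model of any particular instrument. The function name `drum_digits` and the choice of six drums are assumptions made for illustration, and real drums turn continuously rather than jumping between whole numerals.

```python
def drum_digits(tenths_travelled, drums=6):
    """Return the numerals shown in the window, leftmost drum first.

    The rightmost drum counts tenths of a mile (or kilometre). Each drum
    to its left makes one revolution for every 10 revolutions of its
    right-hand neighbour, which is the same as base-10 place value.
    """
    digits = []
    remaining = tenths_travelled
    for _ in range(drums):
        digits.append(remaining % 10)  # numeral currently in the window
        remaining //= 10               # 10 turns here = 1 turn of the next drum
    return digits[::-1]


# 123,456 tenths of a mile is 12,345.6 miles.
print(drum_digits(123456))  # [1, 2, 3, 4, 5, 6]
```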

The Roman architect and engineer Vitruvius is credited with inventing the initial version of an odometer in 15 BCE. The concept consisted of a chariot wheel that turned 400 times to show one Roman mile. This wheel was mounted in a frame with a 400-tooth cogwheel. For every 400 rotations of the chariot wheel, the cogwheel would drop one pebble. In 1642 the French mathematician Blaise Pascal used the same principle to create an apparatus that used gears and wheels. For every 10 rotations of a gear, a second gear advanced one place. The modern odometer was invented about 1847 by pioneers William Clayton and Orson Pratt, members of the Church of Jesus Christ of Latter-day Saints. They attached their apparatus to a wagon wheel while they traversed the plains from Nebraska to the Great Salt Lake valley.

Details

The odometer is one of an automobile’s most prominent yet least examined parts. It sits on the dashboard, behind the steering wheel, and displays the distance the car has run. Odometer readings are useful to car owners when selling the vehicle. They also help in evaluating mileage and planning car servicing.

Odometers can be mechanical, electronic, or a combination of the two. In countries that use imperial or US customary units, they are also known as mileometers or milometers; the former name is especially prevalent in the UK and the Commonwealth countries.

Meaning

An odometer is a device used to measure the distance travelled by a vehicle. It records the length of the path covered between the start point and the endpoint, rather than the straight-line displacement. The word is derived from two Greek words that mean path and measure.

Who invented the odometer?

Vitruvius, a Roman architect and engineer, is credited with inventing the odometer around 15 BCE. He used a standard chariot wheel that turned 400 times in a Roman mile, mounted in a frame with a 400-tooth cogwheel. Once per mile, the cogwheel engaged a gear that dropped a pebble into a box. The miles covered could then be found by counting the pebbles.

In the 17th century, Blaise Pascal invented a calculating machine called the Pascaline. It was a forerunner of the odometer: the Pascaline comprised gears and wheels, where each gear had ten teeth. Every time a gear completed a revolution, the next gear advanced one place. This principle is used in the mechanical odometer.

English military engineer Thomas Savery invented an odometer for ships. In 1775, Ben Franklin, a statesman and a writer, created a simple odometer that measured the mileage of the routes. He attached it to his carriage.

In 1847, the Mormon pioneers invented an odometer while crossing the plains from Missouri to Utah. They attached the device, also known as a roadometer, to a wagon wheel, and it counted the wheel’s revolutions as the wagon travelled. Orson Pratt and William Clayton designed the odometer, and Appleton Milo Harmon, a carpenter, built it.

In 1854, Samuel McKeen of Nova Scotia designed another early version of the odometer. He attached the device to the side of a carriage, where it measured the miles driven as the wheels turned.

Types of odometer:

There are two types of odometers.

1) Mechanical odometers

2) Electronic odometers

Mechanical odometers

Mechanical odometers start with the transmission. The transmission contains a small gear that drives the odometer. This small gear is connected to the speedometer drive cable, and the other end of the cable is connected to the instrument cluster.

The internal transmission gear turns once the engine is on and the car starts moving. The drive cable conveys this motion to another set of gears linked to the numbered digit wheels. The count therefore begins from the right side of the group of numbers.

The process continues until the distance travelled by the automobile forces the digits to the left to roll over. Counting repeats in this way until every digit reaches its maximum value. Then all the digits return to zero, and the count starts again.
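The return to zero after every digit reaches its maximum can be written as modular arithmetic. This is a minimal sketch under assumptions: the helper name `advance` and the six whole-unit digits are chosen only for illustration.

```python
def advance(reading, distance, digits=6):
    """Advance a whole-unit odometer reading by `distance`.

    With `digits` wheels, the largest reading is 10**digits - 1. One more
    unit past that sends every wheel back to zero, so the result wraps
    around modulo 10**digits.
    """
    return (reading + distance) % (10 ** digits)


print(advance(999_999, 1))   # 0
print(advance(999_990, 25))  # 15
```

One consequence is that a rolled-over mechanical odometer cannot, by itself, distinguish 15 miles from 1,000,015 miles.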

Mechanical odometers are not always one hundred per cent accurate.

Electronic odometers

After the mechanical odometers came the electronic ones. They are also known as digital odometers. They depend on the automobile’s electronics for establishing accurate mileage.

Electronic odometers, like mechanical ones, rely on a special gear in the transmission to change the count seen on the dashboard. However, a magnetic sensor replaces the drive cable and tracks the turns of this gear. Wires carry the resulting signal to the car’s onboard computer, which interprets the data and converts it into a mileage count.

The advantage of electronic odometers over mechanical ones is better accuracy. In addition, electronic odometers are harder to manipulate, so they give a more reliable count of the vehicle’s mileage.

Odometers often come with an additional meter called a trip odometer (or trip meter). It helps car owners determine the distance covered on any particular trip without interfering with the primary odometer reading.
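One way to picture a trip odometer is as a remembered starting point subtracted from the main reading. The sketch below assumes that design. The class `Odometer` and its methods are hypothetical names for illustration and do not describe how any specific vehicle implements the feature.

```python
class Odometer:
    """Main odometer with a resettable trip reading (illustrative only)."""

    def __init__(self, total=0.0):
        self.total = total        # lifetime distance, never reset
        self._trip_start = total  # main reading when the trip was last reset

    def drive(self, distance):
        if distance < 0:
            raise ValueError("distance must be non-negative")
        self.total += distance

    @property
    def trip(self):
        # The trip reading is derived from the main reading,
        # so resetting it cannot change the lifetime total.
        return self.total - self._trip_start

    def reset_trip(self):
        self._trip_start = self.total


odo = Odometer(total=12000.0)
odo.drive(30.0)
odo.reset_trip()
odo.drive(12.5)
print(odo.trip, odo.total)  # 12.5 12042.5
```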

Conclusion

The primary purpose of an odometer is to measure the distance travelled by the vehicle. In addition, odometer readings help determine maintenance milestones such as tyre rotations and oil changes. Dealers use odometer readings to estimate a vehicle’s value in the used car market. Resetting odometer values requires changing the entire transmission system of a car, so odometer readings are very difficult to reset. Tampering with the reading is also considered fraud and is punishable by law.

Additional Information

Mechanical odometers have been counting the miles for centuries. Although they are a dying breed, they are incredibly cool because they are so simple! A mechanical odometer is nothing more than a gear train with an incredible gear ratio.

The odometer we took apart for this article has a 1690:1 gear reduction! That means the input shaft of this odometer has to spin 1,690 times before the odometer will register 1 mile.
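As a quick check of what the 1690:1 figure means in practice, here is a small sketch that converts input-shaft revolutions into registered miles. The function name `miles_registered` is made up for this example; the ratio itself comes from the text above.

```python
GEAR_REDUCTION = 1690  # input-shaft revolutions per registered mile (from the text)


def miles_registered(input_revolutions):
    """Miles shown after the input shaft has turned `input_revolutions` times."""
    return input_revolutions / GEAR_REDUCTION


print(miles_registered(1690))  # 1.0
print(miles_registered(169))   # 0.1 (one step of the tenth-of-a-mile indicator)
```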

Odometers like this are being replaced by digital odometers that provide more features and cost less, but they aren't nearly as cool. In this article, we'll take a look inside a mechanical odometer, and then we'll talk about how digital odometers work.

Mechanical Odometers

Mechanical odometers are turned by a flexible cable made from a tightly wound spring. The cable usually spins inside a protective metal tube with a rubber housing. On a bicycle, a little wheel rolling against the bike wheel turns the cable, and the gear ratio on the odometer has to be calibrated to the size of this small wheel. On a car, a gear engages the output shaft of the transmission, turning the cable.

The cable snakes its way up to the instrument panel, where it is connected to the input shaft of the odometer.

The Gearing

This odometer uses a series of three worm gears to achieve its 1690:1 gear reduction. The input shaft drives the first worm, which drives a gear. Each full revolution of the worm only turns the gear one tooth. That gear turns another worm, which turns another gear, which turns the last worm and finally the last gear, which is hooked up to the tenth-of-a-mile indicator.
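Because each full turn of a worm advances its gear by one tooth, a single worm stage reduces speed by a factor equal to the number of teeth on that gear, and the stages multiply. The sketch below shows the arithmetic. The tooth counts (13, 13, 10) are an assumption: they are one combination whose product is 1690, not figures given in the article.

```python
from math import prod


def worm_train_reduction(gear_teeth):
    """Overall reduction of a chain of single-start worm stages.

    Each stage turns its gear one tooth per worm revolution, so a gear
    with N teeth gives an N:1 reduction; chained stages multiply.
    """
    return prod(gear_teeth)


ASSUMED_TEETH = (13, 13, 10)  # hypothetical tooth counts, chosen so the product is 1690

print(worm_train_reduction(ASSUMED_TEETH))  # 1690
```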

Each indicator has a row of pegs sticking out of one side, and a single set of two pegs on the other side. When the set of two pegs comes around to the white plastic gears, one of the teeth falls in between the pegs and turns with the indicator until the pegs pass. This gear also engages one of the pegs on the next bigger indicator, turning it a tenth of a revolution.

On the white wheel between the "3" and the "4," there are two pegs. One time per revolution, one of the gear teeth on the white gear falls in between these two pegs, causing the black gear next to it to move one-tenth of a revolution.

You can now see why, when your odometer "rolls over" a large number of digits (say from 19,999 to 20,000 miles), the "2" at the far left side of the display may not line up perfectly with the rest of the digits. A tiny amount of gear lash in the white helper gears prevents perfect alignment of all the digits. Usually, the display will have to get to 21,000 miles before the digits line up well again.

You can also see that mechanical odometers like this one are rewindable. In many older vehicles, driving in reverse could cause the mechanical odometer to run backward due to the straightforward gear mechanism. However, some mechanical odometers were equipped with mechanisms to prevent reverse counting, ensuring the mileage only increased regardless of the driving direction.

In the movie "Ferris Bueller's Day Off," in the scene where they have the car up on blocks with the wheels spinning in reverse -- that should've worked! In real life, the odometer would've turned back. Another trick is to hook the odometer's cable up to a drill and run it backwards to rewind the miles.

Computerized Odometers

If you make a trip to the bike shop, you most likely won't find any cable-driven odometers or speedometers. Instead, you will find bicycle computers. Bicycles with computers like these have a magnet attached to one of the wheels and a pickup attached to the frame. Once per revolution of the wheel, the magnet passes by the pickup, generating a voltage in the pickup. The computer counts these voltage spikes, or pulses, and uses them to calculate the distance traveled.

If you have ever installed one of these bike computers, you know that you have to program them with the circumference of the wheel. The circumference is the distance traveled when the wheel makes one full revolution. Each time the computer senses a pulse, it adds another wheel circumference to the total distance and updates the digital display.
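The pulse counting described above can be sketched in a few lines. This is only an illustration: the class `BikeComputer`, its method names, and the 2.1 m circumference are assumptions, not details of any real product. It multiplies the pulse count by the circumference instead of adding the circumference on every pulse, which gives the same total while avoiding accumulated rounding error.

```python
class BikeComputer:
    """Counts one pulse per wheel revolution and converts it to distance."""

    def __init__(self, wheel_circumference_m):
        # Distance covered in one wheel revolution, entered by the rider.
        self.wheel_circumference_m = wheel_circumference_m
        self.pulses = 0

    def on_pulse(self):
        # Called each time the magnet passes the pickup.
        self.pulses += 1

    @property
    def distance_m(self):
        return self.pulses * self.wheel_circumference_m


computer = BikeComputer(wheel_circumference_m=2.1)  # assumed wheel size
for _ in range(1000):
    computer.on_pulse()
print(round(computer.distance_m, 1))  # 2100.0
```

If the programmed circumference is wrong by some percentage, the displayed distance is wrong by the same percentage, which is why the setup step matters.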

Many modern cars use a system like this, too. Instead of a magnetic pickup on a wheel, they use a toothed wheel mounted to the output of the transmission and a magnetic sensor that counts the pulses as each tooth of the wheel goes by. Some cars use a slotted wheel and an optical pickup, like a computer mouse does. Just like on the bicycle, the computer in the car knows how much distance the car travels with each pulse, and uses this to update the odometer reading.
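For a car, the distance per pulse depends on how many pulses the toothed wheel produces for each turn of the road wheels. The sketch below is a rough illustration under stated assumptions. It supposes a fixed final drive ratio between the transmission output and the wheels, and the numbers (a 2.0 m tyre circumference, a 4.0 ratio, 8 teeth) are invented for the example.

```python
def distance_per_pulse_m(tyre_circumference_m, final_drive_ratio, teeth):
    """Distance the car moves between two sensor pulses.

    One road-wheel revolution needs `final_drive_ratio` revolutions of the
    transmission output, and each of those produces `teeth` pulses.
    """
    pulses_per_wheel_rev = final_drive_ratio * teeth
    return tyre_circumference_m / pulses_per_wheel_rev


def distance_km(pulses, metres_per_pulse):
    """Total distance for a given pulse count."""
    return pulses * metres_per_pulse / 1000.0


# Hypothetical values, for illustration only.
per_pulse = distance_per_pulse_m(2.0, 4.0, 8)
print(per_pulse)                       # 0.0625
print(distance_km(16_000, per_pulse))  # 1.0
```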

One of the most interesting things about car odometers is how the information is transmitted to the dashboard. Instead of a spinning cable transmitting the distance signal, the distance (along with a lot of other data) is transmitted over a single-wire communications bus from the engine control unit (ECU) to the dashboard. The car is like a local area network with many different devices connected to it. Here are some of the devices that may be connected to the computer network in a car:

* Engine control unit (ECU)

* Climate control system

* Dashboard

* Power window controls

* Radio

* Anti-lock braking system

* Air bag control module

* Body control module (operates the interior lights, etc.)

* Transmission control module

Many vehicles use a standardized communication protocol, called SAE J1850, to enable all of the different electronics modules to communicate with each other.

The engine control unit counts all of the pulses and keeps track of the overall distance traveled by the car. This means that if someone tries to "roll back" the odometer, the value stored in the ECU will disagree. This value can be read using a diagnostic computer, which all car-dealership service departments have.

Several times per second, the ECU sends out a packet of information consisting of a header and the data. The header is just a number that identifies the packet as a distance reading, and the data is a number corresponding to the distance traveled. The instrument panel contains another computer that knows to look for this particular packet, and whenever it sees one it updates the odometer with the new value. In cars with digital odometers, the dashboard simply displays the new value. Cars with analog odometers have a small stepper motor that turns the dials on the odometer.
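The header-and-data exchange described above can be sketched as follows. This is a simplified illustration, not the SAE J1850 frame format. The header value `0x10`, the `Packet` class, and the `InstrumentPanel` class are all hypothetical.

```python
from dataclasses import dataclass

DISTANCE_HEADER = 0x10  # hypothetical identifier, not a real J1850 value


@dataclass
class Packet:
    header: int  # identifies what kind of reading this is
    data: int    # the reading itself, e.g. total distance


class InstrumentPanel:
    """Listens to every packet on the bus but acts only on distance readings."""

    def __init__(self):
        self.odometer = 0

    def receive(self, packet):
        if packet.header == DISTANCE_HEADER:
            # Display whatever total the ECU reports.
            self.odometer = packet.data
        # Packets with other headers are meant for other modules and are ignored.


panel = InstrumentPanel()
panel.receive(Packet(header=0x22, data=99))                 # not a distance packet
panel.receive(Packet(header=DISTANCE_HEADER, data=54321))
print(panel.odometer)  # 54321
```

In this sketch the panel keeps no count of its own. It only shows the ECU's value, which matches the earlier point that the ECU holds the authoritative total.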

#14 Re: Dark Discussions at Cafe Infinity » crème de la crème » Yesterday 00:04:00

2458) Felix Bloch

Gist:

Life